Mathematician Jason Rosenhouse has written an extraordinary post in which he pronounces the Intelligent Design movement officially dead: “Truly, ID is dead,” he declares. In this post, I’d like to put forward three good mathematical arguments illustrating why the Intelligent Design movement remains very much alive. All of these arguments come from scientists who are highly qualified in the fields they are writing about. Two of the scientists are committed evolutionists (one is a Darwinian, the other an adherent of the “nearly-neutral” theory of evolution), and the third is the holder of a Caltech Ph.D. who has written two articles for the Journal of Molecular Biology (see here and here for abstracts), as well as co-authoring an article published in the Proceedings of the National Academy of Sciences, an article in Biochemistry and an article published in PLoS ONE; his work has also been reviewed in Nature. As far as I am aware, none of the three arguments listed below has been refuted – indeed, I have yet to see a satisfactory response to any of them. If Professor Rosenhouse wishes to respond, he is welcome to do so.

The mathematical arguments listed below relate to three topics: the origin of protein folds, the origin of life, and the time available for evolution.

1. The origin of protein folds

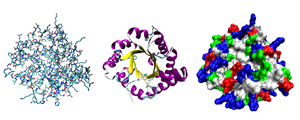


Above: Three possible representations of the three-dimensional structure of the protein triose phosphate isomerase. Illustration courtesy of Wikipedia.

Every living thing on this planet contains proteins, which are made up of amino acids. Proteins are fundamental components of all living cells and include many substances, such as enzymes, hormones, and antibodies, that are necessary for the proper functioning of an organism. They’re involved in practically all biological processes. To fulfill their tasks, proteins need to be folded into a complicated three-dimensional structure. Proteins can tolerate slight changes in their amino acid sequences, but a single change of the wrong kind can render them incapable of folding up, and hence, totally incapable of doing any kind of useful work within the cell. That’s why not every amino-acid sequence represents a protein: only one that can fold up properly and perform a useful function within the cell can be called a protein.

Now let’s consider a protein made up of 150 amino acids – which is a fairly modest length. All living things known to scientists – including even the humblest bacteria – contain at least some proteins which are of this length. And as Dr. Douglas Axe points out in the paper discussed below: “…[P]rotein chains have to be of a certain length in order to fold into stable three-dimensional structures. This requires several dozen amino acid residues in the simplest structures, with more complex structures requiring much longer chains.”

If we compare the number of 150-amino-acid sequences that correspond to some sort of functional protein to the total number of possible 150-amino-acid sequences, we find that only a tiny proportion of possible amino acid sequences are capable of performing a function of any kind. The vast majority of amino-acid sequences are good for nothing.

So, what proportion are we talking about here? An astronomically low proportion: 1 in 10 to the power of 74, according to work done by Dr. Douglas Axe. When we add the requirement that a protein has to be made up of amino acids that are either all left-handed or all right-handed, and the requirement that the amino acids have to be held together by peptide bonds, we find that only 1 in 10 to the power of 164 (that is, 1 in 1 followed by 164 zeroes) amino-acid sequences of that length are suitable proteins. The number of discrete events (or elementary bit-operations) that have occurred during the entire history of the universe has been estimated at less than 10^150; Dr. Seth Lloyd’s calculations put the number of elementary operations the universe could have performed at about 10^120. Since 10^164 exceeds even the larger of these figures by 14 orders of magnitude, it should be obvious to the reader that the 4.54 billion years of Earth history is nowhere near enough time for a protein to form as a result of unguided natural processes.
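The exponents in this paragraph are easier to compare on a log scale. The Python sketch below takes the 10^164 and 10^150 figures as given (it does not rederive them) and, for context, also computes the total number of 150-residue chains that can be built from the 20 standard amino acids, 20^150, which works out to roughly 10^195. It is only a back-of-the-envelope check, not a model of protein chemistry.

```python
import math

# Figures taken from the text as given; they are not rederived here.
SUITABLE_ODDS_EXP = 164  # 1 in 10^164 chains of length 150 are suitable proteins
EVENT_BOUND_EXP = 150    # stated upper bound on events in the history of the universe

def log10_sequence_space(length: int, alphabet: int = 20) -> float:
    """log10 of the number of chains of the given length (20 standard amino acids)."""
    return length * math.log10(alphabet)

shortfall = SUITABLE_ODDS_EXP - EVENT_BOUND_EXP

print(f"20^150 is about 10^{log10_sequence_space(150):.1f}")
print(f"1 in 10^{SUITABLE_ODDS_EXP} vs. at most 10^{EVENT_BOUND_EXP} events: "
      f"short by 10^{shortfall}")
```

Working with logarithms avoids building integers with nearly two hundred digits just to compare their sizes.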

To get round this difficulty, some scientists have hypothesized that maybe Nature has a hidden bias that makes proteins more likely to form, but all the evidence suggests there isn’t any such bias – and even if there were one, that would need explaining too. Why should Nature be biased in favor of building structures that can fold up neatly and do a useful job in the cell, when it has no foresight?

Finally, scientists have suggested that maybe another molecule – RNA – formed first, and proteins came later, but the same problem arises for RNA: the vast majority of possible sequences are non-functional, and only an astronomically tiny proportion work. Robert Shapiro (1935-2011) was professor emeritus of chemistry at New York University. In a discussion hosted by Edge in 2008, entitled, Life! What a Concept, with scientists Freeman Dyson, Craig Venter, George Church, Dimitar Sasselov and Seth Lloyd, Professor Shapiro explained why he found the RNA world hypothesis incredible:

… I looked at the papers published on the origin of life and decided that it was absurd that the thought of nature of its own volition putting together a DNA or an RNA molecule was unbelievable.

I’m always running out of metaphors to try and explain what the difficulty is. But suppose you took Scrabble sets, or any word game sets, blocks with letters, containing every language on Earth, and you heap them together and you then took a scoop and you scooped into that heap, and you flung it out on the lawn there, and the letters fell into a line which contained the words “To be or not to be, that is the question,” that is roughly the odds of an RNA molecule, given no feedback — and there would be no feedback, because it wouldn’t be functional until it attained a certain length and could copy itself — appearing on the Earth.

How, then, can we account for the origin of proteins that could fold and perform useful tasks? In a recent article, Dr. Douglas Axe has argued that we should be looking well outside the Darwinian framework for an adequate explanation of protein fold origins. The following excerpts are taken from that article, The Case Against a Darwinian Origin of Protein Folds, BIO-Complexity 2010(1):1-12, doi:10.5048/BIO-C.2010.1.

Abstract

Four decades ago, several scientists suggested that the impossibility of any evolutionary process sampling anything but a minuscule fraction of the possible protein sequences posed a problem for the evolution of new proteins. This potential problem – the sampling problem – was largely ignored, in part because those who raised it had to rely on guesswork to fill some key gaps in their understanding of proteins. The huge advances since that time call for a careful reassessment of the issue they raised. Focusing specifically on the origin of new protein folds, I argue here that the sampling problem remains. The difficulty stems from the fact that new protein functions, when analyzed at the level of new beneficial phenotypes, typically require multiple new protein folds, which in turn require long stretches of new protein sequence. Two conceivable ways for this not to pose an insurmountable barrier to Darwinian searches exist. One is that protein function might generally be largely indifferent to protein sequence. The other is that relatively simple manipulations of existing genes, such as shuffling of genetic modules, might be able to produce the necessary new folds. I argue that these ideas now stand at odds both with known principles of protein structure and with direct experimental evidence. If this is correct, the sampling problem is here to stay, and we should be looking well outside the Darwinian framework for an adequate explanation of fold origins.

Excerpt from the paper:

“Based on analysis of the genomes of 447 bacterial species, the projected number of different domain structures per species averages 991. Comparing this to the number of pathways by which metabolic processes are carried out, which is around 263 for E. coli, provides a rough figure of three or four new domain folds being needed, on average, for every new metabolic pathway. In order to accomplish this successfully, an evolutionary search would need to be capable of locating sequences that amount to anything from one in 10^159 to one in 10^308 possibilities, something the neo-Darwinian model falls short of by a very wide margin.” (p. 11)
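The “three or four” figure in this excerpt is a simple ratio. The short Python sketch below just reproduces that division using the two numbers Dr. Axe gives; like the excerpt, it treats a bacterial average and an E. coli pathway count as roughly comparable, which is an approximation rather than a precise correspondence.

```python
# Figures quoted from Axe (2010), p. 11
domain_folds_per_species = 991   # projected average across 447 bacterial genomes
metabolic_pathways_e_coli = 263  # approximate number of pathways in E. coli

folds_per_pathway = domain_folds_per_species / metabolic_pathways_e_coli
print(f"about {folds_per_pathway:.2f} new domain folds per metabolic pathway")
```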

A more detailed, non-technical discussion of Dr. Axe’s paper can be found in my recent post, Barriers to macroevolution: what the proteins say. Curiously, none of the critics who responded to my post were able to point out any flaws in Dr. Axe’s reasoning, and I haven’t found any convincing online refutations of Dr. Axe’s paper, either.

How, I would ask, can Professor Rosenhouse assert with swaggering confidence that Intelligent Design is dead, when scientists haven’t even demonstrated the possibility of a single protein arising by unguided natural processes, and when the best data we have says that the time available is orders of magnitude too short?

2. The origin of life


Above: The genetic code. Illustration courtesy of Wikipedia.

Dr. Eugene V. Koonin is a Senior Investigator at the National Center for Biotechnology Information, National Library of Medicine, at the National Institutes of Health in Bethesda, Maryland, USA. Dr. Koonin is also a recognized authority in the field of evolutionary and computational biology. Recently, he authored a book, titled, The Logic of Chance: The Nature and Origin of Biological Evolution (Upper Saddle River: FT Press, 2011). I think we can fairly assume that when it comes to origin-of-life scenarios, he knows what he’s talking about.

In Appendix B of his book, The Logic of Chance, Dr. Koonin argues that the origin of life is such a remarkable event that we need to postulate a multiverse, containing a very large (and perhaps infinite) number of universes, in order to explain the emergence of life on Earth. The reason why Dr. Koonin believes we need to postulate a multiverse in order to solve the riddle of the origin of life on Earth is that all life is dependent on replication and translation systems which are fiendishly complex. As Koonin puts it:

The origin of the translation system is, arguably, the central and the hardest problem in the study of the origin of life, and one of the hardest in all evolutionary biology. The problem has a clear catch-22 aspect: high translation fidelity hardly can be achieved without a complex, highly evolved set of RNAs and proteins but an elaborate protein machinery could not evolve without an accurate translation system.

In his book, and also in his peer-reviewed article, The Cosmological Model of Eternal Inflation and the Transition from Chance to Biological Evolution in the History of Life (Biology Direct 2 (2007): 15, doi:10.1186/1745-6150-2-15), Dr. Koonin provides what he calls “a rough, toy calculation, of the upper bound of the probability of the emergence of a coupled replication-translation system in an O-region.” (By an “O-region,” Dr. Koonin means an observable universe, such as the one we live in.) The calculations on pages 434-435 in Appendix B of The Logic of Chance are adapted from that article. The model itself is not intended to be a realistic one – that’s why it’s called a toy model – but it makes some very generous assumptions about the availability of RNA on the primordial Earth. Dr. Koonin’s “back-of-the-envelope calculation” of the odds of the emergence of “a primitive, coupled replication-translation system” assumes that such a system requires, at a minimum, the formation of “two rRNAs with a total size of at least 1000 nucleotides,” “10 primitive adaptors of about 30 nucleotides each,” and “one RNA encoding a replicase” with “about 500 nucleotides.” Using this toy model, he estimates that the odds of even so basic a life-form emerging in the observable universe are 1 in 10^1018, that is, 1 in 1 followed by 1,018 zeroes. He concludes:

In other words, even in this toy model that assumes a deliberately inflated rate of RNA production, the probability that a coupled translation-replication emerges by chance in a single O-region is P < 10^-1018. Obviously, this version of the breakthrough stage can be considered only in the context of a universe with an infinite (or, at the very least, extremely vast) number of O-regions.
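To get a feel for the sizes involved, the Python sketch below adds up the minimum RNA lengths Dr. Koonin lists (1000 + 10 × 30 + 500 = 1800 nucleotides) and computes the number of possible sequences of that total length over the four-letter RNA alphabet. This is my own illustration, not Dr. Koonin’s calculation: his bound of 10^-1018 also depends on his assumptions about how much RNA is produced in an O-region, which are not reproduced here, and treating the whole set of molecules as one 1800-letter string is a simplifying assumption.

```python
import math

# Minimum components listed in Koonin's toy model (lengths in nucleotides)
components = {
    "two rRNAs (total)": 1000,
    "10 adaptors of ~30 nt": 10 * 30,
    "replicase-encoding RNA": 500,
}

total_nt = sum(components.values())     # combined minimum length
log10_space = total_nt * math.log10(4)  # log10 of 4**total_nt possible sequences

print(f"total length: {total_nt} nt")
print(f"4^{total_nt} is about 10^{log10_space:.0f}")
```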

Dr. Koonin evades the theistic implications of his calculations by positing a multiverse – a “solution” which fails on no less than five grounds, which I discussed in detail in my recent post, Professor Krauss Objects.

I should add that Dr. Koonin’s 2007 paper, in which he provided a mathematical description of his toy model, passed review by a panel of four peer reviewers, including one from Harvard University, who wrote:

In this work, Eugene Koonin estimates the probability of arriving at a system capable of undergoing Darwinian evolution and comes to a cosmologically small number…

The context of this article is framed by the current lack of a complete and plausible scenario for the origin of life. Koonin specifically addresses the front-runner model, that of the RNA-world, where self-replicating RNA molecules precede a translation system. He notes that in addition to the difficulties involved in achieving such a system is the paradox of attaining a translation system through Darwinian selection. That this is indeed a bona-fide paradox is appreciated by the fact that, without a shortage [of] effort, a plausible scenario for translation evolution has not been proposed to date. There have been other models for the origin of life, including the ground-breaking Lipid-world model advanced by Segre, Lancet and colleagues (reviewed in EMBO Reports (2000), 1(3), 217-222), but despite much ingenuity and effort, it is fair to say that all origin of life models suffer from astoundingly low probabilities of actually occurring…

…[F]uture work may show that starting from just a simple assembly of molecules, non-anthropic principles can account for each step along the rise to the threshold of Darwinian evolution. Based upon the new perspective afforded to us by Koonin this now appears unlikely. (Emphases mine – VJT.)

Finally, I’d like to point out that Dr. Koonin’s calculations have been reviewed by Dutch biologist Gert Korthof, in an online article titled, The Koonin threshold for the Origin of Life on Earth. At the end of his review, Dr. Korthof proposes an experiment that could verify or falsify Koonin’s model.

Think about that. A leading evolutionary biologist has calculated that the odds of even a very basic life-form – a coupled replication-translation system – emerging in the observable universe are 1 in 1 followed by 1,018 zeroes. To avoid the theistic implications of his argument, he posits a multiverse – a solution which, as I’ve argued before, is fraught with difficulties. How, then, can Professor Rosenhouse brazenly declare that Intelligent Design is dead?

3. Is there enough time for evolution to have occurred?


Above: An implementation of a Turing machine. Illustration courtesy of Wikipedia.

In 2011, I wrote a post titled, At last, a Darwinist mathematician tells the truth about evolution. My post contained a partial transcript of a talk given by Dr. Gregory Chaitin, a world-famous mathematician and computer scientist, at PPGC UFRGS (Programa de Pos-Graduacao em Computacao da Universidade Federal do Rio Grande do Sul, the Graduate Program in Computing at the Federal University of Rio Grande do Sul), in Brazil, on 2 May 2011. Professor Chaitin is also the author of a book titled, Proving Darwin: Making Biology Mathematical (Pantheon, 2012, ISBN: 978-0-375-42314-7; paperback, Vintage, 2013, ISBN: 978-1-400-07798-4). As a mathematician who is committed to Darwinism, Chaitin is trying to create a new mathematical version of Darwin’s theory which proves that evolution can really work. However, in his 2011 talk, Professor Chaitin was refreshingly honest and up-front about the mathematical shortcomings of the theory of evolution in its current form. What follows is a series of excerpts from Chaitin’s talk (headings are mine – VJT):

The mathematical inadequacy of Darwin’s theory

[W]hat I want to do is make a theory about randomly evolving, mutating and evolving software – a little toy model of evolution where I can prove theorems, because I love Darwin’s theory, I have nothing against it, but, you know, it’s just an empirical theory. As a pure mathematician, that’s not good enough…

Life is evolving software

So here’s the way I’m looking at biology now, in this viewpoint. Life is evolving software. Bodies are unimportant, right? The hardware is unimportant. The software is important…

The relevance of computational theory to understanding how life works: a brief history

If you look at it from the point of view of biology, … I’ll give you a revisionist version of this. Turing fumbles the ball. He’s surrounded by software everywhere, in the natural world, in the biosphere. There’s software everywhere, he’s just finally realized what it is that makes biology work, but he doesn’t get it… He’s too trapped in the pre-Turing viewpoint….

So it’s von Neumann, … who did not come up with the original idea that Turing did, but who appreciated infinitely better than Turing the full scope and implications of this new viewpoint. And this is sort of typical of von Neumann. He’s a wonderful mathematician, he’s my hero….

So von Neumann looked at this work of Turing and said, “This applies not just to artificial automata, Turing machines, which are computers, it applies in the biological world.” And von Neumann has a paper published in 1951, … called … “The General and Logical Theory of Automata”, and he’s talking about natural automata and artificial automata. Artificial automata are computers; natural automata are biological organisms.

OK, so software is everywhere there, and what I want to do is make a theory about randomly evolving, mutating and evolving software – a little toy model of evolution where I can prove theorems, because I love Darwin’s theory, I have nothing against it, but, you know, it’s just an empirical theory. As a pure mathematician, that’s not good enough…

Modern organisms are too messy to use if you want a mathematical model which rigorously demonstrates the possibility of evolution

Biology is too much of a mess. DNA is a programming language which is billions of years old and which has grown by accretion, and … we know a little bit about it, but it’s just a catastrophe. So instead of working with randomly mutating DNA, let’s work with randomly mutating computer programs, where we invented the language, and we can keep it a theoretical computer program, one that a theoretician would make, so you know, you specify the semantics, you know the rules of the game.

And … I’m proposing that as a more tractable question to work on… So I propose to call this new field metabiology, and you’re all welcome to get involved in it. So far there’s just me and my wife Virginia working on this. It’s wide open. And the idea is to exploit this analogy between artificial software – computer programs – and natural programs, DNA.

[I]nstead of trying to prove theorems about what happens with random mutations on DNA, we’re going to try to prove theorems about random mutations on computer programs. OK. This is the proposal – to make a field like that.

A toy model is required to rigorously demonstrate the possibility of evolution

So, my organisms are software organisms. They are only software. My organisms are programs. You pick some language, and the space of all possible organisms is the space of all possible programs in that language. And this is a very rich space. So that’s the idea…

Toy models are extremely unrealistic

So how do we make a toy model of evolution? …

OK, here comes the toy model… So the model is very simple. There’s only one organism. Not only there’s no bodies, it’s a program… There is no population, there is no environment, there is no competition… [but] it’ll evolve anyway. Let me tell you what there is. So it’s a single software organism, it’s a program, and it will be mutated and evolving … What does this program do? Well, I’m interested in programs that calculate a single positive whole number, and then they halt… So my organism is really a pure mathematician or a computer scientist. And the reason that I’m going to get them to evolve is that I’m going to give them something challenging to do, something which can use an unlimited amount of creativity. So what is the goal of this organism? … How do I decide if an organism has become more fit? What is the notion of fitness … for this organism? What is its goal in life? Well its goal is … the Busy Beaver problem. It’s a very simple problem … and it’s just the idea of: name a very big positive integer. And this might sound like a trivial, stupid thing, but it’s not. It’s sort of the simplest problem which requires an unlimited amount of creativity, which means that in a way, Godel’s incompleteness theorem applies to it. There is no general method… No closed system will give you the best possible answer. There are always better and better ways to do it. So the reason is basically that this problem is equivalent to Turing’s halting problem. So that’s the theoretical basis of why this problem is so fundamental and can utilize an unlimited amount of mathematical creativity. OK, so the fitness of this organism, which comes from the program, is the number it calculates. The bigger the number, the better the organism. So that’s their goal in life. ..These are mathematicians and their aim is to calculate enormously big numbers. The bigger, the better…

The breakthrough is to allow algorithmic mutations – very powerful mutational mechanisms which, as far as I know, are not the case in biology, but nobody really knows all the mutational mechanisms in biology. So this is a very high-level kind of mutation. An algorithmic mutation means: I will take a program – my mutation will be a program – that I give the current organism as input and it produces a mutated organism as output. So that’s a function. It’s a computable function. That’s a very powerful notion of mutation. And the crucial thing is: what is the probability going to be?

OK, so this is the idea. If the algorithmic mutation M which is a function basically, a computable function which takes the organism and maps it into the mutated organism. If that’s a K-bit program then this will have a probability of 1 over 2 to the K. That’s a very natural measure, and for those of you who have heard of the halting probability, which was mentioned in the very nice introduction, which is the probability that a Turing machine halts, which is my version of Turing’s halting problem. You see this field knows how to associate probabilities with algorithms in a natural way. …Those of us who work in this field are convinced that this is a natural way – we can give various reasons – for associating probabilities on computer programs. OK?

So from a mathematical view this is now a very natural way to assign a probability to a mutation.…
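In algorithmic information theory the 2^-K weighting is usually applied to a prefix-free set of programs, meaning no program is an initial segment of another. The Kraft inequality then guarantees that the weights add up to at most 1, which is what lets them behave like probabilities, and it is also how the halting probability is made well defined. The Python sketch below illustrates only that bookkeeping, using a hypothetical handful of bit-string “mutation programs”; it does not execute any programs and is not Chaitin’s code.

```python
from fractions import Fraction

def mutation_weight(program_bits: str) -> Fraction:
    """Weight 2^-K for a mutation program that is K bits long."""
    return Fraction(1, 2 ** len(program_bits))

def is_prefix_free(programs: list[str]) -> bool:
    """True if no program in the list is a proper prefix of another."""
    return not any(a != b and b.startswith(a) for a in programs for b in programs)

# Hypothetical mutation programs, written as bit strings for illustration only.
programs = ["0", "10", "110", "1110"]

total = sum(mutation_weight(p) for p in programs)
print(is_prefix_free(programs), total)  # True 15/16
```

Using exact fractions rather than floats keeps the sum of the weights exact, so the “at most 1” property can be checked without rounding error.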

Even toy models of evolution require a Turing oracle in order to work properly

Now one important thing to say is that there’s a little problem with this: we need something which Turing invented, not in his famous paper of 1936, but in a less well-known but pretty wonderful paper of 1938, which are called oracles….

And basically what an oracle is, it’s something you add to a normal computer, to a normal Turing machine, that enables the machine to do things that are uncomputable. You’re allowed to ask God or someone to give you the answer to some question where you can’t compute the answer, and the oracle will immediately give you the answer, and you go on ahead. So I need an oracle to enable me to carry out this random walk… [Y]ou can get stuck running the new organism to see what it calculates, you see, and you’ll go on forever, and it’ll never calculate anything, so you’re just stuck there and the random walk dies. So we’ve got to keep that from happening. And if you actually want to do that, as a thought experiment, you would need an oracle.….

The first thing I … want to see is: how fast will this system evolve? How big will the fitness be? How big will the number be that these organisms name? How quickly will they name the really big numbers? So how can we measure the rate of evolutionary progress, or mathematical creativity of my little mathematicians, these programs? Well, the way to measure the rate of progress, or creativity, in this model, is to define a thing called the Busy Beaver function.
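Chaitin’s organisms are programs that output a single positive integer, which is not quite the same setup as the classic Turing-machine busy beaver of Tibor Radó. The classic version still shows concretely how “name a very big number” runs into the halting problem. The Python sketch below is an illustration under that substitution, not Chaitin’s model: it runs the known 2-state, 2-symbol champion, which halts after 6 steps leaving 4 ones on the tape, and it uses a step budget where the argument above says a genuine oracle would be needed.

```python
from collections import defaultdict

# (state, symbol read) -> (symbol to write, head move, next state); "H" means halt.
# The known 2-state, 2-symbol busy beaver champion in Rado's formulation.
BB2 = {
    ("A", 0): (1, +1, "B"),
    ("A", 1): (1, -1, "B"),
    ("B", 0): (1, -1, "A"),
    ("B", 1): (1, +1, "H"),
}

def run(table, max_steps):
    """Run a machine on a blank tape for at most max_steps steps.

    Returns (ones_on_tape, steps_taken) if it halts in time, otherwise None.
    The step budget is only a stand-in: a machine that has not halted yet
    might still halt later, and telling the two apart in general needs an oracle.
    """
    tape = defaultdict(int)
    head, state, steps = 0, "A", 0
    while state != "H":
        if steps >= max_steps:
            return None
        write, move, state = table[(state, tape[head])]
        tape[head] = write
        head += move
        steps += 1
    return sum(tape.values()), steps

print(run(BB2, max_steps=100))  # (4, 6)
```

The `None` branch is exactly where Chaitin’s random walk would “get stuck”: a finite budget can say “not yet”, but never “never”.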

Three possible kinds of evolution: Intelligent Design (the smartest possible kind), Darwinian evolution, and exhaustive search (the slowest kind)

So anyway, let me tell you about three different evolutionary regimes you can have with this model. This is the one I’m really interested in. This is cumulative random evolution. OK? But first I want to tell you about two extremes, two sort of evolutionary regimes, because we want to get a feeling for how well this model does, when you’re picking the mutations at random, in the way I’ve just described. So to get that sort of bracket, the sort of best and worst possibilities, to see how this kind of model behaves, you need to look at two extremes which are not normal cumulative evolution in the way I’ve described. So one extreme is total stupidity. You don’t look at the current organism. For the next organism, you pick an organism at random. In other words, the mutation isn’t told of the current organism. It just gives you a new organism at random without being able to use any information from the current organism. It’s stupid. So what this does, this basically amounts to exhaustive search, in the space of all possible programs with a probability measure that comes from algorithmic information theory. And if you do that, this is the stupidest possible way to evolve… your organism will reach fitness – the Busy Beaver function of N – in time exponential of N. Why? Because basically that’s the amount of time it takes to try every possible N-bit program, and it’ll find the one that is the most fit, and that one has this fitness. You see, so this is, sort of, the worst case. But notice that time 2 to the N is what? You’ve tried 2 to the N mutations. That’s the timing in here. Every time you try, you generate a mutation at random and try it, to see if that gives you a bigger integer, and that counts as one clock.

Now, what is the smartest possible way, the best possible way to get evolution to take place? This is not Darwinian. This is if I pick the sequence of mutations. It has to be a computable sequence of mutations, but I get to pick the best mutations, the best order, you know, do the best possible mutations, one after the other, that will drive the evolution – the mutations you try – … as fast as possible. But it has to be done in a computable manner with the mutations. So that you could sort of call Intelligent Design. I’m the one that’s designing that, right? In my model, …in this space, I get to pick the sequence, I get to indicate the sequence of mutations that you try, that will really drive the fitness up very fast. So that’s sort of the best you can do, and what that does, it reaches Busy Beaver function of N in time N, because basically in time N it got to go to the oracle N times, and each time, you’re getting one bit of creativity, so this is clearly the best you can do, and you can do it in this model. So this is the fastest possible regime.

So this [here he points to exhaustive search] of course is very stupid, and this [here he points to Intelligent Design] requires Divine Inspiration or something. You know, [in Darwinian evolution] you’re not allowed to pick your mutations in the best possible order. And mutations are picked at random. That’s how Darwinian evolution works.

So what happens if we do that, which is sort of cumulative random evolution, the real thing? Well, here’s the result. You’re going to reach Busy Beaver function N in a time that is – you can estimate it to be between order of N squared and order of N cubed. Actually this is an upper bound. I don’t have a lower bound on this. This is a piece of research which I would like to see somebody do – or myself for that matter – but for now it’s just an upper bound.

Only Intelligent Design is guaranteed to evolve living things in the four billion years available. It seems that Darwinian evolution would take too much time

OK, so what does this mean? This means, I will put it this way. I was very pleased initially with this.

Table:

Exhaustive search reaches fitness BB(N) in time 2^N.

Intelligent Design reaches fitness BB(N) in time N. (That’s the fastest possible regime.)

Random evolution reaches fitness BB(N) in time between N^2 and N^3.

This means that picking the mutations at random is almost as good as picking them the best possible way. It’s doing a hell of a lot better than exhaustive search. This is BB(N) at time N and this is between N squared and N cubed. So I was delighted with this result, and I would only be more delighted if I could prove that in fact this [here he points to Darwinian evolution] will be slower than this [here he points to Intelligent Design]. I’d like to separate these three possibilities. But I don’t have that yet.

But I told a friend of mine … about this result. He doesn’t like Darwinian evolution, and he told me, “Well, you can look at this the other way if you want. This is actually much too slow to justify Darwinian evolution on planet Earth.” And if you think about it, he’s right.… If you make an estimate, the human genome is something on the order of a gigabyte of bits. So it’s … let’s say a billion bits – actually 6 x 10^9 bits, I think it is, roughly – … so we’re looking at programs up to about that size [here he points to N^2 on the slide] in bits, and N is about of the order of a billion, 10^9, and the time, he said … that’s a very big number, and you would need this to be linear, for this to have happened on planet Earth, because if you take something of the order of 10^9 and you square it or you cube it, well …forget it. There isn’t enough time in the history of the Earth … Even though it’s fast theoretically, it’s too slow to work. He said, “You really need something more or less linear.” And he has a point...
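The friend’s objection is about orders of magnitude. The Python sketch below simply plugs N = 10^9 bits, the order of magnitude used in the talk, into the bounds from the table, working in log10 so that 2^N does not have to be computed. The results are counts of mutation attempts in Chaitin’s model with every constant hidden by the big-O notation assumed to be 1; turning them into years would need a further assumption about how many attempts per year are available, which the talk does not supply. Note also that N^2 to N^3 is only Chaitin’s upper bound for the random regime.

```python
import math

def log10_steps(n_bits: float) -> dict[str, float]:
    """log10 of the number of mutation attempts in each regime, with every
    big-O constant assumed to be 1 (an assumption, not from the talk)."""
    return {
        "Intelligent Design, N": math.log10(n_bits),
        "random evolution, N^2": 2 * math.log10(n_bits),
        "random evolution, N^3": 3 * math.log10(n_bits),
        "exhaustive search, 2^N": n_bits * math.log10(2),
    }

# N of about 10^9 bits, the order of magnitude used in the talk for the human genome
for regime, exponent in log10_steps(1e9).items():
    print(f"{regime:24s} about 10^{exponent:,.1f} attempts")
```

Using Chaitin’s rougher figure of 6 x 10^9 bits instead shifts the N, N^2 and N^3 rows by less than three orders of magnitude.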

More realistic models of evolution won’t allow you to rigorously prove anything about evolution

But what happens if you try to make things a little more realistic? You know, no oracles, a limited run time, you know, all kinds of things. Well, my general feeling is that it would sort of be a trade-off. The more realistic your model is – this is a very abstract fantasy world. That’s why I’m able to prove these results. So if it’s … more realistic, my general guess will be that it’ll be harder to carry out proofs. And it may be that you can’t really prove what’s going on, with more realistic situations…

Here, Professor Chaitin candidly admits that in the light of our current knowledge, Darwinian evolution is orders of magnitude too slow in generating the mutations required for life to evolve. And as far as I am aware, Professor Chaitin’s challenge of proving mathematically that Darwinian evolution can produce organisms with the required fitness in the time available – billions of years, instead of quintillions – remains unsolved.

Towards the conclusion of his talk, Professor Chaitin pessimistically suggested that the possibility of Darwinian evolution may turn out to be forever unprovable, using realistic assumptions. If he’s right here, then evolution is really a theory like no other. It is one thing to believe in a theory whose possibility has not been demonstrated. It is quite another thing to believe in a theory whose possibility cannot be demonstrated. What makes such an act of assent rational?

Perhaps Professor Rosenhouse will respond by citing a 2010 paper by Herbert S. Wilf and Warren J. Ewens, a mathematician and a biologist at the University of Pennsylvania, in Proceedings of the U.S. National Academy of Sciences (PNAS), titled “There’s plenty of time for evolution”. However, Intelligent Design proponents are one step ahead of him. In a peer-reviewed scientific paper in the journal BIO-Complexity, titled “Time and Information in Evolution”, authors Winston Ewert, Ann Gauger, William Dembski and Robert J. Marks II demonstrate that Wilf and Ewens’ mathematical model does not capture biologically realistic processes of Darwinian evolution at all. A summary of the paper can be found here. Allow me to quote a brief excerpt:

Wilf and Ewens argue in a recent paper that there is plenty of time for evolution to occur. They base this claim on a mathematical model in which beneficial mutations accumulate simultaneously and independently, thus allowing changes that require a large number of mutations to evolve over comparatively short time periods. Because changes evolve independently and in parallel rather than sequentially, their model scales logarithmically rather than exponentially. This approach does not accurately reflect biological evolution, however, for two main reasons. First, within their model are implicit information sources, including the equivalent of a highly informed oracle that prophesies when a mutation is “correct,” thus accelerating the search by the evolutionary process. Natural selection, in contrast, does not have access to information about future benefits of a particular mutation, or where in the global fitness landscape a particular mutation is relative to a particular target. It can only assess mutations based on their current effect on fitness in the local fitness landscape. Thus the presence of this oracle makes their model radically different from a real biological search through fitness space. Wilf and Ewens also make unrealistic biological assumptions that, in effect, simplify the search. They assume no epistasis between beneficial mutations, no linkage between loci, and an unrealistic population size and base mutation rate, thus increasing the pool of beneficial mutations to be searched. They neglect the effects of genetic drift on the probability of fixation and the negative effects of simultaneously accumulating deleterious mutations. Finally, in their model they represent each genetic locus as a single letter. By doing so, they ignore the enormous sequence complexity of actual genetic loci (typically hundreds or thousands of nucleotides long), and vastly oversimplify the search for functional variants. 
In similar fashion, they assume that each evolutionary “advance” requires a change to just one locus, despite the clear evidence that most biological functions are the product of multiple gene products working together. Ignoring these biological realities infuses considerable active information into their model and eases the model’s evolutionary process.
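The excerpt’s central point, that letting an oracle lock in each letter as soon as it is “correct” turns an exponential search into a logarithmic one, can be illustrated with a toy simulation. This is a minimal sketch in the spirit of the model the excerpt criticizes, not Wilf and Ewens’ own code: a target word of length L over an alphabet of K letters, where every still-incorrect letter is re-guessed each round and correct letters are kept. The names `rounds_with_oracle` and `log10_rounds_without_oracle`, and the choice K = 26, are hypothetical. For comparison, the second function gives the expected wait when the whole word must be guessed at once with no per-letter feedback.

```python
import math
import random


def rounds_with_oracle(length: int, alphabet: int, rng: random.Random) -> int:
    """Toy parallel model: each round, every letter that does not yet match
    the target is re-guessed; a letter that hits the target is locked in for
    good (the 'oracle' the excerpt objects to). Returns the number of rounds
    until the whole word matches."""
    remaining = length  # letters still wrong
    rounds = 0
    p_hit = 1.0 / alphabet
    while remaining > 0:
        rounds += 1
        # Each wrong letter independently hits the target with probability 1/alphabet.
        hits = sum(1 for _ in range(remaining) if rng.random() < p_hit)
        remaining -= hits
    return rounds


def log10_rounds_without_oracle(length: int, alphabet: int) -> float:
    """log10 of the expected rounds when the whole word must be guessed at
    once: success probability alphabet**-length per round, so the expected
    wait is alphabet**length rounds (returned as a log to avoid overflow)."""
    return length * math.log10(alphabet)


if __name__ == "__main__":
    rng = random.Random(42)   # fixed seed so runs are repeatable
    alphabet = 26             # hypothetical alphabet size K
    for length in (10, 100, 1000):
        runs = [rounds_with_oracle(length, alphabet, rng) for _ in range(100)]
        mean = sum(runs) / len(runs)
        print(f"L = {length:4d}: with oracle ~{mean:.0f} rounds "
              f"(K ln L = {alphabet * math.log(length):.0f}); "
              f"without oracle ~10^{log10_rounds_without_oracle(length, alphabet):.0f} rounds")
```

With the locking step in place, the number of rounds is the waiting time of the slowest of L independent letters, which grows roughly in proportion to K ln L; without it, the expected wait grows as K^L. The gap between the two columns is the effect of the per-letter feedback that the excerpt describes as active information.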

In other words, evolutionists are back at square one. To be sure, there are many converging lines of circumstantial evidence for common descent. But common descent is not evolution. Evolution is an unguided natural process, working by mechanisms which require no foresight whatsoever. And evolutionists are still as far as ever from demonstrating even the plausibility (let alone the truth) of their bold claim that these unguided mechanisms could have generated the vast diversity of organisms we see around us, within the time available.

Professor Rosenhouse may reply that the foregoing arguments are merely arguments from ignorance. No, they’re not. They’re arguments based on the best scientific knowledge available to date. Of course, future discoveries may overturn them. But in the meantime, it is rational to follow the evidence where it leads us: away from unguided natural processes, and towards Intelligent Design.

What do readers think?