Acclaimed author John Jeremiah Sullivan has recently written an article for Lapham’s Quarterly (Spring 2013), arguing that human beings stand in a psychological continuum with other animals. Sullivan’s article, which is appropriately titled, One of us, reverently concludes that the human mind is but one of a multitude of minds on the animal spectrum: “The animal kingdom is symphonic with mental activity, and of its millions of wavelengths, we’re born able to understand the minutest sliver… This is what the study of animal consciousness can teach us, finally – that we possess an animal consciousness.” The publication of Charles Darwin’s Origin of Species is depicted in the article as the watershed event in history that opened our eyes to this great truth. Sullivan’s essay, which is of outstanding literary merit, has garnered high praise from its reviewers: it is elegantly written, informatively entertaining, passionately sincere, and commendably modest in its assessment of what we know about animal minds. But when an essayist aims to inform his readers – as Sullivan clearly does – then he has an obligation to get his facts straight. Sullivan’s essay fails on this score, for it is littered with errors from start to finish: its potted history of man’s relationship with the beasts belongs in the category of fiction. But it is a powerfully persuasive piece of fiction, whose message is bound to appeal to the “chattering classes,” who will see it as a striking confirmation of Darwin’s view that the difference between human and nonhuman minds is “one of degree and not of kind.” It is for this reason that I have decided to pen a rebuttal.

The errors Sullivan makes in his article are not trifling ones; they are inexcusable blunders. We are told, for instance, that it was the ancient Greeks who first distinguished man from the other animals, putting him in a class of his own. We are told that the early Christians taught that animals have no souls. We are told that it was Charles Darwin who took the study of animal consciousness out of the salon, and into the laboratory. And we are told that ever since then, scientists have been steadily accumulating evidence in support of Darwin’s contention that animals possess reason and consciousness. All of these assertions by Sullivan are demonstrably wrong. As former U.S. Secretary of Defense James Schlesinger once said, “Each of us is entitled to his own opinion, but not to his own facts.”

In the interests of full disclosure, I should say a little about myself. I obtained my Ph.D. in philosophy in 2007, after completing a thesis on the topic of animal minds – in particular, the simplest kind of mind that an animal could possibly be said to possess. Following philosopher Frank Ramsey’s metaphor of belief as a map by which we steer, I argued that insofar as an animal has an internal map of its environment, which represents its current state, its goal and a suitable means to reach its goal, and insofar as it is capable of updating its internal map and correcting its motor behavior in the light of new information, the animal could legitimately be said to have beliefs, desires, and what I called a “minimal mind.” At the same time, I emphasized that these beliefs and desires need not be conscious ones, in the phenomenal sense: an animal might be able to navigate around its world and attain its goals, without having any feeling of “what it is like” when satisfying its desires. After surveying the scientific literature, I concluded that insects, spiders, octopuses, squid and fish could be said to possess minimal minds. However, after reading hundreds of scientific articles on animal consciousness, as well as a great volume of philosophical literature on the subject, I concluded that the domain of phenomenally conscious creatures in Nature was probably far smaller, being confined to mammals, birds and (just possibly) octopuses – in other words, a mere 0.2% of the 7.7 million species of animals estimated to inhabit this planet, making sentience the exception rather than the norm in the animal kingdom. I hope, then, that I shall not be accused of immodesty if I claim to have some familiarity with the scientific and philosophical literature relating to animal minds.

Although I’ll have a lot to say about Sullivan’s errors in this post, I’d like to acknowledge at the outset that he is a knowledgeable man, who has read very widely. My chief complaint is that his knowledge is broad rather than deep. Sometimes, it’s necessary to dig all the way down, in order to arrive at the truth.

The errors which I have identified in Sullivan’s article fall into three main categories: scientific, philosophical and historical. Since Sullivan’s composition is written as a survey of human beings’ changing attitudes towards animals over the course of time, I shall focus my criticisms principally on the third category.

Part One: Sullivan’s scientific errors

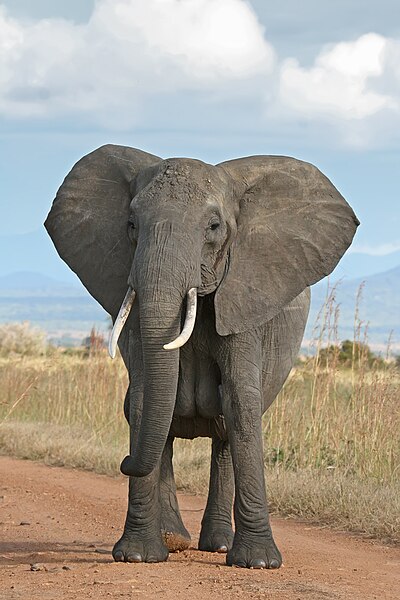

Sullivan’s central scientific claim is that every species of conscious animal – from the humble ant to the magnificent elephant – has its own kind of mind, and that the human mind, while unique, is no more unique among animal minds than the mind of, say, a bat or a cat. In other words, all animals are special, and humans are no more special than any other animal. In the opening paragraph of his essay, Sullivan briefly reviews the scientific evidence that the intelligence of other animals has been badly under-estimated:

These are stimulating times for anyone interested in questions of animal consciousness. On what seems like a monthly basis, scientific teams announce the results of new experiments, adding to a preponderance of evidence that we’ve been underestimating animal minds, even those of us who have rated them fairly highly. New animal behaviors and capacities are observed in the wild, often involving tool use — or at least object manipulation — the very kinds of activity that led the distinguished zoologist Donald R. Griffin to found the field of cognitive ethology (animal thinking) in 1978: octopuses piling stones in front of their hideyholes, to name one recent example; or dolphins fitting marine sponges to their beaks in order to dig for food on the seabed; or wasps using small stones to smooth the sand around their egg chambers, concealing them from predators. At the same time neurobiologists have been finding that the physical structures in our own brains most commonly held responsible for consciousness are not as rare in the animal kingdom as had been assumed. Indeed they are common. All of this work and discovery appeared to reach a kind of crescendo last summer, when an international group of prominent neuroscientists meeting at the University of Cambridge issued “The Cambridge Declaration on Consciousness in Non-Human Animals,” a document stating that “humans are not unique in possessing the neurological substrates that generate consciousness.” It goes further to conclude that numerous documented animal behaviors must be considered “consistent with experienced feeling states.”

Sullivan then regales his readers with a childhood story of his first family dog, a sagacious and sympathetic canine who was, according to many who knew her, “Smarter than some people I know!” Near the end of his scintillating essay, Sullivan confronts the question posed by the philosopher Thomas Nagel in 1974: “What Is It Like To Be a Bat?” Nagel answered this question by appealing to the notion of subjectivity: we can speak of animal consciousness when “there is something that it is like to be that organism – something it is like for the organism.” Sullivan invokes Nagel’s notion in the concluding paragraph of his essay, where he argues that each sentient animal has its own unique kind of subjectivity, and that each “kind of mind” occupies its own special space on a continuum of consciousness:

In Michel de Montaigne’s excellent passage on animal minds in the “Apology for Raymond Sebond”, in which he writes about playing with his cat and wonders who is playing with whom, there is a funny and deceptively profound final sentence: “We divert each other with monkey tricks,” he writes. Meaning he and the cat. Both human being and cat are compared with a third animal. They are monkeys to each other, strange animals to each other. (The man is all but literally a monkey to the cat.) All three creatures involved in Montaigne’s metaphor are revealed as points on a continuum, and none of them understands the others very well. This is what the study of animal consciousness can teach us, finally — that we possess an animal consciousness.

Unfortunately, Sullivan’s article suffers from several scientific failings. First, Sullivan appears to be unaware of the existence of a large body of research supporting the hypothesis of a radical distinction between the human mind and other animal minds. The view that there is something special about the human mind is nowadays referred to as “human exceptionalism” – a term recently coined by lawyer Wesley Smith. It remains a highly controversial but tenable position among scientists who study animal cognition. As such, it deserves a proper scientific hearing.

Second, Sullivan fails to address the vast literature on the neurological requirements for consciousness, which contradicts his thesis that there is a psychological continuum of animal minds, and that each species of animal occupies its own little point on this continuum of animal consciousness. Instead of a continuum, we see discontinuities. Neuroscientists customarily distinguish between two distinct kinds of consciousness: primary consciousness (or the moment-to-moment awareness of sensory experiences and internal feelings such as emotions) and higher-order consciousness (also called “self-awareness”), which involves the capability to be conscious of being conscious, and which presupposes an awareness of one’s self as an entity that exists separately from other entities. What’s more, there’s a clear-cut distinction at the neurological level between primary consciousness and unconscious brain states: conscious brain states can be readily identified by their widespread, relatively fast, low-amplitude interactions in the thalamocortical core of the brain, which are driven by the individual’s current tasks and conditions. Finally, the neurological requirements for even the most basic kind of consciousness (primary consciousness) are fairly stringent, and while there is good evidence that mammals (and also birds, and just possibly octopuses) meet these criteria, all other animals fall a long way short. What that means is that at least 99.8% of the various species of animals in the world lack even the most rudimentary kind of subjective awareness of the world around them. We can agree with Nagel that there is something that it is like to be a bat; however, for the vast majority of animals, there is nothing that it is “like” to be that kind of animal.

The neurological requirements for self-awareness (or higher-order consciousness) are even more stringent: at the very most, no more than half a dozen families of creatures (great apes, elephants and corvids, and a few families of dolphins), comprising at most a few hundred species, are likely to satisfy these requirements, and there are many neuroscientists who would argue that only human beings satisfy them. Putting it another way: at least 99.99% of all animal species lack self-awareness, and we may well be the only species that possesses it.

Third, Sullivan appears to be blissfully unaware that the complex behavioral feats he cites in support of his claim that animals possess a sophisticated level of mental awareness can all be explained in a more parsimonious fashion, making the imputation of higher mental states to these creatures redundant. That includes sophisticated instances of tool use observed in animals.

Finally, Sullivan’s statements comparing human language with the alleged linguistic feats of other animals are extremely naive, and fly in the face of a large body of scientific evidence suggesting that human beings are the only animals capable of having a conversation with one another, in any meaningful sense of that term. (Communication is of course found in all living things, including organisms such as bacteria, which lack a mind of any sort.)

Chapter 1 – Scientists who believe that the human mind is like no other

From a scientific viewpoint, the greatest flaw in Sullivan’s essay lies not in what it says, but in what it doesn’t say: Sullivan completely ignores a mass of scientific research pointing to a marked discontinuity between human and non-human animal minds. I suspect that’s because he is not even aware of it: the fanfare and publicity accompanying the release of the Cambridge Declaration on Consciousness last year appear to have duped him into believing that there is now a solid scientific consensus that animal minds are fundamentally no different from our own. I have already critiqued the logical and scientific errors in the Cambridge Declaration, which was signed by no more than a dozen of the world’s 75,000-odd neuroscientists, in a previous post, so I’ll say no more about it here, except to point out two glaring logical fallacies in the text of the Declaration. First, the authors of the Cambridge Declaration argue that because brain systems other than the cortex are also involved in supporting consciousness, therefore animal consciousness is possible, even in the total absence of a cortex: an obvious non sequitur. Second, the Declaration’s authors make a logical leap from the existence of strong evidence for emotions (or affective states) in certain animals to the unwarranted conclusion that these animals must possess some sort of consciousness. Emotions, however, are not necessarily conscious feelings: as Oxford University professor Marian Stamp Dawkins points out in her recent book, Why Animals Matter: Animal consciousness, animal welfare, and human well-being (OUP, 2012), “Animals and plants can ‘want’ very effectively with never a hint of consciousness, as we can see with a tree wanting to grow in a particular direction” (p. 171). Moreover, if, as many scientists contend, at least some of our human emotions are unconscious, then we have to consider the possibility that for certain animals, the entire gamut of their emotions might turn out to be unconscious.

If Sullivan wishes to open his mind to an alternative scientific view of animal minds, then I would invite him to peruse the following articles, which may be of interest to him:

(1) Darwin’s mistake: Explaining the discontinuity between human and nonhuman minds by Derek C. Penn, Keith J. Holyoak and Daniel J. Povinelli, followed by an open peer commentary, and the authors’ response (Behavioral and Brain Sciences, 2008, 31, 109–178);

(2) On the lack of evidence that non-human animals possess anything remotely resembling a ‘theory of mind’ by Derek C. Penn and Daniel J. Povinelli (Philosophical Transactions of the Royal Society B, 29 April 2007, vol. 362 no. 1480 731-744);

(3) On Becoming Approximately Rational: The Relational Reinterpretation hypothesis by Derek C. Penn and Daniel J. Povinelli (in S. Watanabe, A. P. Blaisdell, L. Huber and A. P. Young (eds.), Rational animals, irrational humans, Tokyo: Keio University Press, 2009, pp. 23-44);

(4) Meta-cognition in Animals: A Skeptical Look by Peter Carruthers (Mind and Language, Vol. 23 No. 1, February 2008, pp. 58–89);

(5) An Evolutionary Framework for the Acquisition of Symbolic Cognition by Homo sapiens by Ian Tattersall (Comparative Cognition and Behavior Reviews, 2008, Vol. 3, doi: 10.3819/ccbr.2008.30006, pp. 99-114);

(6) Mental time travel and the shaping of the human mind by Thomas Suddendorf, Donna Rose Addis and Michael C. Corballis (Philosophical Transactions of the Royal Society B, 12 May 2009, vol. 364 no. 1521, doi: 10.1098/rstb.2008.0301, pp. 1317-1324).

(7) Behavioural evidence for mental time travel in nonhuman animals by Thomas Suddendorf and Michael C. Corballis (Behavioural Brain Research, 31 December 2010; 215(2):292-8. doi: 10.1016/j.bbr.2009.11.044);

(8) Mental time travel: continuities and discontinuities by Thomas Suddendorf, in Trends in Cognitive Sciences, 2013, 17, 151–152. See also The wandering rat: response to Suddendorf by Michael C. Corballis.

(9) The comparative study of mental time travel by W.A. Roberts and M.C. Feeney, in Trends in Cognitive Sciences, 2009 Jun;13(6):271-7. doi: 10.1016/j.tics.2009.03.003. See also

(10) Evidence for future cognition in animals by William A. Roberts, in Learning and Motivation 43 (2012): 169-180. doi: 10.1016/j.lmot.2012.05.005.

(11) “Tool use, planning, and future thinking in children and animals” (in McCormack, T., Hoerl, C. and Butterfill, S. (eds.), Tool use and causal cognition. Consciousness and self-consciousness, Oxford University Press, 2011, pp. 129-147, ISBN 9780199571154), by Teresa McCormack and Christopher Hoerl.

I would also invite Sullivan to have a look at a new book by psychologist Thomas Suddendorf, titled The Gap: The Science of What Separates Us from Other Animals (Basic Books, 2013). Suddendorf’s book, which is due for release on November 12, has already garnered high praise from scientific luminaries such as Jane Goodall, Endel Tulving and Simon Baron-Cohen. To give readers a flavor of what the book promises (I haven’t read it yet), I shall quote a brief excerpt from the review in Scientific American Mind, by Nina Bai:

In The Gap, Suddendorf examines the apparent chasm that separates humans from other animals. He covers six domains — language, mental time travel (thinking about the past and future), theory of mind (thinking about thinking), intelligence, culture and morality — in which multitudes of clever studies have probed the minds of animals and, for comparison, young children…

Although he presents both “romantic” and “killjoy” interpretations of animal ability, his sure-handed, fascinating book aims neither to exaggerate the wisdom of animals nor to promote the exceptionalism of human beings.

Instead Suddendorf distills the gap into two overarching capacities: the ability to imagine different scenarios beyond what our senses perceive and a strong drive to link our minds together, by looking to one another for information or understanding. These two capacities transform common animal traits into distinctly human ones: communication into language, memory into planning, and empathy into morality.

Personally, I am rather skeptical that the differences between humans and other animals can be distilled into the two capacities nominated by Suddendorf. Neither our ability to envisage scenarios nor our desire to link minds seems able to account for the simple fact that human thoughts – such as my thought that it will rain tomorrow – are inherently meaningful, in their own right: unlike the signs and words that we use, they do not derive their meaning from any social convention. Nor can their meaning be cashed out in biological terms: while biological processes may have a function, they are incapable of having a propositional meaning. Rather, the meaningfulness of human thought should, I believe, be regarded as a “brute fact” about the world: it is surely just as “basic” as the two capacities which are claimed by Suddendorf to account for our cognitive differences from other animals. Despite this reservation of mine, however, I have the greatest respect for Suddendorf’s scientific thoroughness, as well as his fair-minded approach, and I would strongly encourage readers to go and buy his book.

What’s unique about human beings?

It is unwise to be too dogmatic about what animals can and can’t do. Systematic research in the field of animal cognition has been going on for a little over a century, which makes it a relatively new field, in which scientists still have a lot to discover. Consequently, any scientific claim that the human mind is uniquely different from that of other animals must be regarded as provisional: future research may force us to change our minds. Nevertheless, a good case can be made for the view that mentally speaking, the human being is an animal like no other.

At the beginning of this chapter, I listed several scientific articles, whose authors argue for the existence of cognitive abilities which are unique to human beings. I’d now like to summarize the authors’ key findings:

(a) Self-awareness appears to be unique to human beings

Vincent van Gogh, self-portrait after having cut off his left ear, 1889. Oil on canvas. Collection of Mr. and Mrs. Leigh B. Block, Chicago. Some scientists contend that self-awareness is a trait unique to human beings. Image courtesy of the Yorck Project and Wikipedia.

Sullivan himself concedes in his article, One of us, that self-awareness is a uniquely human trait, even as he disparages it by quoting Georg Christoph Lichtenberg’s alleged remark that only a man can draw a self-portrait, but only a man wants to. But the ethical relevance of self-awareness is obvious: after all, why should I care about an animal that’s naturally incapable of even caring about itself? Concern about reducing animal suffering is one thing; concern about the animals who are suffering is quite another. Only concern of the latter sort requires us to regard animals as other “selves,” towards whom we have certain moral duties. As Professor James Rose et al. put it in their 2012 article, Can fish really feel pain? (Fish and Fisheries, doi: 10.1111/faf.12010):

…One of the most critical determinants of suffering from pain is the personal awareness and ownership of the pain (Price 1999). This is why dissociation techniques, in which a person can use mental imagery to separate themselves from pain, are effective for reducing suffering (Price 1999). In contrast, without awareness of self, the pain is no one’s problem. (2012, p. 27)

Professor David B. Edelman is one of the signatories of the 2012 Cambridge Declaration on Consciousness. As such, he can hardly be considered an advocate of human exceptionalism. Nevertheless, even he admits that “higher-order consciousness” (which involves having a sense of being a “self” with a past and a future) may well turn out to be a trait found only in human beings. Edelman makes this surprising admission in an article he co-authored with Bernard J. Baars and Anil K. Seth, titled, Identifying hallmarks of consciousness in non-mammalian species (Consciousness and Cognition 14 (2005) 169–187):

Higher order consciousness, which emerged as a concomitant of language, occurs in modern Homo sapiens and may or may not be unique to our species. (2005, p. 174)

Philosophy professor Peter Carruthers argues that there is no good evidence that non-human animals possess self-awareness, in his essay, Meta-cognition in Animals: A Skeptical Look (Mind and Language, Vol. 23 No. 1, February 2008, pp. 58–89). In this essay, meta-cognition is defined narrowly as knowledge of one’s own mental states. Carruthers surveys the recent literature on alleged meta-cognitive processes in non-human animals, including their supposed ability to monitor their own states of certainty and uncertainty. Carruthers convincingly argues that in each case, the scientific data admit of a simpler, purely first-order, explanation, “appealing only to states and processes that are world-directed rather than self-directed.” On methodological grounds, then, “we should refuse to attribute meta-cognitive processes to animals.” Carruthers adds that in any case, we should expect meta-cognition to be rare in the animal kingdom:

…[I]n the decades that have elapsed since Premack and Woodruff (1978) first raised the question whether chimpanzees have a ‘theory of mind’, a general (but admittedly not universal) consensus has emerged that meta-cognitive processes concerning the thoughts, goals, and likely behavior of others is cognitively extremely demanding (Wellman, 1990; Baron-Cohen, 1995; Gopnik and Melzoff, 1997; Nichols and Stich, 2003), and some maintain that it may even be confined to human beings (Povinelli, 2000). For what it requires is a theory (either explicitly formulated, or implicit in the rules and inferential procedures of a domain-specific mental faculty) of the nature, genesis, and characteristic modes of causal interaction of the various different kinds of mental state. There is no reason at all to think that this theory should be easy to come by, evolutionarily speaking. And then on the assumption that the same or a similar theory is implicated in meta-cognition about one’s own mental states, we surely shouldn’t expect meta-cognitive processes to be very widely distributed in the animal kingdom.

Nor should we expect to find meta-cognition in animals that are incapable of mindreading.

In a nutshell: the view that self-awareness is a uniquely human trait is a scientifically respectable one.

(b) Autobiographical memory appears to be a uniquely human trait

Madeleines de Commercy. In In Search of Lost Time (also known as Remembrance of Things Past), author Marcel Proust describes, in the “episode of the madeleine,” an incident where the taste of a madeleine cake dipped in tea calls forth involuntary memories from the narrator’s past. Image courtesy of Bernard Leprêtre and Wikipedia.

In his book, The Case for Animal Rights (University of California Press, 1983), animal liberationist Tom Regan argued that animals who are “subjects-of-a-life” may properly be said to have rights. In chapter 1 of his book, Regan defined the term “subject-of-a-life” as follows: “individuals are subjects-of-a-life if they have beliefs and desires; perception, memory, and a sense of the future, including their own future; an emotional life together with feelings of pleasure and pain; preference- and welfare-interests; the ability to initiate action in pursuit of their desires and goals; a psychophysical identity over time; and an individual welfare in the sense that their experiential life fares well or ill for them, logically independently of their utility for others and logically independently of their being the object of anyone else’s interests.” Only subjects-of-a-life have rights, according to Regan. However, there is no good evidence that any non-human animals meet the entire set of criteria for being a subject-of-a-life. In particular, it remains to be shown that animals have a “psychophysical identity over time” or a “sense of the future.” I conclude that Regan has yet to demonstrate that any non-human animals have rights.

In their article, Mental time travel and the shaping of the human mind (Philosophical Transactions of the Royal Society B, 12 May 2009, vol. 364 no. 1521, doi: 10.1098/rstb.2008.0301, pp. 1317-1324), authors Thomas Suddendorf, Donna Rose Addis and Michael C. Corballis argue that autobiographical memory is a uniquely human trait:

Episodic memory, enabling conscious recollection of past episodes, can be distinguished from semantic memory, which stores enduring facts about the world. Episodic memory shares a core neural network with the simulation of future episodes, enabling mental time travel into both the past and the future. The notion that there might be something distinctly human about mental time travel has provoked ingenious attempts to demonstrate episodic memory or future simulation in non-human animals, but we argue that they have not yet established a capacity comparable to the human faculty. The evolution of the capacity to simulate possible future events, based on episodic memory, enhanced fitness by enabling action in preparation of different possible scenarios that increased present or future survival and reproduction chances. Human language may have evolved in the first instance for the sharing of past and planned future events, and, indeed, fictional ones, further enhancing fitness in social settings…

The claim that only humans are capable of episodic memory and episodic foresight has posed a challenge to animal researchers. One of the difficulties has been to demonstrate memory that is truly episodic, and not merely semantic or procedural. For example, does the dog that returns to where a bone is buried remember actually burying it, or does it simply know where it is buried? One suggestion is that episodic memory in non-human animals might be defined in terms of what happened, where it happened and when it happened — the so-called www criteria.… Clayton and colleagues (Clayton et al. 2003) concluded that scrub jays can not only remember the what, where and when of past events, but also anticipate the future by taking steps to avoid future theft…

It remains possible, though, that these ingenious studies do not prove that the animals actually remember or anticipate episodes. For example, associative memory might be sufficient to link an object with a location, and a time tag or ‘use-by’ date might then be attached to the representation of the object to update information about it (Suddendorf & Corballis 2007). Moreover, ‘how long ago’ can be empirically distinguished from ‘when’, so the ability of scrub jays to recover food depending on how long ago it was cached need not actually imply that they remember when it was cached. As evidence for this, a recent study suggests that rats can learn to retrieve food in a radial maze on the basis of how long ago it was stored, but not on when it was stored, suggesting that ‘episodic-like memory in rats is qualitatively different from episodic memory in humans’ (Roberts et al. 2008).

More generally, the www criteria may not be sufficient to demonstrate true episodic memory. Most of us know where we were born, when we were born and, indeed, what was born, but this is semantic memory, not episodic memory (Suddendorf & Busby 2003). Conversely, one can imagine past and future events and be factually wrong about what, where and when details (in fact, we are often mistaken). This double dissociation, then, strongly suggests that we should not equate mental time travel with www memory.

The concerns raised by Suddendorf, Addis and Corballis are legitimate ones. I should mention that although Corballis has recently changed his views on the possibility of mental time travel in animals, Suddendorf holds the same view as when he co-wrote the above article. As he puts it in a recent letter to Trends in Cognitive Sciences (Volume 17, Issue 4, 151-152, 07 March 2013) titled, Mental time travel: continuities and discontinuities:

I do not think it is useful to resurrect Darwin’s blanket statement that differences in mind between humans and animals certainly are one of degree and not of kind… I see no reason why mental time travel should not have evolved gradually through Darwinian descent with modification. However, continuity over evolutionary time (e.g., from Australopithecines to Homo) should not be confused with a need to postulate an absence of gaps in the distribution of traits among extant species [9]. As transitional forms go extinct, vast qualitative differences can certainly emerge. On current evidence, it still appears that human mental time travel is profoundly special. There are few signs that animals act with the flexible foresight that is so characteristic of humans.

Perhaps Sullivan will want to argue that Suddendorf is out of date on this point, in light of the fact that a recent report by Virginia Morell in Science magazine (18 July 2013) attributed the capacity for mental time travel to orangutans (Apes Capable of ‘Mental Time Travel’):

A single cue — the taste of a madeleine, a small cake, dipped in lime tea — was all Marcel Proust needed to be transported down memory lane. He had what scientists term an autobiographical memory of the events, a type of memory that many researchers consider unique to humans. Now, a new study argues that at least two species of great apes, chimpanzees and orangutans, have a similar ability; in zoo experiments, the animals drew on 3-year-old memories to solve a problem. Their findings are the first report of such a long-lasting memory in nonhuman animals. The work supports the idea that autobiographical memory may have evolved as a problem-solving aid, but researchers caution that the type of memory system the apes used remains an open question…

A summary of the study may be found here (Memory for Distant Past Events in Chimpanzees and Orangutans, by Gema Martin-Ordas, Dorthe Berntsen and Josep Call, in Current Biology, Volume 23, Issue 15, 1438-1441, 18 July 2013). The study’s lead author believes that the findings constitute evidence for autobiographical memory in chimpanzees and orangutans. But as Virginia Morell points out in her report, other scientists are far from convinced:

Together, the experiments reveal that at least two species of great apes “can remember specific events and retrieve this memory to solve a particular problem,” something never shown before in great apes, says Jonathon Crystal, a comparative psychologist at Indiana University, Bloomington…

But Crystal and others are not convinced that this experiment demonstrates autobiographical memory. “Is there evidence here for ‘cued recall,’ as the authors argue? No,” says Martin A. Conway, a memory researcher at City University London. Conway does think the apes have “moment-by-moment episodic memory, but they are not saying to themselves, ‘I can’t believe it. I’m back in this stupid lab with this stupid test.’ Believe me, that’s not what they’re doing.”

It appears to me that the authors of the study cited have made an unwarranted logical inference. The fact that chimpanzees and orangutans are capable of retrieving memories of events from their past does not imply that they have a concept of the past, let alone of “three years in the past.” In the absence of such a concept, the use of the term “autobiographical memory” to describe the animals’ capabilities is unjustified: the authors should have chosen a less loaded term.

I discuss other similar experiments with apes (and chickadees) in my recent post, Do apes plan for the future? Why I’m skeptical (October 23, 2013). Among them is a recent report in Science Daily (September 11, 2013), titled, Orangutans Plan Their Future Route and Communicate It to Others, which claims that orangutans can make plans for the following day. I rejected this claim as conceptually flawed, on the grounds that the ability of orangutans to broadcast calls to other individuals indicating their intended travel directions over a span of one day need not imply that the animals are envisaging tomorrow’s travel route as an event occurring in the future, or even that they have a concept of “the future” as such. All that they need to possess when making their calls is the intention to follow that route, and nothing more.

Finally, Teresa McCormack and Christopher Hoerl argue in their recent essay, “Tool use, planning, and future thinking in children and animals” (in McCormack, T., Hoerl, C. and Butterfill, S. (eds.), Tool use and causal cognition. Consciousness and self-consciousness, Oxford University Press, 2011, pp. 129-147, ISBN 9780199571154), that mental time-travel presupposes a “narrative grasp of events,” and that the ability to construct a life-narrative appears to be a distinctively human trait. The authors carefully evaluate instances of alleged mental time-travel in primates, before making a proposal of their own as to how this ability could be tested experimentally in the future.

I conclude that at the present time, one can plausibly maintain on scientific grounds that human beings are the only animals capable of mentally traveling back to the past and forward to the future. In any case, the tool use studies performed on laboratory animals are incapable of demonstrating the occurrence of narrative thinking on their part.

(c) Only human beings appear to possess awareness of their own mental states and other individuals’ mental states


A baby exploring his reflection. Image courtesy of roseoftimothywoods and Wikipedia.

Sullivan appears to be rather confused regarding animals’ capacity for meta-cognition and their possession of a “theory of mind.” At one point, he describes self-awareness as “the attribute of consciousness we happen to possess over all creatures,” but at another point, he insists that animals “talk to each other” in “complex ways,” even communicating propositions such as “We should move on,” which would surely imply that they possess awareness of their own and other animals’ minds.

Sullivan briefly refers to the mirror test in his article, when he writes: “Birds and chimps and dolphins have been made to look at themselves in mirrors.” The much-ballyhooed Cambridge Declaration on Consciousness elaborates: “Magpies in particular have been shown to exhibit striking similarities to humans, great apes, dolphins, and elephants in studies of mirror self-recognition.” I should add that behavioral scientist Gordon Gallup Jr., who first developed the mirror test in 1970 as a way of assessing primates’ self-awareness, is doubtful that dolphins are genuinely capable of passing the test. In 2011, he stated: “The evidence for mirror self recognition in dolphins is tenuous. It’s not substantive.” What’s more, the number of elephants said to have passed the mirror test is very small. Readers can view the evidence for magpies passing the test here.

However, even Sullivan doesn’t think too much of this evidence: he refers to it as “a measure, if somewhat arbitrary, of self-awareness.” Philosopher Michael P. T. Leahy identifies the central problem with mirror tests in his provocative book, Against Liberation (Routledge, revised paperback edition, 1994) when he observes that all they show is that “the creatures recognise their own bodies” (p. 146). However, “for self-consciousness to get a foothold it would be necessary to show that they were aware of recognising themselves; which is awareness of a different order.”

I should mention that most animals do not recognize themselves in mirrors, even after repeated exposure to mirrors. Dogs, for instance, treat the image they see in a mirror as if it were another individual, as can be seen from this video. Cats also fail to recognize themselves in mirrors. This is strong prima facie evidence that they lack self-awareness. Psychology professor John Pilley has claimed that Chaser, a border collie capable of recognizing about 1,000 words, has the same level of cognition as a human toddler. But as early as 18 months, half of all children recognize the reflection in the mirror as their own (Lewis, M. and Brooks-Gunn, J., Social cognition and the acquisition of self, New York: Plenum Press, 1979, p. 296). Chaser doesn’t, and she never will.

Researchers Penn and Povinelli have concluded that the ability to see the world from another individual’s perspective appears to be confined to human beings, and there is no good evidence that chimpanzees or any other non-human animals possess it:

The available evidence suggests that chimpanzees, corvids and all other non-human animals only form representations and reason about observable features, relations and states of affairs from their own cognitive perspective. We know of no evidence that non-human animals are capable of representing or reasoning about unobservable features, relations, causes or states of affairs or of construing information from the cognitive perspective of another agent. Thus, positing an fToM [folk theory of mind – VJT], even in the case of corvids, is simply unwarranted by the available evidence… (Penn and Povinelli, 2007, PDF, p. 7)

In their 2007 paper, Penn and Povinelli proposed two carefully controlled experiments which could provide evidence of a “theory of mind” in non-human animals. Even adult chimpanzees who were used to interacting with human beings failed the first experiment proposed by the authors, whereas 18-month-old human infants passed the same test with flying colors.

Summing up the current state of animal cognition research, Penn and Povinelli conclude in their 2009 paper, On Becoming Approximately Rational: The Relational Reinterpretation hypothesis (in S. Watanabe, A. P. Blaisdell, L. Huber and A. P. Young (eds.), Rational animals, irrational humans, Tokyo: Keio University Press, 2009, pp. 23-44):

…[A]lthough there is abundant evidence that apes and monkeys act as if they are taking the visual perspective of others into account (e.g., Flombaum & Santos, 2005; Hare et al., 2006), there is no evidence that they are actually representing or reasoning about others’ subjective visual experience as distinct from the observable behavioral cues causally related to others’ actions in the world (Penn & Povinelli, in press). Nor is there any evidence that nonhuman primates understand that others have a subjective visual experience analogous to their own (Povinelli et al., 2000).

All of the evidence collected to date suggests that chimpanzees only represent others’ goals and intentions in terms of external states of the environment and observable behavioral cues but do not understand that others have internal mental representations of goals and unobservable intentions which causally guide others’ behavior (cf. Tomasello et al., 2005). (Penn and Povinelli, 2009, PDF, pp. 17-18)

Regarding the alleged ability of some birds belonging to the crow family (corvids) to attribute mental states to other individuals, Penn and Povinelli argue that a more parsimonious interpretation of the evidence makes more sense:

In all of the experiments with corvids cited above, it suffices for the birds to associate specific competitors with specific cache sites and to reason in terms of the information they have observed from their own cognitive perspective: e.g. ‘Re-cache food if a competitor has oriented towards it in the past’, ‘Attempt to pilfer food if the competitor who cached it is not present’, ‘Try to re-cache food in a site different from the one where it was cached when the competitor was present’, etc. The additional claim that the birds adopt these strategies because they understand that ‘The competitor knows where the food is located’ does no additional explanatory or cognitive work. (Penn and Povinelli, 2007, p. 6)

Penn, Holyoak and Povinelli conclude that while corvids are highly remarkable birds, there is no evidence that they are aware of each other’s mental states:

The best evidence for a ToM [theory of mind] system in a non-primate comes from the work of Emery, Clayton and colleagues (Emery & Clayton 2001; 2004b; in press)…. Results such as these leave no doubt that corvids are remarkably intelligent creatures, able to keep track of the social context of specific past events, as well as the what, when, and where information associated with those events (Clayton et al. 2001). But nothing in the results reported to date suggests that corvids actually reason about their conspecifics’ mental states – or even understand that their conspecifics have mental states at all – as distinct from their conspecifics’ past and occurrent behaviors and the subjects’ own knowledge of past and current states of affairs (Penn & Povinelli 2007b; Povinelli et al. 2000; Povinelli & Vonk 2003; 2004). (Penn, Holyoak and Povinelli, 2008, p. 120)

(d) Only modern human beings are capable of creating symbols


The peace symbol was originally designed in 1958 by British artist Gerald Holtom, for the British nuclear disarmament movement. Human beings are the only animals that create symbols. Image courtesy of Wikipedia and Schuminweb.

We human beings have an odd way of perceiving the world around us. Other organisms seem to react more or less directly to the stimuli they receive from the environment, albeit with wildly varying degrees of subtlety and complexity. In witness of our place within the living world we react directly too, as when we place our hand on a hot plate, or duck to avoid a hurled object. But at a higher level we are constantly recreating the world in our heads. Once that object has whizzed by, we start wondering why. We do this by decomposing the continuum of our surroundings into a mass of individual mental symbols, which we then combine and recombine to produce the intellectual constructs to which we react. And given the same facts, it’s quite likely that each of us will produce a slightly – or even vastly – different construct. Of course, nowadays it’s almost impossible to pick up a behavioral periodical without learning that one of the great apes or some other denizen of Nature has just been observed to exhibit yet another behavior that we had once believed unique to ourselves; and indeed cognitive psychologists have recently reported in this very Journal (Bluff et al., 2007) that New Caledonian crows share with humans and chimpanzees the ability to form and use stick tools – though the authors are wisely reluctant to conclude that parallel cognitive processes are involved in all three cases. Wisely, among other things, because it is important to distinguish between “symbolic” behaviors and those that are merely “intelligent.” Our symbolic mode of reasoning is not simply like that of our undoubtedly intelligent (i.e. responsively complex) precursors and relatives, only more so; it is not the result of adding just a little more intelligence, as if filling up a glass. It is instead qualitatively different, operating on a different algorithm. 
Clearly, the intelligence of human beings can be dissected without too much difficulty into the same categories as those used by cognitive scientists to study other primates: memory, attention, inference, representation, and so forth. But the key point is that, even where some kind of continuum can be discerned among primates including ourselves, we Homo sapiens don’t simply have more of the same; what is critical is how we integrate these elements.

…[T]he vastly varying cultures of modern humans are qualitatively different from anything we see amongst the great apes, principally because much of what makes human cultures unique lies in the abstract belief systems on which they are based, rather than on simple direct imitation. And on another cognitive level, what is truly different about human beings is that, based on our symbolic abilities, we have a generalized and apparently inexhaustible capacity for generating new behaviors when presented with new stimuli. (Tattersall, 2008, pp. 99-100)

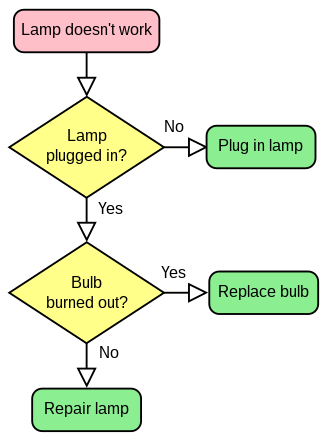

(e) Only human beings can understand abstract rules


A simple flowchart for troubleshooting a broken lamp. Only human beings appear to be capable of understanding abstract rules. Image courtesy of Booyabazooka, Wapcaplet and Wikipedia.

There is now an extensive body of research indicating that non-human animals are incapable of the following mental feats:

…[R]ole-based (i.e., “algebraic”) rules, as Marcus (2001) points out, … are the hallmarks of human thought and language. To date, there is no evidence for this kind of rule learning in any nonhuman animal. (Penn, Holyoak and Povinelli, 2008, p. 114)

…[T]here is a conspicuous absence of evidence that any nonhuman animal can reason about scale models, maps, or higher-order spatial relations in a human-like fashion. (Penn, Holyoak and Povinelli, 2008, p. 115)

Given the ubiquity and importance of hierarchical relations in human thought, the lack of any similar ability in nonhuman animals would therefore constitute a marked discontinuity between human and nonhuman minds. (Penn, Holyoak and Povinelli, 2008, p. 117)

Seed et al.’s (2006) results add to the growing evidence that corvids are quite adept at using stick-like tools (see, e.g., Weir & Kacelnik 2007). But as Seed et al. (2006) point out, these results also suggest that rooks share a common cognitive limitation with nonhuman primates: they do not understand “unobservable causal properties” such as gravity and support; nor do they reason about the higher-order relation between causal relations in an analogical or theory-like fashion. (Penn, Holyoak and Povinelli, 2008, p. 119)

Recently, there has been much discussion in the blogosphere about a 2012 study entitled, “New Caledonian crows reason about hidden causal agents”, in the Proceedings of the National Academy of Sciences (doi: 10.1073/pnas.1208724109, PNAS September 17, 2012) by Alex Taylor, Rachael Miller, and Russell Gray, which allegedly shows that crows have a tendency to attribute the movements of an inanimate object (e.g. a stick) to a causal agent whom they know to be in the vicinity, even when that agent is hidden from view, and that crows react with fear when they witness the movements of an inanimate object in the absence of any nearby causal agent. The authors of the study conclude that crows are capable of reasoning about a hidden causal agent.

For my part, I think that this is highly doubtful, for reasons which I have explained in my recent post, Are crows capable of reasoning about hidden causal agents? Five reasons for skepticism. First, as the authors of the study note, the same ability that the crows possess is also found in seven-month-old human infants. Most people would say that children of that age are not yet capable of reasoning, as they haven’t acquired a language. A second reason for skepticism is that although New Caledonian crows take care of their young for a period of two years (which is very long for a bird), the tool-making abilities of crows are not acquired through teaching from their parents. Third, the notion of a cause is quite a sophisticated concept, which even philosophers have a hard time explaining. It is therefore highly doubtful whether crows, whose warblings lack the vital properties of productivity, recursivity, and displacement which characterize language as such, have a concept of causation. Fourth, even if we were to generously grant that crows can somehow grasp the notion of a cause, it is quite another thing to claim that they possess the notion of a causal agent – that is, a being who deliberately performs voluntary actions, such as pushing a stick from behind a curtain. Evidence cited above by primate researchers Derek Penn and Daniel Povinelli in their paper, On the lack of evidence that non-human animals possess anything remotely resembling a ‘theory of mind’ (Philosophical Transactions of the Royal Society B, 362, 731-744, doi:10.1098/rstb.2006.2023) suggests that the awareness of other intentional agents is a uniquely human trait. A final reason for being leery of claims that crows can reason about hidden causal agents is the absence of rigorous testing of the claim that hiddenness played any role in the “reasoning” of the crows in the experiment reported by Taylor and his colleagues.

(f) Only human beings can go deeper than mere perceptions


Sunrise at Cua Lo, Vietnam, 16 July 2007. Only human beings can understand the proposition that the Sun is bigger than it looks, because only human beings are capable of distinguishing between appearance and reality. Image courtesy of Handyhuy and Wikipedia.

In Book III of his De Anima (On the Soul), Aristotle remarked that “we imagine the sun to be a foot in diameter though we are convinced that it is larger than the inhabited part of the earth.” He was referring here to our capacity to distinguish between appearance and reality – an ability which is, as far as we know, unique to human beings. Other animals live entirely in the world of appearances. As Penn, Holyoak and Povinelli (2008) put it:

… [O]nly humans form general categories based on structural rather than perceptual criteria, find analogies between perceptually disparate relations, draw inferences based on the hierarchical or logical relation between relations, cognize the abstract functional role played by constituents in a relation as distinct from the constituents’ perceptual characteristics, or postulate relations involving unobservable causes such as mental states and hypothetical physical forces. There is not simply a consistent absence of evidence for any of these higher-order relational operations in nonhuman animals; there is compelling evidence of an absence. (Penn, Holyoak and Povinelli, 2008, p. 110)

In short, the ability to reason about higher-order, analogical relations in a systematic and productive fashion appears to be an integral aspect of human causal cognition… In stark contrast to the human case, there is no compelling evidence that nonhuman animals form tacit theories about the unobservable causal mechanisms at work in the world, seek out explanations for anomalous causal relations, reason diagnostically about unobserved causes, or distinguish between genuine and spurious causal relations on the basis of their prior knowledge of abstract causal mechanisms. Indeed, there is consistent evidence of an absence across a variety of protocols (see, e.g., Penn & Povinelli 2007a; Povinelli 2000; Povinelli & Dunphy-Lelii 2001; Visalberghi & Tomasello 1998). (Penn, Holyoak and Povinelli, 2008, p. 118)

…[B]ased on the available empirical evidence, there appears to be a significant gap between the relational abilities of modern humans and all other extant species – a gap at least as big, we argued, as that between human and nonhuman forms of communication. Among extant species, only humans seem to be able to reason about the higher-order relations among relations in a systematic, structural, and role-based fashion. Ex hypothesi, higher-order, role-based relational reasoning appears to be a uniquely human specialization… (Penn, Holyoak and Povinelli, 2008: Authors’ response, p. 154)

To sum up: we have looked at six areas in which there are good scientific grounds for saying that the human mind is in a class of its own. I do not claim that the evidence I have assembled here is compelling, but it is certainly powerful. Readers will note that I have deliberately avoided making vague claims like, “Only human beings possess culture,” “Only human beings possess art” or “Only human beings possess morality,” as the terms “art,” “culture” and “morality” are difficult to define, which makes the statements hard to evaluate scientifically. Instead, I have confined myself to evidence coming from the laboratory.

The next topic I propose to discuss is the brain. Are there any grounds here to support Sullivan’s claim that there is a continuum of consciousness in animals?

Chapter 2 – The neurological evidence against a “continuum of consciousness”

As we have seen, Sullivan contends that all animals, including ourselves, occupy a continuum of consciousness. In order to convey just how wide of the mark the “continuum” metaphor is, I’d like to tell a little story, which I’ll call the parable of the plain.

The Parable of the Plain


Modern high-rise buildings tower above the shanties crowded along the Martin Pena Canal in this 1973 photo of Puerto Rico, United States. In a similar fashion, when we examine the minds of animals, we discover sharp discontinuities, rather than the smooth continuum portrayed by John Jeremiah Sullivan: the vast majority of the 7.7 million-odd species of animals lack consciousness altogether, and of the few thousand species that do possess it, no more than a few dozen possess self-consciousness – and that’s a very generous estimate. What’s more, there are good grounds for placing humans in a special category of their own. Image courtesy of John Vachon, the National Archives and Records Administration, the Environmental Protection Agency and Wikipedia.

Imagine that you live in a large city out in the desert, with a total population of 7,700,000 people. The vast majority of these people live on a plain, in shanties. However, a small but sizable proportion live underground, in caves, while about 15,000 people – perhaps 50,000 by an extremely generous count – live on a plateau overlooking the plain. These people live in brick houses, of varying size and quality. On top of the plateau itself, there is an even tinier elevated area or mesa, on top of which 60 people live, at the most – and perhaps fewer than a dozen. These people live in Hollywood-style mansions. Finally, there’s a guy living in a huge tower that’s elevated above the mesa, giving him a panoramic view of the whole city.

Would anyone say that there’s a continuum of wealth in this city? Obviously not: as we can see, there are sharp discontinuities of wealth between the various inhabitants, who fall into five distinct income categories. The situation is the same when we look at the 7,700,000 species of animals. The vast majority – including many insects – use “internal maps” of some sort to navigate their way around their world, but without any subjective feeling of “what it is like” when they navigate. A smaller number, like the underground cave dwellers, are not even this fortunate: they have senses, but no internal maps. (Corals would fall into this category.) The number of species of animals endowed with consciousness – defined broadly as a feeling of “what it is like” – probably comes to no more than 15,000, if we include all species of mammals and birds, and perhaps 800 species of cephalopods. Even if we generously include all vertebrates, we get a figure of no more than 50,000 or so. The number of self-aware species cannot possibly exceed 60, even if we include all corvids, dolphins, elephants and great apes. And finally, there’s the human animal. Certainly, all of these species of animals can communicate with other individuals – even humble bacteria can do that. But would anyone say that they’re all lying on the same spectrum?

Why scientists believe animal consciousness comes in two discrete varieties


Midline structures in the brainstem and thalamus necessary to regulate the level of brain arousal. Small, bilateral lesions in many of these nuclei cause a global loss of consciousness. Image (courtesy of Wikipedia) taken from Christof Koch (2004), The Quest for Consciousness: A Neurobiological Approach, Roberts, Denver, CO, with permission from the author under license.

Sullivan would have his readers believe that all animal consciousness falls along a continuum. He may be surprised to learn that neuroscientists disagree with his “continuum” metaphor: in their work, they classify consciousness into two sharply distinct varieties.

Professor James D. Rose (Department of Zoology and Physiology, University of Wyoming) has conducted an exhaustive survey of the current scientific literature on consciousness. A few years ago, he summarized current scientific findings on consciousness in a widely cited article, entitled, The Neurobehavioral Nature of Fishes and the Question of Awareness and Pain (Reviews in Fisheries Science, 10(1): 1-38, 2002). According to Professor Rose, neuroscientists customarily distinguish between two kinds of consciousness: primary consciousness, or moment-to-moment awareness of sensory experiences and feelings; and higher-order consciousness, or self-awareness:

Although consciousness has multiple dimensions and diverse definitions, use of the term here refers to two principal manifestations of consciousness that exist in humans (Damasio, 1999; Edelman and Tononi, 2000; Macphail, 1998): (1) “primary consciousness” (also known as “core consciousness” or “feeling consciousness”) and (2) “higher-order consciousness” (also called “extended consciousness” or “self-awareness”). Primary consciousness refers to the moment-to-moment awareness of sensory experiences and some internal states, such as emotions. Higher-order consciousness includes awareness of one’s self as an entity that exists separately from other entities; it has an autobiographical dimension, including a memory of past life events; an awareness of facts, such as one’s language vocabulary; and a capacity for planning and anticipation of the future. Most discussions about the possible existence of conscious awareness in non-human mammals have been concerned with primary consciousness, although strongly divided opinions and debate exist regarding the presence of self-awareness in great apes (Macphail, 1998). (2002, PDF, Section IV, p. 5)

The same distinction is made in a paper entitled, Criteria for consciousness in humans and other mammals (Consciousness and Cognition, 14 (2005), 119–139), co-authored by A. K. Seth, B. J. Baars and David B. Edelman. David Edelman is a prominent signatory of the 2012 Cambridge Declaration on Consciousness. The Edelman cited in the passage below is his father, Dr. Gerald Edelman:

Consciousness is often differentiated into primary consciousness, which refers to the presence of a reportable multimodal scene composed of perceptual and motor events, and higher-order consciousness, which involves referral of the contents of primary consciousness to interpretative semantics, including a sense of self and, in more advanced forms, the ability to explicitly construct past and future scenes (Edelman, 1989).

Already, then, we can see that there are problems with Sullivan’s claim that animals occupy a continuum of consciousness. Instead, the evidence indicates that animals fall into three distinct categories: those that lack consciousness altogether; those that possess primary consciousness but not higher-order consciousness; and those which, like ourselves, possess higher-order consciousness.

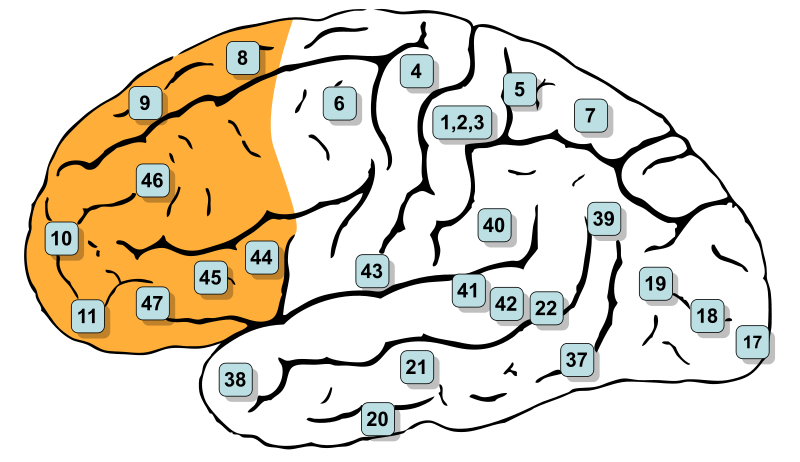

(a) Primary consciousness

What are the neural requirements for primary consciousness?


Anatomical subregions of the cerebral cortex. The neocortex is the outer layer of the cerebral hemispheres. It is made up of six layers, labelled I to VI (with VI being the innermost and I being the outermost). Consciousness requires widely distributed brain activity that is simultaneously diverse, temporally coordinated, and of high informational complexity. The mammalian neocortex satisfies these functional criteria because of its unique structural features. Only mammals possess a neocortex. However, a homologous structure also exists in birds. No other animals – not even the octopus – possess anything of comparable complexity. Image (courtesy of Wikipedia) taken from Patrick Hagmann et al. (2008) “Mapping the Structural Core of Human Cerebral Cortex,” PLoS Biology 6(7): e159. doi:10.1371/journal.pbio.0060159.

Primary consciousness has three distinguishing features at the neurological level, according to a paper by Anil K. Seth, Bernard J. Baars and Cambridge Declaration signatory David B. Edelman, titled, Criteria for consciousness in humans and other mammals (Consciousness and Cognition, 14 (2005), 119–139):

Physiologically, three basic facts stand out about consciousness.

2.1. Irregular, low-amplitude brain activity

Hans Berger discovered in 1929 that waking consciousness is associated with low-level, irregular activity in the raw EEG, ranging from about 20–70 Hz (Berger, 1929). Conversely, a number of unconscious states—deep sleep, vegetative states after brain damage, anesthesia, and epileptic absence seizures—show a predominance of slow, high-amplitude, and more regular waves at less than 4 Hz (Baars, Ramsoy, & Laureys, 2003). Virtually all mammals studied thus far exhibit the range of neural activity patterns diagnostic of both conscious states…

2.2. Involvement of the thalamocortical system

In mammals, consciousness seems to be specifically associated with the thalamus and cortex (Baars, Banks, & Newman, 2003)… To a first approximation, the lower brainstem is involved in maintaining the state of consciousness, while the cortex (interacting with thalamus) sustains conscious contents. No other brain regions have been shown to possess these properties… Regions such as the hippocampal system and cerebellum can be damaged without a loss of consciousness per se.

2.3. Widespread brain activity

Recently, it has become apparent that conscious scenes are distinctively associated with widespread brain activation (Srinivasan, Russell, Edelman, & Tononi, 1999; Tononi, Srinivasan, Russell, & Edelman, 1998c). Perhaps two dozen experiments to date show that conscious sensory input evokes brain activity that spreads from sensory cortex to parietal, prefrontal, and medial-temporal regions; closely matched unconscious input activates mainly sensory areas locally (Dehaene et al., 2001). Similar findings show that novel tasks, which tend to be conscious and reportable, recruit widespread regions of cortex; these tasks become much more limited in cortical representation as they become routine, automatic and unconscious (Baars, 2002)…

Together, these first three properties indicate that consciousness involves widespread, relatively fast, low-amplitude interactions in the thalamocortical core of the brain, driven by current tasks and conditions. Unconscious states are markedly different and much less responsive to sensory input or endogenous activity.

In his article, The Neurobehavioral Nature of Fishes and the Question of Awareness and Pain (Reviews in Fisheries Science, 10(1): 1-38, 2002), Professor James D. Rose explains the vital importance of the neocortex for conscious awareness:

Primary consciousness appears to depend greatly on the functional integrity of several cortical regions of the cerebral hemispheres especially the “association areas” of the frontal, temporal, and parietal lobes (Laureys et al., 1999, 2000a-c). Primary consciousness also requires the operation of subcortical support systems such as the brainstem reticular formation and the thalamus that enable a working condition of the cortex. However, in the absence of cortical operations, activity limited to these subcortical systems cannot generate consciousness (Kandel et al., 2000; Laureys et al., 1999, 2000a; Young et al., 1998). (2002, PDF, Section IV, pp. 5-6)

A critical point in this analysis is the fact that a large part of the activity occurring in our brain is unavailable to our conscious awareness (Dolan, 2000; Edelman and Tononi, 2000; Koch and Crick, 2000; Libet, 1999; Merikle and Daneman, 2000). This is true of some types of cortical activity and is true for all brainstem and spinal cord activity. We are unaware of activity confined to primary sensory cortex (Koch and Crick, 2000; Lamme and Roelfsema, 2000; Laureys et al., 2000c; Libet, 1999; Rees et al., 2000). We also have no conscious contact with the massive numbers of neurons in our cerebellum, despite the fact that these neurons are intensely active, controlling many aspects of movement and posture. Likewise, we are unaware of the activity of neurons in our hypothalamus, whose firing regulates our heart rate, blood pressure, and neuroendocrine function. (Rose, 2002, PDF, p. 13)

The evidence that the neocortex is critical for conscious awareness applies to both types of consciousness. Evidence showing that neocortex is the foundation for consciousness also has led to an equally important conclusion: that we are unaware of the perpetual neural activity that is confined to subcortical regions of the central nervous system, including cerebral regions beneath the neocortex as well as the brainstem and spinal cord (Dolan, 2000; Guzeldere et al., 2000; Jouvet, 1969; Kihlstrom et al., 1999; Treede et al., 1999). (Rose, 2002, PDF, Section IV, p. 5)

Why is having a neocortex so important for consciousness?

In the passages cited above, I quoted from several neuroscientists (including Cambridge Declaration signatory David Edelman) who testified that having a neocortex is absolutely essential for consciousness. Readers may be wondering why this region of the brain is so important. It turns out that it has several unique neurological properties. Professor James D. Rose describes these properties in his article, The Neurobehavioral Nature of Fishes and the Question of Awareness and Pain (Reviews in Fisheries Science, 10(1): 1-38, 2002):

The reasons why neocortex is critical for consciousness have not been resolved fully, but the matter is under active investigation. It is becoming clear that the existence of consciousness requires widely distributed brain activity that is simultaneously diverse, temporally coordinated, and of high informational complexity (Edelman and Tononi, 1999; Iacoboni, 2000; Koch and Crick, 1999; 2000; Libet, 1999). Human neocortex satisfies these functional criteria because of its unique structural features: (1) exceptionally high interconnectivity within the neocortex and between the cortex and thalamus and (2) enough mass and local functional diversification to permit regionally specialized, differentiated activity patterns (Edelman and Tononi, 1999). These structural and functional features are not present in subcortical regions of the brain, which is probably the main reason that activity confined to subcortical brain systems can’t support consciousness. Diverse, converging lines of evidence have shown that consciousness is a product of an activated state in a broad, distributed expanse of neocortex. Most critical are regions of “association” or homotypical cortex (Laureys et al., 1999, 2000a-c; Mountcastle, 1998), which are not specialized for sensory or motor function and which comprise the vast majority of human neocortex. In fact, activity confined to regions of sensory (heterotypical) cortex is inadequate for consciousness (Koch and Crick, 2000; Lamme and Roelfsema, 2000; Laureys et al., 2000a,b; Libet, 1997; Rees et al., 2000). (Rose, 2002, PDF, p. 6)

The dozen-odd signatories of the 2012 Cambridge Declaration on Consciousness have declared that “The absence of a neocortex does not appear to preclude an organism from experiencing affective states.” This statement is probably correct, as far as it goes. It would be a mistake to assume, however, that affective states (or in common parlance, emotions) are necessarily conscious states. At least some of our human emotions are unconscious. Thus the mere existence of something resembling emotions in animals cannot, by itself, be taken as evidence of consciousness.

What are the behavioral indicators for consciousness?

Accurate report is the key behavioral criterion used by neuroscientists to determine the presence of consciousness, as Professor James Rose explains in his article, The Neurobehavioral Nature of Fishes and the Question of Awareness and Pain (Reviews in Fisheries Science, 10(1): 1-38, 2002):

Although consciousness has been notoriously difficult to define, it is quite possible to identify its presence or absence by objective indicators. This is particularly true for the indicators of consciousness assessed in clinical neurology, a point of special importance because clinical neurology has been a major source of information concerning the neural bases of consciousness. From the clinical perspective, primary consciousness is defined by: (1) sustained awareness of the environment in a way that is appropriate and meaningful, (2) ability to immediately follow commands to perform novel actions, and (3) exhibiting verbal or nonverbal communication indicating awareness of the ongoing interaction (Collins, 1997; Young et al., 1998). Thus, reflexive or other stereotyped responses to sensory stimuli are excluded by this definition… (2002, PDF, p. 5).

Thus for neuroscientists, locomotion and reactions to noxious stimuli do not qualify as criteria for consciousness. In his article, Rose points out that patients in a persistent vegetative state, who lack consciousness, are capable of a wide range of responsive behaviors, which nevertheless fall short of meeting the criteria for consciousness. Non-primate mammals whose cortex has been removed may even be capable of moving around. This does not mean, however, that they are conscious:

Cerebral cortex destruction leaves a human in a persistent vegetative state in which all conscious awareness is abolished (Figure 3). Sleep-wake cycles and reactions to noxious stimuli persist in such cases due to mediation of these processes by lower levels of the central nervous system (Jouvet, 1969; Young et al., 1998). Although many of the more stereotyped behaviors of humans and other mammals are generated by brainstem and spinal systems, these behaviors are still very dependent on support by the cerebral hemispheres for effective functioning (Berntson and Micco, 1976; Grill and Norgren, 1978; Huston and Borbely, 1974). Non-primate mammals are capable of a greater range of functional behavior after the destruction of the cerebral hemispheres. These animals exhibit locomotion, postural orientation, elements of mating behavior, and fully developed behavioral reactions to noxious stimuli (Berntson and Micco, 1976; Rose, 1990; Rose and Flynn, 1993). However, unlike fish with similar brain damage, these behaviors are not really functional and such mammals cannot survive without supportive care, including assisted feeding. (2002, PDF, p. 11)

Which animals are likely to be conscious?

Readers may be wondering which animals are regarded by neuroscientists as candidates for consciousness. The short answer is that primary consciousness is likely to be found only in mammals and birds, which together make up a mere 15,000 (or just 0.2%) of the 7.7 million-odd species of animals. As we have seen, consciousness requires widely distributed brain activity that is simultaneously diverse, temporally coordinated, and of high informational complexity. The neocortex satisfies these functional criteria because of its unique structural features. Only mammals possess a neocortex. However, a homologous structure also exists in birds. The 2012 Cambridge Declaration on Consciousness is therefore on secure ground when it states:

Birds appear to offer, in their behavior, neurophysiology, and neuroanatomy a striking case of parallel evolution of consciousness… Mammalian and avian emotional networks and cognitive microcircuitries appear to be far more homologous than previously thought. Moreover, certain species of birds have been found to exhibit neural sleep patterns similar to those of mammals, including REM sleep and, as was demonstrated in zebra finches, neurophysiological patterns, previously thought to require a mammalian neocortex.

It should be pointed out, however, that the attribution of consciousness to birds is neurologically plausible precisely because structures homologous to the mammalian neocortex have been found in the avian brain. No other animals, apart from birds, possess brain structures capable of widely distributed brain activity that is simultaneously diverse, temporally coordinated, and of high informational complexity, as in the mammalian neocortex. It is therefore unlikely that other creatures besides mammals and birds are conscious.

There is, however, a possibility that cephalopods may be conscious. David B. Edelman, Bernard J. Baars and Anil K. Seth defend this view, in an article entitled, Identifying hallmarks of consciousness in non-mammalian species (Consciousness and Cognition 14, 2005, 169–187):

Apart from their extraordinary behavioral repertoires, perhaps the most suggestive finding in favor of precursor states of consciousness in at least some members of Cephalopoda is the demonstration of EEG patterns, including event related potentials, that look quite similar to those in awake, conscious vertebrates (Bullock & Budelmann, 1991)… It is possible that the optic, vertical, and superior lobes of the octopus brain are relevant candidates and that they may function in a manner analogous to mammalian cortex…

Clearly, a strong case for even the necessary conditions of consciousness in the octopus has not been made. Nevertheless, the present evidence on cephalopod behavior and physiology is by no means sufficient to rule out the possibility of precursors to consciousness in this species.

It should be noted that whereas the brain of birds possesses structures which are homologous to the mammalian neocortex, the brain of a cephalopod contains structures which are merely analogous. Consequently, the case for the existence of consciousness in these animals is much weaker than for birds.

It should also be noted that the authors cited above are referring to just one group of cephalopods: the 300-odd species of octopuses (order Octopoda). Nevertheless, even if we were to generously allow that all cephalopods are conscious, that would increase the number of conscious species of animals by a mere 800, bumping up the total to fewer than 16,000, out of 7.7 million species of animals.

The authors of the 2012 Cambridge Declaration on Consciousness go much further, however. They suggest that insects may also possess consciousness, and cite as evidence the fact that “neural circuits supporting behavioral/electrophysiological states of attentiveness, sleep and decision making appear to have arisen in evolution as early as the invertebrate radiation, being evident in insects and cephalopod mollusks (e.g., octopus).” We have already seen that the Cambridge Declaration carries little weight in the scientific community, as it was signed by fewer than a dozen neuroscientists. In any case, the authors’ logic on this point is very poor. Attentiveness and consciousness are not the same thing, and decision-making can take place even in the absence of consciousness: all it requires is a brain that is capable of processing information relayed by the senses, updating its internal “map of the world”, and adjusting its movements accordingly. What would be much more convincing is a demonstration that these insects were capable of following commands, relayed via signals (e.g. flashing lights), to perform novel body movements.

I conclude that while there are good grounds for ascribing consciousness to mammals and birds, and very tentative grounds for ascribing it to cephalopods, it is extremely unlikely that other animals, such as fish or insects, are conscious.

Is there a sharp dividing line between animals that are conscious and animals that are not?

The philosopher Daniel Dennett, in his book, Kinds of Minds (Phoenix paperback, London, 1997), argues that sentience comes in every conceivable shade, without any threshold between sentient and non-sentient beings. By contrast, the distinguished psychologist Nicholas Humphrey argues that consciousness is an all-or-nothing affair in his best-selling book, A History of the Mind (Vintage edition, London, 1993), where he contends that an animal is capable of consciousness if and only if it possesses a short, high-fidelity sensory feedback loop that supports reverberant activity. Who’s right?

In the course of my Ph.D. research, I found that there seem to be four behaviors which are unique to mammals and birds, the only classes of animals considered by most neurologists to be potentially capable of primary consciousness. These four behaviors are as follows:

(i) a clear-cut distinction between REM sleep, which animals undergo during conscious dreams, and slow-wave sleep;

(ii) the ability to simultaneously access and integrate information from multiple sensory channels;

(iii) awareness of Piagetian object constancy; and