One of the currently popular objections to the concept of functionally specific complex organisation and associated information (FSCO/I) and its super-set Complex Specified Information (CSI) is that these are unscientific, ill-founded, logically circular concepts. The objection is actually groundless, but it is easy to lose sight of the true balance on the merits in the midst of the spark, flash and smoke of rhetoric. Accordingly, it is reasonable to set them in the context of general and scientific inductive reasoning, and its factual basis. I therefore recently set out some of that context in summary in VJT’s thread on seeking agreement on CSI, at no 7. Clipping, with adjustments and figures added:

_______________

>> It seems to me that there are several issues here that need to be taken as balancing points:

1: All significant scientific findings and especially explanations are inherently provisional and subject to empirical tests, as they are cases of inductive reasoning, and therefore are subject to correction or replacement on future analysis or findings.

2: This holds for the design inference across chance and/or mechanical necessity vs design . . . understood as intelligently directed configuration. This may be immediately seen from the per-aspect design inference explanatory filter, as has been discussed at UD for many years now:

3: We also need to come to the prior evidence-led recognition of a basic commonplace fact of engineering, which can be summed up:

a: Many systems are complex based on multiple interacting parts that

b: are wired up on in effect a wiring diagram that imposes

c: fairly tight constraints on configs that exhibit relevant function, vs a much larger number of other possible clumped or scattered configs of the same components. (That is, islands of function in much wider config spaces of possible but non-functional configs, are real. Contemplate the properly assembled Abu 6500 C3 reel vs a shaken up bag of its parts, if you doubt that the informational wiring diagram makes a difference.)

d: The wiring diagram is highly informational, which can at first level be roughly quantified on the number of y/n q’s that are to be answered to specify acceptable configs (up to tolerances etc).

e: This can be descriptively titled functionally specific complex organisation and associated information, FSCO/I. Where, CSI is an abstract super-set, based on generalising the specification to a detachable description that identifies a deeply isolated zone T in a field of possible configs, W. As Dembski states in No Free Lunch:

p. 148: “The great myth of contemporary evolutionary biology is that the information needed to explain complex biological structures can be purchased without intelligence. My aim throughout this book is to dispel that myth . . . . Eigen and his colleagues must have something else in mind besides information simpliciter when they describe the origin of information as the central problem of biology.

I submit that what they have in mind is specified complexity [[cf. here below], or what equivalently we have been calling in this Chapter Complex Specified information or CSI . . . . Biological specification always refers to function . . . In virtue of their function [[a living organism’s subsystems] embody patterns that are objectively given and can be identified independently of the systems that embody them. Hence these systems are specified in the sense required by the complexity-specificity criterion . . . the specification can be cashed out in any number of ways [[through observing the requisites of functional organisation within the cell, or in organs and tissues or at the level of the organism as a whole] . . .”

p. 144: [[Specified complexity can be defined:] “. . . since a universal probability bound of 1 [[chance] in 10^150 corresponds to a universal complexity bound of 500 bits of information, [[the cluster] (T, E) constitutes CSI because T [[effectively the target hot zone in the field of possibilities] subsumes E [[effectively the observed event from that field], T is detachable from E, and T measures at least 500 bits of information . . . ”
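The correspondence between a 1 in 10^150 probability bound and roughly 500 bits can be checked with a short sketch. This is only an illustrative calculation, taking information as -log2(p); the function names are my own and are not from Dembski's text.

```python
import math

def bits_from_odds(log10_odds: float) -> float:
    """Information in bits for a probability of 1 in 10**log10_odds, i.e. -log2(p)."""
    return log10_odds * math.log2(10)

def log10_configs(bits: float) -> float:
    """Base-10 logarithm of the number of configurations of `bits` yes/no answers."""
    return bits * math.log10(2)

# 1 chance in 10^150, expressed in bits (a little under 500, so 500 is a round figure)
print(f"1 in 10^150 is about {bits_from_odds(150):.1f} bits")

# Going the other way: 500 bits expressed as a power of ten
print(f"500 bits is about 10^{log10_configs(500):.1f} configurations")
```

The two directions agree to within rounding, which is all the 500-bit threshold claims.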

4: I am not satisfied that many objectors to the design inference on FSCO/I are appropriately responsive to the basic points already made, and it seems that there is a problem of selective hyperskepticism at work.

5: From the emergence of evidence on biochemistry and molecular biology, especially from the elucidation of DNA from 1953 on, it became clear that FSCO/I and its subset, digitally coded functionally specific complex information, are present in the living cell. Thus by the 1970s leading OOL researchers Orgel and Wicken went on record:

ORGEL, 1973:

. . . In brief, living organisms are distinguished by their specified complexity. Crystals are usually taken as the prototypes of simple well-specified structures, because they consist of a very large number of identical molecules packed together in a uniform way. Lumps of granite or random mixtures of polymers are examples of structures that are complex but not specified. The crystals fail to qualify as living because they lack complexity; the mixtures of polymers fail to qualify because they lack specificity. [[The Origins of Life (John Wiley, 1973), p. 189.]

WICKEN, 1979:

‘Organized’ systems are to be carefully distinguished from ‘ordered’ systems. Neither kind of system is ‘random,’ but whereas ordered systems are generated according to simple algorithms [[i.e. “simple” force laws acting on objects starting from arbitrary and common-place initial conditions] and therefore lack complexity, organized systems must be assembled element by element according to an [[originally . . . ] external ‘wiring diagram’ with a high information content . . . Organization, then, is functional complexity and carries information. It is non-random by design or by selection, rather than by the a priori necessity of crystallographic ‘order.’ [[“The Generation of Complexity in Evolution: A Thermodynamic and Information-Theoretical Discussion,” Journal of Theoretical Biology, 77 (April 1979): p. 353, of pp. 349-65. (Emphases and notes added. Nb: “originally” is added to highlight that for self-replicating systems, the blue print can be built-in.)]

(Notice, very carefully, please: “Organization, then, is functional[ly specific] complexity and carries information.” This is the root of the descriptive term, FSCO/I. CSI is then readily seen as the superset that extends the concept beyond biofunction or function of technological entities or text strings in programs or communications, to an abstract general concept rooted in thermodynamics thought. )

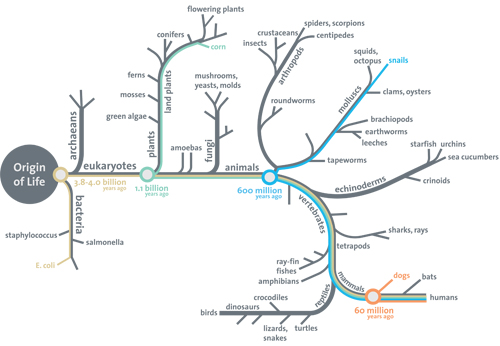

6: That set of remarks must be understood in light of the context outlined above. The origin of biofunctional, complex specific, interactive, information rich organisation is at the root of the tree of life and its branching.

7: As a simple comparison for strings of y/n q’s to specify states, 500 – 1,000 H/T coins have 3.27*10^150 – 1.07*10^301 possibilities as configs. The former overwhelms the number of search operations possible for the 10^57 atoms of the sol system, each making 10^14 attempts per second for 10^17 s, roughly as one straw to a cubical haystack as thick as our galaxy. For the latter, the haystack to be compared to one straw would swallow up the observed cosmos of some 90 bn LY across. Blind chance led needle in haystack searches of such stacks will be maximally sparse and unlikely to be successful. Matters not if you scatter a dust, carry out random walks or combine the two, etc. Too much stack, too little search:
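The arithmetic in point 7 can be reproduced with a minimal sketch, working in base-10 logarithms so the numbers stay manageable. The helper names are hypothetical, and the atom count, attempt rate and time span are simply the round figures quoted in the text, not independent estimates.

```python
import math

def log10_configs(bits: int) -> float:
    """log10 of the number of configurations of `bits` two-state (H/T) coins."""
    return bits * math.log10(2)

def sci(log10_value: float, digits: int = 2) -> str:
    """Format 10**log10_value as mantissa*10^exponent."""
    exponent = math.floor(log10_value)
    mantissa = 10 ** (log10_value - exponent)
    return f"{mantissa:.{digits}f}*10^{exponent}"

def log10_search_ops(log10_atoms: float, log10_rate: float, log10_seconds: float) -> float:
    """log10 of total attempts: atoms * attempts per second * seconds."""
    return log10_atoms + log10_rate + log10_seconds

def log10_fraction_sampled(bits: int, log10_ops: float) -> float:
    """log10 of the largest fraction of the config space the attempts could cover."""
    return log10_ops - log10_configs(bits)

sol_ops = log10_search_ops(57, 14, 17)  # figures quoted in the text for the sol system
print("500 coins: ", sci(log10_configs(500)), "configs")
print("1000 coins:", sci(log10_configs(1000)), "configs")
print("sol system attempts: 10^%d" % sol_ops)
print("fraction of the 500-coin space sampled: about 10^%.1f" % log10_fraction_sampled(500, sol_ops))
```

On these figures the sol system makes about 10^88 attempts, a tiny fraction of the 500-coin space, which is the sense of "one straw to a haystack".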

8: So, we have an observable phenomenon in life that, even without attempted explicit detailed precise quantification of improbability, is a formidable challenge to any mechanism that appeals to chance-led non foresighted processes as engines of innovation hoping to generate FSCO/I.

9: Where OOL is pivotal, because all there is by way of plausible chance and necessity hyps is the physics, chemistry and thermodynamics of Darwin’s warm salty pond, or a volcano vent or a cold comet or a gas giant moon etc. And in particular, appeals to the magic of differential reproductive success in niches have to first account for the origin of code-using von Neumann self replicators [vNSRs] added to metabolising automata based on homochiral proteins in gated encapsulated cells, in reasonable environments, in a reasonable time and scope of resources on empirically demonstrated capacity of credible forces and materials of nature.

10: Such simply has never been done.

11: And, as we saw above, that is the ROOT of the evolutionary materialist tree of life.

12: Where, there is only one empirically plausible, routinely observed, needle in haystack sparse search challenge answering known source of FSCO/I. Namely, intelligently directed configuration, aka design. (That’s what configured this comment post, as text strings in English. It is what designed and built the PC etc you are reading this on. It did the same for the Abu 6500 C3 reel, and more.)

13: Without explicit calculations beyond the ones generally indicated, we are already in a position to see that FSCO/I is an inductively strong and reliable sign of design as cause. (FSCO/I as understood, does not require irreducible complexity, as redundancies may be involved, etc. IC is a subset — with a set of well matched parts that are each and all necessary for the core function to exist — but it is not the only one. And, by abstracting away from biofunction or even interactive functionality to define specification, we arrive at a superset, complex specified information. Where the possibility of interactive function is enough.)

14: Just to indicate a bit, consider a naively simplistic cell, of 100 proteins of average length 100 AAs, where instead of 4.32 bits per AA on the choice of 20 alternatives, let things be so loose — this is implausibly loose — there is but one y/n required to specify on average, maybe is this hydrophilic or hydrophobic; any h-phil or h-phob AA would do in the same locus on the chain. Such proteins do the work of the cell. Already that is 10,000 bits, which is vastly beyond the FSCO/I threshold. Recall, for each bit beyond 1,000 the config space cardinality W DOUBLES. 9,000 extra doublings beyond 1.07*10^301 configs, here. (The straw to haystack calc on the 10^111 or so possible acts of 10^80 atoms at 10^14 steps per second, for 10^17 s, would stand as a straw to a cubical haystack rather larger than our cosmos of some 90 bn LY across. Sparse, predictably futile blind search.)
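A minimal sketch of the count in point 14, for anyone who wishes to vary the assumptions. The function names are my own, and the 100 proteins of 100 AAs at one y/n per residue are the deliberately loose figures from the text, not measured values.

```python
import math

def bits_per_residue(alternatives: int) -> float:
    """Bits needed to pick one of `alternatives` equally likely options."""
    return math.log2(alternatives)

def cell_bits(n_proteins: int, avg_length: int, bits_per_aa: float) -> float:
    """Total bits to specify n_proteins chains of avg_length residues each."""
    return n_proteins * avg_length * bits_per_aa

THRESHOLD_BITS = 1000  # upper FSCO/I threshold used in the text

loose = cell_bits(100, 100, 1.0)                   # one y/n per residue
full = cell_bits(100, 100, bits_per_residue(20))   # free choice among 20 AAs

print(f"bits per AA at 20 alternatives: {bits_per_residue(20):.2f}")
print(f"loose estimate: {loose:.0f} bits")
print(f"extra doublings beyond {THRESHOLD_BITS} bits: {loose - THRESHOLD_BITS:.0f}")
print(f"full 20-way estimate: {full:.0f} bits")
```

Even the loose one-bit-per-residue case lands at 10,000 bits, so the conclusion does not hinge on the 4.32 bits per AA figure.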

15: In short, design sits at the table for OOL, and therefore for everything beyond too, shifting the balance of reasonable plausibilities thereafter drastically.

16: Where, if we need a particular reason to justify that, we may wish to consider the challenge of origin of thousands of protein clusters across AA space, constituting thousands of islands of function that are deeply isolated, as VJT pointed out in the OP.

17: In this context, I do not buy the concept being pushed by objectors, that one must calculate probabilities, and especially must do so directly while putting up a list of arbitrary chance driven hypotheses that they refrain from offering.

18: That is irresponsible burden of proof shifting. The normal empirically warranted explanation of FSCO/I is design. Indeed, it is a common-sense sign of design, used routinely in all sorts of circumstances including when you infer an author you probably have not ever actually seen from the text of this post. The objectors wish to dismiss that without showing empirically backed warrant, which is selectively hyperskeptical.

19: Instead, I strongly suggest that once very sparse sampling is evidently on the table, and large config spaces confront FSCO/I, it is those who would put up an alternative who need to warrant on empirical evidence that they have mechanisms capable of creating FSCO/I without intelligently guided configuration. This is the vera causa test.

20: There is no good reason to believe this test has been met by the objectors, and the fairly obvious defects in the many suggested counter-examples (which are typically not currently discussed after a few dozen were shot down, typically proving to be cases in point of design) speak loud and clear on how weak their case is.

21: Further, we have something else that is connected to probability, which is readily observed and/or estimated. Information content.

22: Which, as outlined above plainly points to config spaces well beyond reasonable sparse needle in haystack search. Where also, apart from y/n q’s or the equivalent estimates (which are commonplace in information practice, including in Shannon’s original paper) we may make stochastic studies that bring out redundancies etc and give us informational estimates anchored in how the available set of possibilities has been used by whatever has emerged across time. For instance the ASCII symbols are not equi-probable in English text and as a rule e is about 1/8 of English text, where also in most cases q will be followed by u, etc.
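To illustrate the kind of stochastic estimate point 22 has in mind, here is a rough sketch that compares a flat y/n-style count (all symbols equally likely) with the average information per symbol estimated from observed frequencies. The sample sentence and function names are hypothetical, and a single short string is far too small to give real English statistics; it only shows the method.

```python
import math
from collections import Counter

def entropy_bits_per_symbol(text: str) -> float:
    """Shannon average information per symbol, from observed symbol frequencies."""
    counts = Counter(text)
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())

def flat_bits_per_symbol(text: str) -> float:
    """Bits per symbol if every distinct symbol seen were equally likely."""
    return math.log2(len(set(text)))

# Hypothetical sample; any longer English text could be substituted.
sample = "the quick question is whether e really is the most frequent letter here"

print(f"flat estimate:      {flat_bits_per_symbol(sample):.2f} bits/symbol")
print(f"frequency estimate: {entropy_bits_per_symbol(sample):.2f} bits/symbol")
print(f"share of 'e':       {sample.count('e') / len(sample):.3f}")
```

The frequency-based figure never exceeds the flat one, and the gap between them reflects the redundancy in how the symbol set is actually used. This single-symbol estimate does not capture pair effects such as q being followed by u, which would need conditional frequencies.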

23: Where also FSCO/I, a fact not a speculation, naturally comes in islands of function (well matched, properly configured parts that interact to achieve function, which constrains acceptable configs sharply relative to the number that would be feasible that are clumped or scattered), for which we can observe empirical indications for biology in the cell based on distribution of proteins in AA sequence space, constrained by requisites of folding and functioning in biological cellular environments.

24: Where the above suffices to show that debate talking points and assertions of circularity are groundless. The improbability of finding islands of function deeply isolated in config spaces of very large size on blind search is not a matter of question-begging but of the nature of the configuration constraints imposed by function, vs otherwise possible clumped or scattered configs. It is an induction backed by trillions of observed cases and linked sparse search for a needle in a very big haystack.

25: Yes, one must be fairly careful in wording, but that is beside the point of the fundamental empirical issue at stake. Until I see signs of objectors taking the issue of configuration constraints to achieve organised interactive function seriously [and I find that conspicuously, consistently absent, for years], I see no reason whatsoever to entertain objections based on assertions of circularity, given what has been outlined above, yet again.>>

_______________

So, the circularity objection becomes clearly groundless once we recognise the inductive nature, and the empirically grounded basis of both the design inference and the concept FSCO/I and its superset CSI. This post is FTR, and discussion can be entertained here on. END