Let us follow an example being discussed in UD comment threads in recent days, of comparing two piles of “dirt”. (U/D, I add — on advice, a sample from ES, as a PS.)

CASE A: The volcanic dome of Montserrat’s Soufriere Hills Volcano, a few miles south of where I am composing this post . . .

CASE B: Another pile of “dirt” . . .

Q: Is there an observable, material difference between these two piles that can allow an observer to infer as to causal source, even if s/he has not seen the causal process in action directly?

A: Yes, and it is patent. A child will instantly and reliably recognise the difference, as will a member of the most remote, unreached people group. Namely (using reasonable and easily understood senses of terms): functionally specific, complex organisation and/or associated information, FSCO/I. This is a known, reliable sign of design as cause, and something for which there is no plausible needle-in-haystack explanation but design, once one understands the scope of the configuration space confronting such a search and the limits on atomic and temporal resources: our solar system has ~10^57 atoms, our observed cosmos ~10^80 atoms, and the available time is ~10^17 s since the Big Bang event, where the fastest rate for chemical interactions is about 10^14/s.

Where, we may remind ourselves of the needle in haystack sampling/search challenge facing such:

But, it will be typically objected, we only need to make incremental changes to cumulatively climb the hill of fitness!

In fact, the challenge as shown dominates: one needs first to find the islands of function in the relevant config space, without oracles telling you warmer/colder, as there is no functional feedback until one is actually within such an island as T. Also, the requirement of multiple, well-matched, properly arranged and coupled component parts to achieve function (above, to represent a fantasy castle as a sculpture made with sand particles) will confine one to relatively tiny fractions of the space of possible configurations on a beach or elsewhere. That’s the basic reason that there are no volcano domes in the shape of sand castles.

In the case of a solar system scale search, we have some 10^57 atoms, interacting across 10^17 s, with an upper reasonable rate of 10^14 interactions per second. If we were to give each such atom a tray of 500 ordinary H/T coins, and if they were flipped and “read” every 10^-14 s, we would be able to sample only a small, almost infinitesimal fraction of the space of possibilities for 500 coins: at most 10^88 observations against 2^500, or about 3.27 * 10^150, possible configurations. A picture of the challenge would be that if the samples were comparable in scope to a straw, the config space would be a cubical haystack 1,000 light years across, comparably thick as the central bulge of our barred spiral galaxy. Light that set out on its journey when William of Normandy was landing on the shores of England would not yet have quite finished traversing that distance; by comparison, light from the sun, about 93 mn miles away, takes about 8 minutes to reach us.

Were such a haystack superposed on our neighbourhood, and were such a sample taken through blind chance and mechanical necessity, with all but absolute certainty — with practical certainty — it would pick up only straw. As has been highlighted any number of times.
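The sampling arithmetic above can be checked with a few lines of Python. This is only a sketch using the round figures quoted in the text (10^57 atoms, 10^17 s, 10^14 observations per second, 500 coins); the function name `sampled_fraction` is made up for illustration, and the calculation generously assumes that no configuration is ever sampled twice, so it gives an upper bound on the fraction covered.

```python
from fractions import Fraction

# Round figures quoted in the text (not independently derived here).
ATOMS = 10**57      # atoms in the solar system
SECONDS = 10**17    # time available, in seconds
RATE = 10**14       # observations per atom per second
COINS = 500         # two-sided coins per tray

def sampled_fraction(atoms=ATOMS, seconds=SECONDS, rate=RATE, coins=COINS):
    """Upper bound on the fraction of the 2**coins space that can be observed.

    Assumes every observation is of a distinct configuration,
    which overstates the coverage of a real blind search.
    """
    samples = atoms * seconds * rate   # total observations: 10^88
    space = 2**coins                   # total configurations: 2^500
    return Fraction(samples, space)

frac = sampled_fraction()
print(f"configurations: {float(2**COINS):.3e}")   # about 3.27e150
print(f"fraction sampled: {float(frac):.2e}")     # on the order of 1e-63
```

Exact integers and `Fraction` are used so that the huge intermediate values are not rounded before the final conversion to a float for display.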

Of course, we know that, routinely, designers create FSCO/I-rich entities all around us.

And, such can be quantified using a metric related to the thinking of Dr Dembski:

Chi_500 = I*S – 500, bits beyond the solar system threshold

I is any reasonable information carrying capacity metric, most easily understood as the length of a structured sequence of Yes/No questions that must be answered to specify a relevant configuration, in bits. (An ASCII character has seven bits, the eighth in the byte storing it is used as a parity check.)

S is a dummy variable that defaults to zero, indicating that chance is the default explanation for highly contingent outcomes. (Mechanical necessity is not a good explanation for such: guavas or apples reliably initially fall at 9.8 N/kg when they drop from a tree, and the Moon reliably goes around the Earth with a centripetal acceleration explainable on the same flux of gravitational effect, attenuated by additional distance per the rule on the area of spherical surfaces of radius r . . . )

Thus, normally, Chi_500 is locked to – 500. When there is good observably verifiable reason to identify a configuration as functionally specific, e.g. the Shimano X-SHIP gear train . . .

. . . then S goes to 1. The chain of structured Y/N questions (or the equivalent) then kicks in, and once it passes 500 bits, we have good needle in haystack search and inductive reason to see that a positive Chi_500 is a reliable index of design as cause. So, we come to the substantially equivalent per aspect design inference flowchart/filter:

That is, we have an operational process, linked to standard patterns of scientific, inductive reasoning, that allows us to reliably infer design on signs such as FSCO/I.
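To make the Chi_500 expression concrete, here is a minimal sketch of it as code. The function names `chi_500` and `ascii_capacity_bits` are invented for this illustration, and the ASCII helper simply applies the seven-bits-per-character figure given above as one possible choice for I. The judgement that sets S to 1 has to come from observation; the code only takes it as an input.

```python
def ascii_capacity_bits(text: str) -> int:
    """Information-carrying capacity of a string at 7 bits per ASCII character."""
    return 7 * len(text)

def chi_500(info_bits: float, functionally_specific: bool,
            threshold: int = 500) -> float:
    """Chi_500 = I*S - 500, in bits beyond the solar system threshold.

    info_bits: the capacity metric I, in bits.
    functionally_specific: the dummy variable S (False -> 0, True -> 1),
        supplied by the observer, not computed here.
    """
    s = 1 if functionally_specific else 0
    return info_bits * s - threshold

# With S = 0 the result is locked at -500, whatever the value of I.
print(chi_500(10**6, functionally_specific=False))   # -500

# 72 ASCII characters carry 7 * 72 = 504 bits of capacity.
message = "x" * 72
print(chi_500(ascii_capacity_bits(message), functionally_specific=True))  # 4
```

On this sketch, a functionally specific string of 72 or more ASCII characters is the shortest that crosses the 500-bit threshold, since 71 characters give only 497 bits.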

Where does this point?

Let’s try the ribosome, without which, there would be no effective cell based life:

This molecular nanomachine in the living cell manufactures proteins based on control mRNA tapes, using tRNA taxicab-position arm devices with CCA universal coupler end effectors, loaded with amino acids through “loading enzymes.”

For a more complete picture:

The control tape, coded, algorithmic process at work is entirely and relevantly similar to the old punched paper tape readers used in older computers and macro-scale NC machines (except that instead of holes, prong height is used similar to those in a Yale-type key-lock system):

For cell based life to be possible, that system had to have been there right from the beginning, OOL.

The remote past of OOL is unobservable, but we can observe its traces. Sound scientific reasoning is to identify causal factors known to be capable of such effects and to explain in light of such.

Here, that includes FSCO/I and in particular, digitally coded, algorithmic functionally specific complex information that easily rises above 500 – 1,000 bits, just for the protein tapes for many proteins. In aggregate, it is vastly beyond that.

This focuses the FSCO/I challenge on OOL.

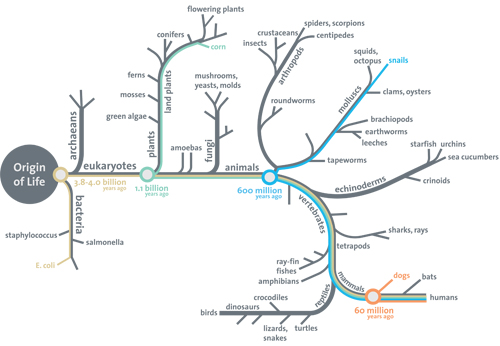

But, we also must face the challenge for origin of major body plans, here using the Smithsonian diagram I used in my still not adequately answered longstanding open challenge to explain OOL and origin of body plans on well grounded observations, using Darwinist or similar mechanisms:

Not only must origin of cells be accounted for but the specialisation of cell types into the tissues used in organs, multiplied by the co-ordinated functionality of the whole network of organs etc that makes a functional viable organism, on blind chance and mechanical necessity. This, in the gamut of — let’s be generous — our solar system. Just the genomes for body plans, on a back of the envelope calculation or a survey of genome sizes, will be 10 – 100+ mn bases at about 2 bits per base.

Where also there are thousands of basic protein groupings, deeply isolated in islands in amino acid string space. (Which mounts up at 4.32 bits per amino acid in the string, as there are basically 20 possibilities. [And yes, there are oddball exceptions, they do not materially affect this point.])
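The per-symbol figures used here (2 bits per base, 4.32 bits per amino acid) are just log2 of the number of alternatives at each position, and can be sketched as follows. The helper names are hypothetical, and the numbers are raw carrying capacities assuming four equally available bases and twenty equally available amino acids, setting aside the oddball exceptions noted above.

```python
import math

BITS_PER_BASE = math.log2(4)          # 4 possible bases  -> 2.0 bits
BITS_PER_AMINO_ACID = math.log2(20)   # 20 possible acids -> about 4.32 bits

def genome_capacity_bits(bases: int) -> float:
    """Raw carrying capacity of a genome of the given length, in bits."""
    return bases * BITS_PER_BASE

def residues_to_exceed(threshold_bits: int) -> int:
    """Smallest amino acid chain length whose capacity exceeds the threshold."""
    return math.floor(threshold_bits / BITS_PER_AMINO_ACID) + 1

print(f"bits per amino acid: {BITS_PER_AMINO_ACID:.2f}")
print(f"10 mn bases:  {genome_capacity_bits(10_000_000):,.0f} bits")
print(f"100 mn bases: {genome_capacity_bits(100_000_000):,.0f} bits")
print(f"residues to pass 500 bits:   {residues_to_exceed(500)}")
print(f"residues to pass 1,000 bits: {residues_to_exceed(1000)}")
```

So a genome of 10 to 100 mn bases corresponds to 20 to 200 mn bits of capacity, and a protein chain passes the 500-bit mark at 116 residues and the 1,000-bit mark at 232.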

Mission impossible.

Why, then, is the blind chance and mechanical necessity view the dominant school of thought, in the teeth of the evidence on FSCO/I and its known causal origin?

Let us hear eminent Harvard biologist Richard Lewontin, in that well-known NYRB review of Sagan’s last book in Jan 1997, again:

. . . to put a correct view of the universe into people’s heads we must first get an incorrect view out . . . the problem is to get them to reject irrational and supernatural explanations of the world, the demons that exist only in their imaginations, and to accept a social and intellectual apparatus, Science, as the only begetter of truth [[–> NB: this is a knowledge claim about knowledge and its possible sources, i.e. it is a claim in philosophy not science; it is thus self-refuting]. . . .

It is not that the methods and institutions of science somehow compel us to accept a material explanation of the phenomenal world, but, on the contrary, that we are forced by our a priori adherence to material causes [[–> a major begging of the question . . . ] to create an apparatus of investigation and a set of concepts that produce material explanations, no matter how counter-intuitive, no matter how mystifying to the uninitiated. Moreover, that materialism is absolute[[–> i.e. here we see the fallacious, indoctrinated, ideological, closed mind . . . ], for we cannot allow a Divine Foot in the door.

[From: “Billions and Billions of Demons,” NYRB, January 9, 1997. Bold emphasis and notes added. In case this is likely to be dismissed as “quote-mining” — tantamount to an accusation of lying, kindly cf the fuller cite, notes and links as I have linked in introducing this cite.]

Sobering.

And in case that is seen as unrepresentative and idiosyncratic, here is the official position of the US National Science Teachers Association Board, in 2000:

The principal product of science is knowledge in the form of naturalistic concepts and the laws and theories related to those concepts . . . .

Although no single universal step-by-step scientific method captures the complexity of doing science, a number of shared values and perspectives characterize a scientific approach to understanding nature. Among these are a demand for naturalistic explanations supported by empirical evidence that are, at least in principle, testable against the natural world. [[–> Note the imposition of a priori evolutionary materialism right into the definition of science, twisting science into ideology and education into indoctrination; where also NSTA and NAS threatened the parents and students of Kansas with ostracism for failing to toe this line, across the 2000’s] Other shared elements include observations, rational argument, inference, skepticism, peer review and replicability of work . . . .

Science, by definition, is limited to naturalistic methods and explanations [[–> That ideological definition again] and, as such, is precluded from using supernatural elements [[–> A strawman tactic and red herring also, as the material issue is the contrast between nature and art on empirically reliable signs, as has been put on the table since Plato in The Laws, Bk X 2350 years ago] in the production of scientific knowledge. [[NSTA, Board of Directors, July 2000. Emphases and notes added.]

Seminal ID thinker Philip Johnson’s retort to such shenanigans, written in reply to Lewontin, is apt:

What will be the predictable Darwinist response?

To refuse to acknowledge the evidence, relevance and reasoning concerning FSCO/I.

Even, at the price of clinging to absurdities . . . and recall this case is motivated in part by the retort to a truckload of sand dumped in a pile, that this is designed. Hence the use of a very different pile of “dirt” that is unquestionably not made by a process of design, and is not manifesting FSCO/I.

It is time for fresh thinking and a fresh start. END

PS: ES provides a handy case in point (there are a great many extending over the course of literally years or going back to Thaxton et al 1984 and beyond, decades . . . ) at 83 in the desperate distractions DDD no 6 thread:

Notice, the highlighted refusal to acknowledge the blatant difference between the two piles, one a simple dumped pile of sand, and two, a sand castle? Above, I of course put in a volcano dome to address the distraction that a sand pile dumped from a truck is designed.

Phinehas

This is a much better question. The answer is that my way to describe the difference is to say it’s a difference in degree, incidental to the substance. This means it’s not an essential difference and there’s [no point at which the difference in degree] implies a new quality to the substance. It’s a purely quantitative difference throughout. [–> Correction added as per no 84]

Let’s say there’s a body of water. Water consists of drops. How many drops do you need to take away until it ceases to be water and only a drop is left? This is the classical paradox of the heap. Drops and water are really a continuum of the same thing, but different scales of the continuum are verbally termed differently, just like cold and warm are really both temperature. The difference is of degree, of quantity, not of quality.

Now, you presented me with two images of sand heaps (Mound of Dirt #1 and #2) and you said that there’s a difference that any child can see. Yes, any child can see it. However, this is equivalent to showing a sand particle to a child and you say

Claim #1: “This is a sand particle.”

and then you point to a large pile of sand and say

Claim #2: “This is a heap.”

and then you further say

Claim #3: “These two are obviously totally different things and everybody with eyes to see immediately knows the difference.”

You just might convince the child. But I am not a child. I am someone familiar with the paradox of the heap and I recognize how claim #3 cannot be taken too far without committing a logical fallacy. The difference between the sand particle and the heap is quantitative, not qualitative. The different words “drop” versus “water” or “sand particle” versus “heap” do not entail or imply a distinct substance or essence. The difference is merely verbal, incidental, inconsequential, only a matter of scale or degree, whereas the essential substance is the same. Therefore, when I say that the sand particle and the heap are essentially the same thing, and you reply that I am selectively hyperskeptical, then you will have gone overboard.

This is precisely the difference I see in your two images. There is no essential difference. The “obvious” difference of the shapes is a matter of degree, not of kind – both are heaps and both have shapes, and one heap can smoothly be re-shaped into the other. It’s analogous to a single body of water that assumes different shapes in different vessels – a huge difference in shapes of water, but no difference in the quality of water.

Of course I agree there’s a quantitative or incidental difference, but this is all I can say. If you say design implies a designer, then this applies equally to both heaps. Both heaps have some design. The simpler heap looks like cast off a truck, so it’s as man-designed as the sand castle. If it were a natural dune, then I’d say it’s designed by nature, which is also a common-sense phrase.