Our Physicist and Computer Scientist from Russia — and each element of that balance is very relevant — is back, with more. MOAR, in fact. This time, he tackles the “terror-fitted depths” of thermodynamics and biosemiotics. (NB: Those needing a backgrounder may find an old UD post here and a more recent one here, helpful.)

More rich food for thought for the science-hungry masses, red hot off the press:

_________________

>>On the Second Law of Thermodynamics in the context of the origin of life

Rudolf Clausius (1822-1888)

This note was motivated by my discussions with Russian interlocutors. One of the UD readers here asked me to produce a summary of those discussions, which I am happy to do now. I hope it will be interesting to all UD readers.

Unfortunately, one sometimes sees erroneous interpretations of thermodynamic results, especially those concerning the famous second law. In discussions with my Russian readers I encountered somewhat forthright statements about the alleged sufficiency of thermodynamics itself in proving the existence of God, and even claims that the concept of thermodynamic fluctuations of macrostate is all wrong. One anonymous reader has put it like this: thermodynamics has been polluted with fluctuations.

To show that such claims are incorrect, I briefly quote the various open sources on thermodynamics and elaborate on them a bit.

At the same time, I will also try to show that argumentation from thermodynamics alone is insufficient to demonstrate the incorrectness of a materialist view on the origin of life (a view that is necessarily reductionist). Even though I will focus my attention only on thermodynamics, I believe this is true for any exclusively physicalistic view on life as motion of the particles of matter, to put it in the words of Howard Pattee. I think that in order to succeed in showing the inadequacy of reductionist views regarding the origin of life, one should take a system level view. Only at a system level can one fully appreciate the irreducible complexity of life, as I attempt to argue below.

While in the first part of my note I expose some frequent errors in interpreting thermodynamics mainly by theists, its second part is aimed at dismantling some materialist objections. I do not claim to be an expert in thermodynamics, so any constructive criticism of my views here is welcome.

I apologize in advance that some of my links are to Russian sources.

[–> actually, this is an asset here at UD; the ID debate in the Russian language zone of cyberspace demonstrates beyond responsible doubt, that the issue is not just an over-spilling of American politics post some 1980’s USSC decision. NCSE, et al, kindly, take due note; KF]

They are there mainly because they exist in the original Russian version of this note.

Classical thermodynamics and fluctuations of state

Let us start with a quote from here (translation mine):

In contrast to thermodynamics, statistical mechanics deals with a specific class of processes — fluctuations whereby a system changes its state from a more probable to a less probable, which consequently reduces its entropy. The presence of fluctuations demonstrates that the second law which states that in an isolated thermodynamic system entropy does not reduce, holds only statistically, i.e. on average over large enough intervals of time.

So? Of course, the second law holds. Eventually all entropic differentials in an isolated thermodynamic system will be nullified over time and its entropy will reach its maximum, in full accordance with the second law. However, there are two things I wanted to comment on.

1. Extrapolating the results of classical thermodynamics to the entire universe is unwarranted.

We cannot really extend the logic of classical thermodynamics to the entire universe (see here and here). Rudolf Clausius arrived at the heat death of the universe from the specific assumptions of his theory, together with the assumption that the universe is an isolated thermodynamic system. However, the universe is not a thermodynamic system at all, since assumptions such as the existence of thermodynamic equilibrium, the additivity of energy and ergodicity do not hold for it as a whole (see here for details). Contemporary physics regards the heat death of the universe as only a hypothesis, one that is valid only within the classical theory due to Clausius. But we know today that thermodynamic systems may depart very far from equilibrium, so far that applying the classical theory to such processes is not valid.

[A bit of context may help, here the Hertzsprung-Russell view of H-rich gas balls:]

[This is generally seen as a context in which based on the balance of gravity and radiation pressure stars form, unfold across a life cycle and die, some with a supernova bang, others with a white dwarf whimper. So, across time the observed cosmos moves to ever more degradation of energy, plausibly — but not unquestionably — ending in a heat death scenario:]

[Issues over fluctuations and cycles etc as ES raises below then come to bear.]

2. Even though the second law is universal and fundamental, local short-term fluctuations of state do occur since the second law is formulated statistically for large enough numbers of molecules and long enough periods of time.

As one textbook stated, the second law does not only admit fluctuations but in fact predicts them.

Small local fluctuations of macrostate are consequently a reality, which does not go contrary to the universality of the second law. For example, we can observe fluctuations of temperature, density or entropy. This is a manifestation of the law of large numbers. Locally, there can and will be departures from the mathematical expectations but on the whole the law of large numbers levels off all such local departures from the theoretical mean. As a result, the mean for a sample will tend to its theoretical value as you make it more and more representative. The same happens with entropic fluctuations. Even in isolated thermodynamic systems entropy at its max value for the whole system may fluctuate locally. When we integrate over the entire volume, the influence of those fluctuations is found to be negligible while the entropy on the whole behaves in accordance with the second law.
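A minimal Python sketch can make the law-of-large-numbers point concrete. It is an illustration only, under an assumed toy model that is not taken from the sources quoted above: N non-interacting particles, each equally likely to sit in the left or the right half of a box. The function name `relative_fluctuation` and its parameters are hypothetical. In this model the relative spread of the left-half count should shrink roughly as 1/sqrt(N).

```python
import math
import random
import statistics


def relative_fluctuation(n_particles, trials=500, seed=0):
    """Estimate std/mean of the number of particles found in the left half.

    Toy model (an assumption for illustration): each particle is in the
    left half independently with probability 1/2.
    """
    rng = random.Random(seed)
    # One random bit per particle: 1 means "left half", 0 means "right half".
    # Counting the 1-bits gives a Binomial(n_particles, 1/2) sample.
    counts = [
        bin(rng.getrandbits(n_particles)).count("1") for _ in range(trials)
    ]
    return statistics.pstdev(counts) / statistics.mean(counts)


if __name__ == "__main__":
    for n in (100, 1600, 25600):
        simulated = relative_fluctuation(n)
        predicted = 1 / math.sqrt(n)  # theoretical value for this toy model
        print(f"N={n:6d}  simulated={simulated:.4f}  1/sqrt(N)={predicted:.4f}")
```

Under the same scaling, a mole of particles (N about 6.02 * 10^23) would show a relative fluctuation on the order of 10^-12, which is why such local departures are negligible once we look at the system as a whole.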

Here lies one of the frequent misunderstandings between us and materialists, at least in the Russian segment of the internet. They are flagging up frequent incorrect interpretations of the second law, and rightly so. Unfortunately, it must be stated that based on thermodynamics alone you cannot say that their reductionist claim is wrong. E.g. we cannot say that evolution as such is contrary to the second law.

So, can there be fluctuations? Yes! But materialists go further and consider life to be such a fluctuation. It is persistent due to replication but its persistence will eventually deteriorate. Yes, materialists say, there will be a time when all life will cease to exist (i.e. when there is no Sun around anymore). That is all in fine accord with the second law. And yet their conclusion about life is fallacious. However, the right argumentation with which we can prove them wrong is not found at the level of thermodynamics.

Life viewed at a system level

The bad news is that in order to get our heads around the origin of life at least conceptually, physics is not enough. If I pierce a computer network cable with voltage measuring equipment, I will, of course, detect voltage jumps. What I will not be able to do, without bringing to the table all that the computer network is doing, is understand the meaning of these voltage jumps. Without the system level knowledge, the computer will remain a black box for me.

Materialists are wrong not in saying state fluctuations occur. They (at least those of them who subscribe to the reductionist picture of life as exclusively chemistry) are wrong in considering life’s origin a spontaneous fluctuation. They get inside the cell and say: look, it is all chemistry! Of course, who said it isn’t chemistry?! Living cells are material objects! They, as any other material body, consist of particles of matter subject to natural regularities.

But the point is that life is not only physics or chemistry! Life is about functional organization (not mere order) whereby its parts are integrated into a whole and act in such a way that the whole persists long enough without disintegration by maintaining its autonomy, metabolizing, replicating and responding to stimuli. In order to see that materialists commit a fallacy (or knowingly play word games) it is necessary to walk up a level of scientific abstraction and examine what kind of ‘fluctuation’ life is.

[Cf. a brief discussion of fluctuations, here. This shows a basic result (bear in mind the Avogadro Number is 6.02 *10^23 molecular scale particles), which we may clip per fair use:]

It turns out that this fluctuation is indeed special even to the point of putting away as inadequate the language of statistical mechanics! Here is why. In order to act against the tendency of entropy to increase, life must be organized so that it replicates with minimal information losses. This means, according to Shannon’s theorem for a noisy channel, that, provided the channel capacity is not exceeded during transfer, minimal losses are achieved when information is encoded using a discrete digital code: the genetic code meets that requirement!
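Shannon's noisy-channel result can be illustrated with the simplest textbook case. The sketch below assumes a binary symmetric channel, in which each transmitted bit is flipped independently with probability p. That channel model and the function names are assumptions chosen for illustration; this is not a model of DNA replication. The capacity of such a channel is C = 1 - H2(p) bits per channel use, where H2 is the binary entropy, and the theorem says that reliable transfer is possible at any rate below C.

```python
import math


def binary_entropy(p):
    """Binary entropy H2(p) in bits."""
    if p in (0.0, 1.0):
        return 0.0  # limit value: a certain outcome carries no uncertainty
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


def bsc_capacity(p):
    """Capacity (bits per use) of a binary symmetric channel with flip probability p."""
    return 1.0 - binary_entropy(p)


if __name__ == "__main__":
    for p in (0.0, 0.01, 0.11, 0.5):
        print(f"flip probability {p:.2f} -> capacity {bsc_capacity(p):.4f} bits/use")
```

A noiseless channel (p = 0) carries one full bit per use, while at p = 0.5 the output is independent of the input and the capacity drops to zero.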

[Background:]

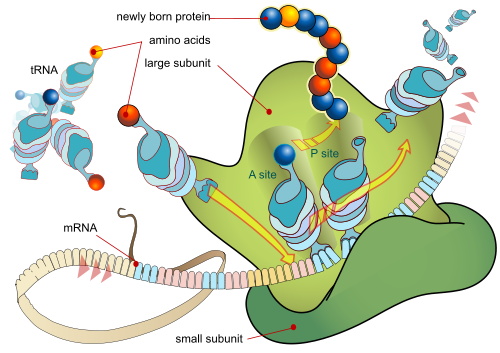

[Protein Synthesis:]

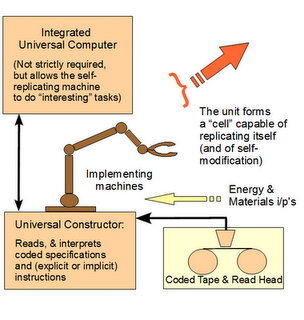

Furthermore, using code means this ‘fluctuation’ should not only write its own description into memory but must also be able to encode memory itself.

In other words, to replicate, a non-homogeneous system (in contrast to homogeneous systems such as crystals) should be able to read/write its own symbolic description from/to memory. This means that a living cell should have a system for information translation. What a spontaneous fluctuation! Clever indeed…

Fluctuations do not build adapters!

As [longtime UD commenter and now occasional contributor] Mung has aptly put it once (alluding to Francis Crick’s famous phrase ‘life is a frozen accident’), accidents do not build adapters! Mung was referring to the adaptor hypothesis that was formulated by Crick in 1955 and so brilliantly validated afterwards. During genetic code translation, life uses adaptors responsible for maintaining unambiguous correspondence between the nucleotide instructions written on messenger RNAs and their amino acid meanings expressed in synthesized protein macromolecules.

Imagine loads of nanometer-scale plugs being inserted in their respective sockets at a high rate (the rate is variable with a max at about 20 amino acid residues attached to a polypeptide per second) such that the ‘wrong’ amino acid which does not correspond to the RNA codon being processed will not be inserted into the protein molecule.
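The token-to-referent correspondence described above can be sketched as a lookup table. The Python below is a deliberately tiny illustration: `CODON_TABLE` holds only a small subset of the standard genetic code, and the function name `translate` is hypothetical. It reads an mRNA string three letters at a time and returns the amino acids the codons stand for, stopping at a stop codon.

```python
# A small subset of the standard genetic code (mRNA codon -> amino acid).
# The full table has 64 codons; only a few are listed here for illustration.
CODON_TABLE = {
    "AUG": "Met",  # also serves as the start codon
    "UUU": "Phe", "UUC": "Phe",
    "GGU": "Gly", "GGC": "Gly", "GGA": "Gly", "GGG": "Gly",
    "UGG": "Trp",
    "AAA": "Lys", "AAG": "Lys",
    "UAA": "STOP", "UAG": "STOP", "UGA": "STOP",
}


def translate(mrna):
    """Translate an mRNA string codon by codon until a stop codon is met.

    Raises KeyError for any codon missing from the partial table above.
    """
    protein = []
    for i in range(0, len(mrna) - 2, 3):
        codon = mrna[i:i + 3]
        residue = CODON_TABLE[codon]
        if residue == "STOP":
            break
        protein.append(residue)
    return protein


if __name__ == "__main__":
    print(translate("AUGUUUGGCUAA"))  # ['Met', 'Phe', 'Gly']
```

Note that the table is pure bookkeeping: nothing in the code derives "Phe" from the letters "UUU". In the cell, that mapping is maintained physically by the adaptors.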

This means only one thing: engineering foresight must have been at play here apart from mere regularities of nature. Indeed, it was necessary to make sure that the formal correspondence, also known as the genetic code, between a token (mRNA codon) and its referent amino acid, should be maintained. This correspondence carries absolutely no bias towards or against any physical aspect of its implementation! The only possibility for this to have happened is by intelligence. No other way! Non-living matter is void of any foresight or decision making capabilities and it, consequently, could not be accountable for the organization of life!

In the whole of the observed universe things similar to life are doable only by human intelligence. Examples of sign processing systems are limited to: mathematics, art, languages, ciphers, motorway code and the other human-made information processing systems, on the one hand, and … biological codes, on the other. Nothing else…

Consequently, we can hypothesize that biological codes also have intelligent origin.

Even today the entire human technology collectively cannot match the grand design and implementation of life. So far, we have been able to reverse-engineer some parts of this true piece of art and wonderfully organized artifact.

To create life, from the point of view of systems theory and information theory, it was necessary to organize the complex {code+protocol+translator}, – the whole of it and at once, – simply because code without its complementary translator is useless rubbish and, likewise, a translator without code is a meaningless pile of junk. Furthermore, a protocol is a set of rules, i.e. a non-physical thing, a logical correspondence of material entities that have no physical or chemical attraction or bias of any sort to one another. How could that arise by purely physicalistic means?! Total nonsense!

No physical fluctuation of macrostate – of entropy, density, pressure, temperature – can achieve it. The very first living organism was already not reducible to its constituents. A gradual path for its own assembly starting at a spontaneous fluctuation of state is non-existent in this world. It is a myth. Nature only permits the creation of information processing systems, being itself indifferent to information processing; indifferent in the same sense as a spherical mass is at equilibrium on a horizontal plane without friction.

If we take a look at how life is organized, we shall see that its organization is purposefully directed to counteract entropic increases. Death is the unavoidable end of this wrestling as far as an individual living organism is concerned. Before dying though an organism passes on life to the next generation which is organized in the same way. «The fight against the second law» is realized as replication. Although every new organism and even the replication mechanism itself are subject to degradation over time (the latter at a much slower rate than the former), the heart of life, so to speak, is in replication!

[Cf. the von Neumann kinematic self replicator (c. 1948), vNSR:]

[Also, Mignea on cell based self replication (2012):]

Nothing of the sort can be observed in inanimate nature as a complex. Various individual elements of this complex are observed, such as crystal growth/replication for instance. However, for a crystal to grow, information translation and codes are not necessary. Crystals grow mechanically, as matrix bulk-copying of layers upon layers of lattice, in line with the minimum total potential energy principle (see my previous OP). In contrast, the genetic code cannot be explained solely by this principle since the essence of translation is in the processing of tokens that only evoke physical effects without determining what these effects should be! Tokens impose boundary conditions on the system dynamics.

As a matter of fact, biosemiotics, the discipline that describes sign processing in biological systems, views a token, not a gene, as a unit of life. According to biosemiotics (cf. here and here), life is physics coupled with specific symbolic boundary conditions, which include organized behaviour for working with symbolic memory.

This is where our materialist interlocutors are in error or decidedly playing word games.

Some of them, who are educated enough to appreciate the predicament for their worldview, find nothing better than questioning the objectivity of information as a scientifically detectable phenomenon. That is understandable: if you think of this, they have no other option. Playing the fool is a lot more comfortable than having to acknowledge the obvious i.e. that life has intelligent origin!

So, non-living matter is missing the important ingredient that is key for the organization of life: it is absolutely void of any information translation. That must be the emphasis in our discussions with naturalists. Unfortunately, from the point of view of mere thermodynamics, life is indistinguishable from non-life: in both there are physical interactions of particles; in both non-living and living systems energy is spent and entropy tends to a maximum. After all, both life and non-life use the same chemical elements from the same periodic table.>>

_________________

As promised, rich food for thought.

Let us now discuss what our Russian colleagues have to say. END