A friend writes to say that nonsensical materialism is now being marketed to engineers, via IEEE, the largest professional engineering society in the world, with over 365 000 members.

This article, “I, Rodney Brooks, am a robot”, appeared in IEEE Spectrum, the magazine that goes to all members:

I am a machine. So are you.

Of all the hypotheses I’ve held during my 30-year career, this one in particular has been central to my research in robotics and artificial intelligence. I, you, our family, friends, and dogs—we all are machines. We are really sophisticated machines made up of billions and billions of biomolecules that interact according to well-defined, though not completely known, rules deriving from physics and chemistry. The biomolecular interactions taking place inside our heads give rise to our intellect, our feelings, our sense of self.

Accepting this hypothesis opens up a remarkable possibility. If we really are machines and if—this is a big if—we learn the rules governing our brains, then in principle there’s no reason why we shouldn’t be able to replicate those rules in, say, silicon and steel. I believe our creation would exhibit genuine human-level intelligence, emotions, and even consciousness.

There is no single, simple set of rules that governs the operations of the human brain or the mind that inhabits it.

It would make as much sense to say, “I am a tree” as “I am a robot.” In some ways, more. Trees are life forms, like humans. There are at least some qualities that we share with trees: we need water, nutrients, and oxygen, and we grow, reproduce, and die. We have roots and branches. And the older we are, the harder it is to move us without excessive damage.

Still, we are not trees. And we certainly are not robots.

The fact that we can say “I am” anything at all, or “I am not” that thing, certainly apprises us that we are not trees or robots. Philosophers call it the “hard problem” of consciousness: the sense of self.

As Mario and I pointed out in The Spiritual Brain, the “computer” theory of how the human mind works is badly in need of an early retirement.

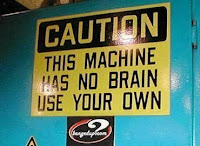

Note: I don’t know where the sign is from, but am told it is somewhere in Britain.

Also from The Mindful Hack

How not to study science …

God is not dead yet – but some haven’t gotten the memo

And from Colliding Universes,

Berlinski: Creation of everything out of nothing – a clinical level of self-delusion?

And what if the Large Hadron Collider doesn’t find the Higgs boson … ? Philosophy time!

Philosopher: God is not dead, and physics arguments are one of the reasons

Stephen Hawking, miffed over science funding cuts, to move to Ontario, Canada?

And from the Post-Darwinist,

When people laugh, fascists fear for their livelihoods

God is not dead yet, and in fact …

I must reserve a ticket for the Canuck Comics’ rally for freedom

Why would Brazilians want to hear from a chemist who thinks there is design in nature?

Enron and Darwinism – a perfect fit?

Trying to understand intelligent design? I see a hatchet in your future.

Look, you have lots of reasons to avoid pulling the quackgrass this weekend. Pull some of it anyway. It gets uglier every time you look at it. The act of looking at it unpulled makes it uglier.
