(ID Foundations series so far: 1, 2, 3, 4)

In a current UD discussion thread, frequent commenter MarkF (who supports evolutionary materialism) has made the following general objection to the inference to design:

. . . my claim is not that ID is false. Just that is not falsifiable. On the other hand claims about specific designer(s)with known powers and motives are falsifiable and, in all cases that I know of, clearly false.

The objection is actually trivially correctable.

Not least because we (including MF) are designers who routinely leave behind empirically testable, reliable signs of design, such as posts on the UD blog in English that (thanks to the infinite monkeys “theorem,” as discussed in post no 4 in this series) are well beyond the credible reach of undirected chance and necessity on the gamut of the observed cosmos. For instance, the excerpt just above uses 210 7-bit ASCII characters, which specifies a configuration space of 128^210 ~ 3.27 * 10^442 possible character strings. The whole observable universe, acting as a search engine working at the fastest possible physical rate [10^45 states/s, for 10^80 atoms, for 10^25 s: 10^150 possible states], could not scan as much as 1 part in 10^290 of that.
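The arithmetic in that paragraph can be checked with a short sketch. It takes the figures exactly as stated above (a 128-symbol alphabet, 210 characters, and 10^45 states/s for 10^80 atoms over 10^25 s) as its assumptions; the variable names are mine, chosen only for illustration.

```python
import math

ALPHABET_SIZE = 128    # 7-bit ASCII, as stated in the text
EXCERPT_LENGTH = 210   # character count given in the text (not re-counted here)

# Work in log10 throughout; the raw numbers are too large to read comfortably.
log10_space = EXCERPT_LENGTH * math.log10(ALPHABET_SIZE)
exponent = math.floor(log10_space)
mantissa = 10 ** (log10_space - exponent)

# Search resources quoted in the text: 10^45 states/s * 10^80 atoms * 10^25 s.
log10_states = 45 + 80 + 25

# Largest fraction of the space those states could cover, as a power of ten.
log10_fraction = log10_states - log10_space

print(f"configuration space ~ {mantissa:.2f} * 10^{exponent}")
print(f"available states    = 10^{log10_states}")
print(f"fraction scanned   <= 10^{log10_fraction:.1f}")
```

The sketch only reproduces the counting; whether a blind search is the right model for a given case is a separate question that the code does not address.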

That is, the fraction of that space which any conceivable chance and necessity based search on the scope of our cosmos could sample very comfortably rounds down to a practical zero. But MF, as an intelligent and designing commenter, probably tossed the above sentences off in a minute or two.

That is why such functionally specific, complex organisation and associated information [FSCO/I] are credible, empirically testable and reliable signs of intelligent design.

But don’t take my word for it.

A second UD commenter, Acipenser (= s[t]urgeon), recently challenged BA 77 and this poster as follows, in the signs of scientism thread:

195: What does the Glasgow Coma scale measure? The mind or the body?

206: kairosfocus: What does the Glasgow Coma scale measure? Mind or Body?

This is a scale for measuring consciousness that, as the Wiki page notes, is “used by first aid, EMS, and doctors as being applicable to all acute medical and trauma patients.” That is, the scale tests for consciousness. And, as the verbal responsiveness test especially shows, the test is an example of where the inference to design is routinely used in an applied science context, often in literal life or death situations:

Fig. A: EMTs at work. Such paraprofessional medical personnel routinely test for the consciousness of patients by rating their capacities on eye, verbal and motor responsiveness, using the Glasgow Coma Scale, which is based on an inference to design as a characteristic behaviour of conscious intelligences. (Source: Wiki.)

In short, the Glasgow Coma Scale [GCS] is actually a case in point of the reliability and scientific credibility of the inference to design; even in life and death situations.

Why do I say that?

The easiest way to show that is to excerpt my response to Acipenser, at 210 (and continuing to 211) in the same thread:

Now, on the Glasgow scale, there may be a few surprises for you, as this is actually a case where applied science is routinely using a design inference. Now, a good first point of reference is Wiki:

Glasgow Coma Scale or GCS is a neurological scale that aims to give a reliable, objective way of recording the conscious state of a person for initial as well as subsequent assessment. A patient is assessed against the criteria of the scale, and the resulting points give a patient score between 3 (indicating deep unconsciousness) and either 14 (original scale) or 15 (the more widely used modified or revised scale).

GCS was initially used to assess level of consciousness after head injury, and the scale is now used by first aid, EMS, and doctors as being applicable to all acute medical and trauma patients. In hospitals it is also used in monitoring chronic patients in intensive care . . . .

The scale comprises three tests: eye [NB: 1 – 4, behaviourally anchored judgemental rating scale [BARS] applying the underlying Rasch rating model commonly used as a metric in many fields where a judgement or inference needs to be quantified, and familiar from the Likert type scale], verbal [1 – 5] and motor [1 – 6] responses. The three values separately as well as their sum are considered. The lowest possible GCS (the sum) is 3 (deep coma or death), while the highest is 15 (fully awake person).
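The scoring arithmetic in the Wiki description just quoted (three component ratings, summed to a total between 3 and 15) can be sketched as a small data structure. This is an illustration of the arithmetic only, not a clinical tool; the class and field names are hypothetical, and the ranges are the ones quoted above for the revised scale.

```python
from dataclasses import dataclass

# Component ranges as quoted above (revised scale).
RANGES = {"eye": (1, 4), "verbal": (1, 5), "motor": (1, 6)}


@dataclass(frozen=True)
class GcsAssessment:
    """One set of Glasgow Coma Scale ratings (hypothetical helper)."""
    eye: int
    verbal: int
    motor: int

    def __post_init__(self):
        # Reject any rating outside its quoted range.
        for name, (low, high) in RANGES.items():
            value = getattr(self, name)
            if not low <= value <= high:
                raise ValueError(f"{name} must be in {low}..{high}, got {value}")

    @property
    def total(self) -> int:
        # The GCS is the sum; the three parts are also reported separately.
        return self.eye + self.verbal + self.motor


lowest = GcsAssessment(eye=1, verbal=1, motor=1)
highest = GcsAssessment(eye=4, verbal=5, motor=6)
print(lowest.total, highest.total)
```

Keeping the three ratings as separate fields mirrors the remark in the quote that the values are considered separately as well as in sum.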

1 –> This scale is exercised by responsible, ethically obligated and educated medical and paramedical practitioners.

2 –> It is applied to embodied intelligent creatures who under normal circumstances will be alert, verbally responsive and able to move their bodies at will, and whose eye pupils will respond to light, and whose eye-tracks betray a major current focus of consciousness. This is background knowledge.

3 –> In this context, we may make reference to the Smith Model, assessing the embodied human being as a MIMO bio-cybernetic system, where mind is viewed as a higher order controller.

4 –> On the Smith MIMO cybernetic model, the head is a major sensory turret, and hosts the front-end I/O processor. Damage to the head implicating that processor would therefore directly affect both sensor and effector capacity.

5 –> So, implications of such damage for a primary sensor suite, the eyes, and two major sensor effector suites, the auditory and vocal systems, would serve as a pattern of signs that can be assessed on the warranted inference model introduced as a background for my ongoing ID Foundations series:

I: [si] –> O, on W

(I, an observer, note a pattern of signs, and infer an underlying objective condition or state of affairs or object, on a warrant)

6 –> So, here we see inference to signified from sign, on a warrant.

7 –> Going further, let us observe behaviour at levels 2, 4 and 5 on the verbal scale, where the issue is whether the subject is able to utter speech that is coherent, accurate and contextually responsive:

2: Incomprehensible sounds

4: Confused, disoriented

5: Oriented, converses normally

8 –> Speech, especially when set in context as language and as a situationally aware response of an intelligent person, is a strong indicator of functionally specific complex organisation, and indeed, encodes verbal, symbolic code in phonemes composed on rules of language. Speech expresses FSCI.

9 –> Thus, where situationally responsive and well composed speech is present, we have good reason to infer that we are dealing with a functional intelligence. And that is the normal condition of fully conscious human beings.

10 –> So, from the degree of falling short of such, we may infer to a breakdown in the relevant systems, here, related to head or CNS injury. With certain other signs, we may go on to infer worse than mere unconsciousness, death . . .

So, where it counts, with life and death at stake, here we find a design inference on FSCI routinely at work as a scientifically well-grounded basis for inferring conscious, deliberate behaviour. (Acipenser has not responded to these remarks to date in the original thread; s/he is invited to comment below.)

Similarly, as we saw for MF above, when we see a contextually responsive text string in threads at UD, we routinely and reliably infer that it is the product of an intelligent commenter, not a burst of lucky noise. The latter is logically and physically possible, but because functional sequence complexity is so rare in configuration spaces large enough to be relevant, we infer abductively, on inference to the best (current) explanation, that the most plausible [though of course falsifiable], empirically and analytically warranted cause of a post at UD is an intelligent poster. MF’s posts are evidence, on inference from sign to signified, that a certain intelligent poster, MF, exists and has acted.

Magic step: enter, stage left, the origins science question.

Q: What happens when we try to extend this inference on FSCO/I as a sign pointing to intelligent cause as the signified underlying state of affairs that best explains it, to the context of FSCO/I observed in say the living cell, and thus, the cause of the origin and body plan level diversification of life?

A: If we are to be consistent, we should be willing to accept the uniformity principle, that like causes like, when we have a tested, empirically credible sign. That is, if FSCO/I of at least 500 to 1,000 bits of information storage capacity, functioning in a specific fashion [not just any sequence will do; it has to fit a specification, an algorithm, the rules of a language, etc.], is a reliable sign of intelligence, we should be willing to accept that the sign of FSCO/I points to an act of design as its most credible cause. This holds unless we can show good reason that a designer is impossible in the situation, which would force us to infer to lucky noise, however otherwise improbable, as the best remaining explanation.

The process of protein translation in the living cell’s ribosome, is a good case in point:

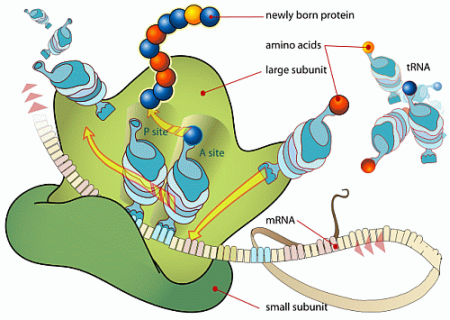

Fig. B: Protein translation in action [Also, cf a medically oriented survey here.] (Courtesy, Wikipedia)

Here, we see a ribosome in action, with the mRNA digitally coded tape triggering successive amino acid additions to the growing protein, as tRNA “taxi” molecules lock to the successive three-letter genetic code codons, and then serve as position-arm devices for the loaded AAs, which click together. This continues until a stop codon triggers cessation and release of the protein. That protein is then folded, perhaps agglomerated with other units, possibly activated and put to work in [or out of] the cell.

On a simple calculation, since each base in the mRNA has four possible states, the three-letter codon has 4^3 = 64 possible values, as are assigned in the standard D/RNA codon table (or its minor variants). 500 bases, or just under 170 codons, is beyond the 1,000 bit storage capacity threshold.
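The codon arithmetic above can be laid out as a short sketch. It counts raw storage capacity only (two bits per four-state base), which is the measure the paragraph uses; it says nothing by itself about function. The names and the helper function are hypothetical, introduced just for this illustration.

```python
import math

BASE_STATES = 4                            # four possible states per mRNA base
CODON_LENGTH = 3                           # bases per codon
BITS_PER_BASE = math.log2(BASE_STATES)     # 2 bits of capacity per base

codon_values = BASE_STATES ** CODON_LENGTH       # 4^3 possible codons
bits_per_codon = CODON_LENGTH * BITS_PER_BASE    # capacity of one codon


def storage_capacity_bits(n_bases: int) -> float:
    """Raw storage capacity, in bits, of a string of n_bases bases."""
    return n_bases * BITS_PER_BASE


THRESHOLD_BITS = 1000  # the upper threshold used in the text

# Smallest whole number of bases, then of codons, that reaches the threshold.
bases_needed = math.ceil(THRESHOLD_BITS / BITS_PER_BASE)
codons_needed = math.ceil(bases_needed / CODON_LENGTH)

print(codon_values, bits_per_codon)
print(bases_needed, codons_needed, storage_capacity_bits(bases_needed))
```

On these assumptions the threshold is reached at 500 bases, which corresponds to the "just under 170 codons" figure in the text once rounded up to whole codons.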

A typical protein is about 300 amino acids long (so is coded for by about 900 bases), and there are thousands of proteins in most life forms.

So, if we trust the sign of FSCO/I, we have good reason to infer that the living cell as we observe it is a designed entity.

That this is a serious point has long been recognised by origin of life investigators, as we may see from J S Wicken’s famous 1979 remark that has so often featured in this series:

‘Organized’ systems are to be carefully distinguished from ‘ordered’ systems. Neither kind of system is ‘random,’ but whereas ordered systems are generated according to simple algorithms [i.e. “simple” force laws acting on objects starting from arbitrary and commonplace initial conditions] and therefore lack complexity, organized systems must be assembled element by element according to an [originally . . . ] external ‘wiring diagram’ with a high information content . . . Organization, then, is functional complexity and carries information. It is non-random by design or by selection, rather than by the a priori necessity of crystallographic ‘order.’ [“The Generation of Complexity in Evolution: A Thermodynamic and Information-Theoretical Discussion,” Journal of Theoretical Biology, 77 (April 1979): p. 353, of pp. 349-65. (Emphases and notes added. Nb: “originally” is added to highlight that for self-replicating systems, the blueprint can be built-in. Also, since complex organisation can be analysed as a wiring network, and then reduced to a string of instructions specifying components, interfaces and connecting arcs, functionally specific complex organisation [FSCO] as discussed by Wicken is implicitly associated with functionally specific complex information [FSCI].)]

J S Wicken hoped that something like natural selection could explain the source of the functionally specific and complex organisation with associated information [FSCO/I] in life forms, but as post no 4 in this series shows, the infinite monkeys theorem blocks this as a practical possibility, once we recognise that a designer is possible at all in a given situation.

This brings up the root problem in current origins science. Adherents of evolutionary materialism — who happen to dominate relevant scientific, academic, educational, media and policy institutions in our day — are implicitly imposing an a priori materialism. [Cf. the NSTA stance as was discussed here, and underlying analysis here.]

So, we are back to Lewontin’s a priori imposition of evolutionary materialism:

It is not that the methods and institutions of science somehow compel us to accept a material explanation of the phenomenal world, but, on the contrary, that we are forced by our a priori adherence to material causes to create an apparatus of investigation and a set of concepts that produce material explanations, no matter how counter-intuitive, no matter how mystifying to the uninitiated. Moreover, that materialism is absolute, for we cannot allow a Divine Foot in the door.

[From: “Billions and Billions of Demons,” NYRB, January 9, 1997. Bold emphasis added. Cf discussion here.]

To this, leading ID thinker Philip Johnson’s rebuttal is apt:

So, in the end, we have to ask ourselves whether science is to be redefined as the best evolutionary materialist account of the origins and operations of the cosmos, from hydrogen to humans, or whether we will insist instead on freedom in science and science education.

For, science at its best is or should be:

. . . an unfettered (but ethically and intellectually responsible) progressive pursuit of the truth about our world, based on observation, experiment, analysis, theoretical modelling and informed, reasoned discussion.