Jeff Shallit charges Jonathan Wells with incompetence for claiming that duplicating a gene does not increase the available genetic information. To justify this charge, Shallit notes that a symbol string X has strictly less Kolmogorov information than the symbol string XX. Shallit, as a computational number theorist, seems stuck on a single definition of information. Fine, Kolmogorov’s theory implies that duplication leads to a (slight) increase in information. But there are lots and lots of other definitions of information out there. There’s Fisher information. There’s Shannon information. There’s Jack Szostak’s functional information.
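To see roughly how slight that increase is, here is a minimal sketch that uses compressed length as a crude stand-in for Kolmogorov information. Kolmogorov complexity itself is not computable, so this is only an upper-bound proxy, and the choice of `zlib`, the 1000-byte random string, and the helper name `compressed_len` are all assumptions made for illustration, not anything taken from Shallit's argument.

```python
import random
import zlib


def compressed_len(data: bytes) -> int:
    """Length in bytes of the zlib-compressed data (a rough proxy, not true Kolmogorov complexity)."""
    return len(zlib.compress(data, 9))


# Hypothetical example string X: 1000 pseudo-random bytes with a fixed seed for repeatability.
rng = random.Random(0)
x = bytes(rng.getrandbits(8) for _ in range(1000))

len_x = compressed_len(x)
len_xx = compressed_len(x + x)

# The second copy costs only a few back-references, so the gap is small next to len_x.
print("compressed length of X: ", len_x)
print("compressed length of XX:", len_xx)
print("extra bytes for the copy:", len_xx - len_x)
```

Under these assumptions, the compressor encodes the second copy as a handful of references back to the first, so XX costs only a little more than X.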

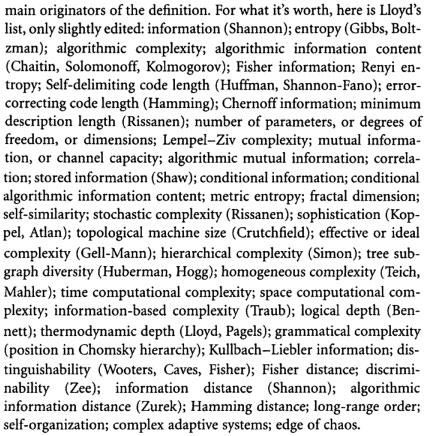

Information, when quantified, typically takes the form of a complexity measure. Seth Lloyd has catalogued numerous different types of complexity measures used by mathematicians, engineers, and scientists. Here are a few that he shared with John Horgan:

Note that one of the forms of information on this list is “algorithmic information content,” which is the one that Shallit attributes to Jonathan Wells. But with so many information/complexity measures floating around, why in the world does Shallit think that this is the one that Wells intended? A charitable interpretation of Wells’s remarks would suggest that he was thinking of nothing more complicated than this: duplicating X by X means that the probability of X given X is 1. That implies that all the uncertainty from X has been removed, which implies in turn that the information added by the copy is zero, since information can, in one incarnation, be taken as a measure of uncertainty.
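The uncertainty reading above can be sketched in a few lines, taking information to be the surprisal, the base-2 logarithm of 1/p. The function names `surprisal_bits` and `information_with_copy`, and the example probability of 1/1024, are hypothetical choices for illustration only.

```python
import math


def surprisal_bits(p: float) -> float:
    """Information, in bits, of an event with probability p, taken as log2(1/p)."""
    if not 0.0 < p <= 1.0:
        raise ValueError("probability must lie in (0, 1]")
    return math.log2(1.0 / p)


def information_with_copy(p_x: float, p_copy_given_x: float = 1.0) -> float:
    """Information of X together with its duplicate: I(X) + I(copy | X)."""
    return surprisal_bits(p_x) + surprisal_bits(p_copy_given_x)


# Hypothetical example: X has probability 1/1024, so it carries 10 bits.
p_x = 1.0 / 1024.0
print(surprisal_bits(p_x))         # information in X alone
print(surprisal_bits(1.0))         # information in the copy, given X
print(information_with_copy(p_x))  # information in X plus its copy
```

On this measure the duplicate contributes nothing, because an event with conditional probability 1 removes no further uncertainty.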

The latter type of information is the one I used in my book INTELLIGENT DESIGN: THE BRIDGE BETWEEN SCIENCE AND THEOLOGY, and it’s likely that Wells got it from me since I consider that very problem of duplication in it. So Shallit should probably be going after me. But if he were to do that, he should also go after my latest work on information theory, which can readily be found at www.evoinfo.org. Why doesn’t he? Several years back Shallit actually tracked down my home phone number and called me, urging that I ramp up my popular information theory arguments, develop them with full mathematical rigor, and get them published in the appropriate peer-reviewed literature. Well, I’m doing it. And what is Shallit doing? He prefers setting up strawmen and chopping them down.

At one point I was interested in Shallit’s critique, but no more. He has proven himself an extremely narrow computational number theorist who shoehorns everything mathematical that ID people do into his own little world of algorithmic information theory — like the drunk who’s looking for a coin under a street light because there’s no light down the street where he dropped the coin. Shallit was one of my teachers at the University of Chicago. I liked him at the time. But his addiction to substituting insult for civil discourse and sound argument has, it appears, severely constricted his intellectual reach.