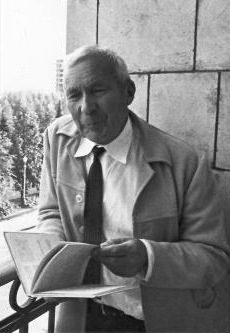

ID theorists make considerable use of probability calculations, and modern probability owes a lot to Andrei Kolmogorov. In a recent article in Nautilus, math history lecturer Slava Gerovitch tells us,

It is oddly appropriate that a chance event drove Kolmogorov into the arms of probability theory, which at the time was a maligned sub-discipline of mathematics. Pre-modern societies often viewed chance as an expression of the gods’ will; in ancient Egypt and classical Greece, throwing dice was seen as a reliable method of divination and fortune telling. By the early 19th century, European mathematicians had developed techniques for calculating odds, and distilled probability to the ratio of the number of favorable cases to the number of all equally probable cases. But this approach suffered from circularity—probability was defined in terms of equally probable cases—and only worked for systems with a finite number of possible outcomes. It could not handle countable infinity (such as a game of dice with infinitely many faces) or a continuum (such as a game with a spherical die, where each point on the sphere represents a possible outcome). Attempts to grapple with such situations produced contradictory results, and earned probability a bad reputation.

…

Kolmogorov drew analogies between probability and measure, resulting in five axioms, now usually formulated in six statements, that made probability a respectable part of mathematical analysis. The most basic notion of Kolmogorov’s theory was the “elementary event,” the outcome of a single experiment, like tossing a coin. All elementary events formed a “sample space,” the set of all possible outcomes. For lightning strikes in Massachusetts, for example, the sample space would consist of all the points in the state where lightning could hit. A random event was defined as a “measurable set” in a sample space, and the probability of a random event as the “measure” of this set. For example, the probability that lightning would hit Boston would depend only on the area (“measure”) of this city. Two events occurring simultaneously could be represented by the intersection of their measures; conditional probabilities by dividing measures; and the probability that one of two incompatible events would occur by adding measures (that is, the probability that either Boston or Cambridge would be hit by lightning equals the sum of their areas).
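To make the measure idea concrete, here is a minimal sketch in Python. It assumes a toy sample space: a 10 x 10 grid of equal cells standing in for the state, with made-up regions named `boston`, `cambridge`, and `east`. None of these shapes or sizes come from the article; they are purely illustrative. Probability is the share of cells an event covers, joint events are set intersections, conditional probability is a ratio of measures, and the probabilities of incompatible (disjoint) events add.

```python
from fractions import Fraction
from itertools import product

# Hypothetical sample space: a 10 x 10 grid of equal cells standing in for the state.
SAMPLE_SPACE = frozenset(product(range(10), range(10)))


def prob(event):
    """Probability of an event: its measure (cell count) over the measure of the whole space."""
    return Fraction(len(event & SAMPLE_SPACE), len(SAMPLE_SPACE))


def cond_prob(a, b):
    """P(a | b) = P(a and b) / P(b); only defined when P(b) > 0."""
    if prob(b) == 0:
        raise ValueError("conditioning event has probability zero")
    return prob(a & b) / prob(b)


# Made-up regions (assumptions for illustration only).
boston = frozenset(product(range(0, 3), range(0, 2)))     # 6 cells
cambridge = frozenset(product(range(3, 5), range(0, 2)))  # 4 cells, disjoint from boston
east = frozenset(product(range(0, 5), range(10)))         # 50 cells, contains both

# Incompatible events: the probability of "either" is the sum of the two probabilities.
either = prob(boston | cambridge)

# Conditioning on the eastern half rescales the measure of boston.
boston_given_east = cond_prob(boston, east)
```

The grid is only a finite stand-in. Kolmogorov's axioms are what allow the same bookkeeping to carry over to countably infinite and continuous sample spaces.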

…

However, in his chequered career, he seems to have sometimes lost the plot:

To measure the artistic merit of texts, Kolmogorov also employed a letter-guessing method to evaluate the entropy of natural language. In information theory, entropy is a measure of uncertainty or unpredictability, corresponding to the information content of a message: the more unpredictable the message, the more information it carries. Kolmogorov turned entropy into a measure of artistic originality. His group conducted a series of experiments, showing volunteers a fragment of Russian prose or poetry and asking them to guess the next letter, then the next, and so on. Kolmogorov privately remarked that, from the viewpoint of information theory, Soviet newspapers were less informative than poetry, since political discourse employed a large number of stock phrases and was highly predictable in its content. The verses of great poets, on the other hand, were much more difficult to predict, despite the strict limitations imposed on them by the poetic form. According to Kolmogorov, this was a mark of their originality. True art was unlikely, a quality probability theory could help to measure.
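For readers who want to see what "unpredictable" means numerically, here is a rough Python sketch. It is not Kolmogorov's letter-guessing experiment, which relied on human volunteers. Instead it assumes a much cruder stand-in: Shannon entropy estimated from character counts in the text itself. The function names `char_entropy` and `next_char_entropy` are invented for this illustration.

```python
import math
from collections import Counter


def char_entropy(text):
    """Shannon entropy in bits per character, from single-character frequencies."""
    total = len(text)
    if total == 0:
        return 0.0
    counts = Counter(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def next_char_entropy(text):
    """Conditional entropy H(next char | previous char) in bits, from adjacent pairs."""
    pairs = Counter(zip(text, text[1:]))  # counts of (previous, next) pairs
    firsts = Counter(text[:-1])           # how often each char appears as "previous"
    total = sum(pairs.values())
    if total == 0:
        return 0.0
    return -sum(
        (n / total) * math.log2(n / firsts[prev])
        for (prev, _nxt), n in pairs.items()
    )


# A "stock phrase" text: two letters, but the next one is always forced by the last.
stock = "abababab"
by_frequency = char_entropy(stock)          # looks like a coin flip per letter
given_previous = next_char_entropy(stock)   # but nothing is left to guess
```

The toy string shows the point about stock phrases: judged by letter frequencies alone it looks like one bit per character, yet once the previous letter is known there is nothing left to guess. Real text would need far longer contexts, and the human guessers in the experiment drew on meaning as well as spelling.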

Of course, it would work for propaganda; no surprises there. But somehow, this is just not the way to go about assessing literature, the work of intelligent agents.
