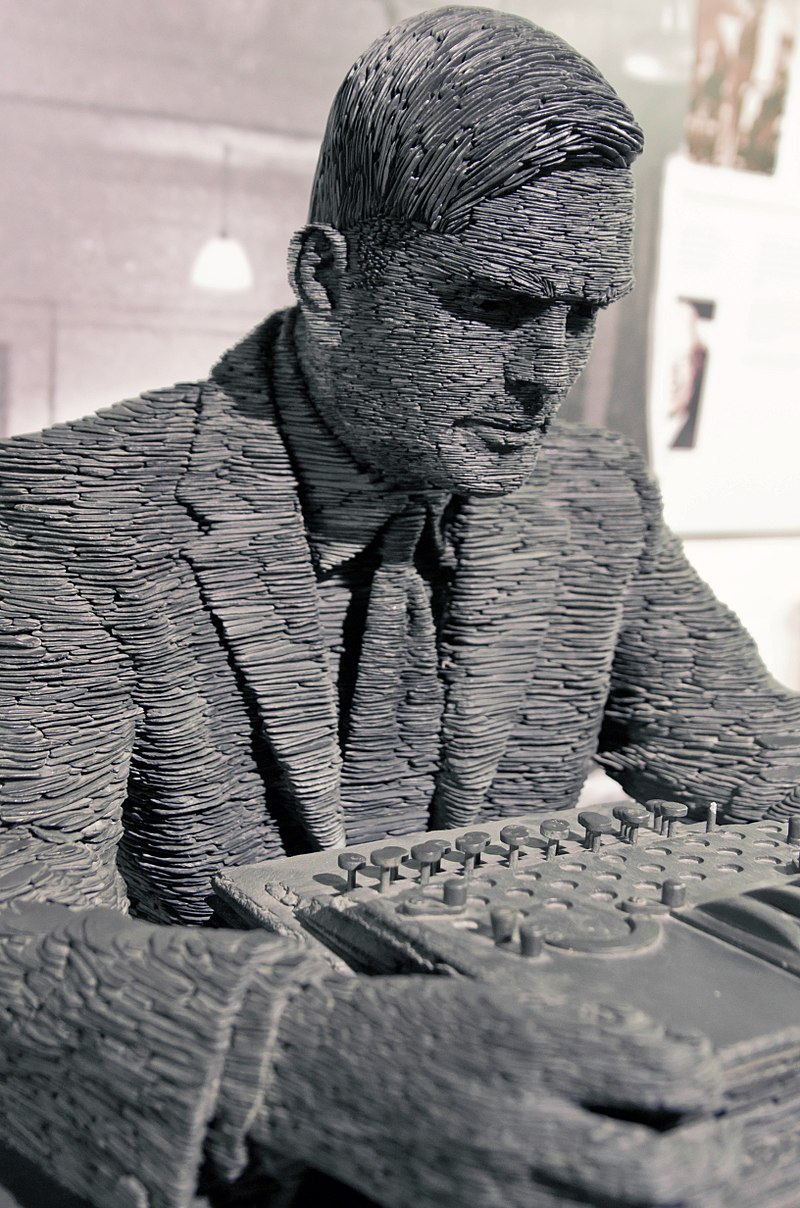

Antoine Taveneau, CC BY-SA 3.0

And Alan Turing tried to live with it:

Maybe that’s not the story you heard, but …

It is indeed a strange quirk in intellectual history that Turing seems to have flip-flopped on this issue, almost like a politician, yet no one seems to have noticed. Gödel, for his part, remained cagey about the strong version of his result, noting only that a disjunction must therefore be true: we are either inconsistent machines or minds. Gödel must surely have been jesting here, because inconsistency in the mathematical sense means that anything can be proven, which would make human thinking worthless; the moon might then be made of cheese. Gödel seems to have stopped short of believing in his own Platonism, or at least of proving that it must be true. But the evidence strongly suggests that viewing the mind as a big computer is wrong.

Science, at any rate, is something we do, looking in from the outside, as it were. Whether we are ultimately caught up inside the very systems we devise to describe and explain the world is a philosophical question. It seems to me, anyway, and indeed to the Turing of the 1930s, and most assuredly to Kurt Gödel the Platonist, that we sit outside the systems we make (the webs we weave, so to speak), always on the verge of discovering new truths. That belief makes sense of the evidence, to be sure. It has the additional salutary consequence of making sense of our own possibilities and future.

Analysis, “The mind can’t be just a computer” at Mind Matters News

See also: Human intelligence as a halting oracle (Eric Holloway) and Things exist that are unknowable (Robert J. Marks)