Not so fast, says economics professor Gary Smith: The failure of computer programs to recognize a rudimentary drawing of a wagon reveals the vast differences between artificial and human intelligence.

He reminds us that the label “artificial intelligence” is a misnomer. The ways in which computers process data are not at all the ways in which humans interact with the world.

When we were young, we might have seen a cardboard box with four sides and a bottom and been told that this is a box, so we created a single-member category. Then we learn that a box with a top is still a box. So is a box made out of wood or plastic. Some boxes are large; some are small. Some are empty; some are full. Some are red; some are white. Our mental box category is fluid and flexible.

Then we learn that a box with wheels and a handle is called a wagon, and we create a new mental category that encompasses the wagons we encounter of different sizes and colors, made of different materials, and used for different purposes.

Computers do nothing of the sort. They are shown millions of pictures of boxes, wagons, horses, stop signs, and more, and create mathematical representations of the pixels. Then, when shown a new image, they create a mathematical representation of these pixels and look for matches in their database. The process is brittle—sometimes yielding impressive matches, other times giving hilarious mismatches.

News, “Artificial Unintelligence” at Mind Matters News
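
For readers who want to see what "look for matches in their database" can mean in the simplest case, here is a toy sketch in Python. It is our own illustration, not Smith's code and not how any particular commercial vision system works: the tiny 6x6 "images", the three labels, and the helper names `distance`, `classify`, and `shift_right` are all made up for the example. It stores each picture as a grid of pixels and labels a new picture by finding the stored grid that differs in the fewest pixels.

```python
# Toy illustration only: hypothetical 6x6 black-and-white "images" stored as
# rows of "0"/"1" characters. Real systems are far more elaborate.

BOX = [
    "111100",
    "100100",
    "100100",
    "111100",
    "000000",
    "000000",
]

# A "wagon" here is just the box with two wheel pixels underneath (an assumption).
WAGON = BOX[:4] + ["100100", "000000"]

EMPTY = ["000000"] * 6

DATABASE = {"box": BOX, "wagon": WAGON, "empty": EMPTY}


def distance(a, b):
    """Count the pixels at which two equal-sized images differ."""
    return sum(
        pixel_a != pixel_b
        for row_a, row_b in zip(a, b)
        for pixel_a, pixel_b in zip(row_a, row_b)
    )


def classify(image, database):
    """Return the label of the stored image with the fewest differing pixels."""
    return min(database, key=lambda label: distance(image, database[label]))


def shift_right(image, k):
    """Slide the picture k pixels to the right (k >= 1), filling with blanks."""
    return ["0" * k + row[:-k] for row in image]


if __name__ == "__main__":
    print(classify(BOX, DATABASE))                  # the stored box itself
    print(classify(shift_right(BOX, 2), DATABASE))  # the same box, nudged over
```

In this toy setup the stored box is labeled "box", but the very same box moved two pixels to the right comes out closest to "empty", because pixel-by-pixel it overlaps the blank picture better than it overlaps the original. A child would not be fooled by a box that slid over a little; a pure pixel matcher can be.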

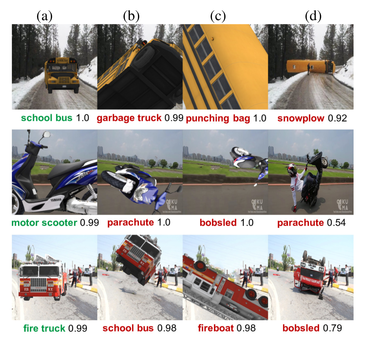

Sometimes the mismatches are not hilarious. Here are some attempted identifications of a school bus:

It’s not as though bigger computers can simply solve this problem. We can refine machine vision systems with more and more pictures, but we can’t teach the machine what humans know naturally.

See also: Six limitations of artificial intelligence as we know it. You’d better hope it doesn’t run your life, as Robert J. Marks explains to Larry Linenschmidt.