
Over at Why Evolution Is True, Professor Jerry Coyne is gleefully celebrating the impending demise of the Discovery Institute and of Intelligent Design. But before he pops the champagne, I wonder whether he would care to answer two questions about remarks he made on Casey Luskin's recent announcement that he would be leaving the Discovery Institute to further his studies.

1. Professor Coyne, you wrote that “the early claim that ENCODE showed that 80% of our genome had a function was incorrect, and there remains a huge portion of the human genome that’s nonfunctional,” and you added: “And even if 80% of the human genome were functional, how does that prove the existence of an intelligent designer? What about the other 20%? Did the Great Designer screw up there?”

Before I pose my question, I'm going to make a concession up front that will raise a few eyebrows: after reviewing the arguments, I'm inclined to believe that the critics of ENCODE's bold claim were mostly right, and that the proportion of our genome which is functional is probably between 10 and 20%. (And as you correctly point out, Professor, even ENCODE has now backtracked from its claim.)

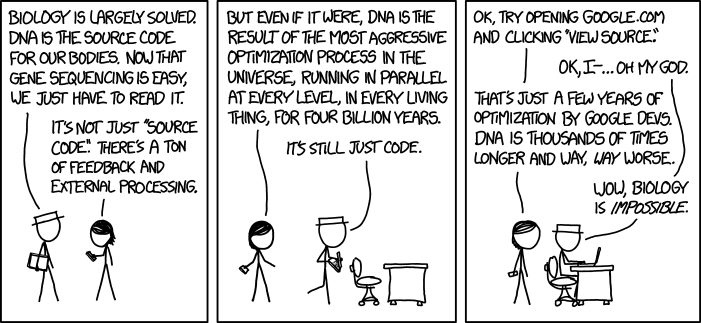

I’d now like to draw your attention to a WEIT post titled, DNA: optimised source code?, written by Professor Matthew Cobb, who is a regular contributor to your Website. (I commented on the post here.) The post discussed the following cartoon from xkcd.


Professor Cobb argued that since at least 85% of our genome is junk, our DNA should be viewed as the mindless product of a series of historical accidents. But then he made a startling admission (the first bold emphasis is mine – VJT):

On a final note, in some cases, within this amazing noise, there are also astonishing examples of complexity which do indeed appear to be the result of optimisation – and they would boggle the mind of anyone, not just a cocky computer scientist in a hat. In Drosophila there is a gene called Dscam, which is involved in neuronal development and has four clusters of exons (bits of the gene that are expressed – hence exon – in contrast to the apparently inert introns).

Each of these exons can be read by the cell in twelve, forty-eight, thirty-three or two alternative ways. As a result, the single stretch of DNA that we call Dscam can encode 38,016 different proteins. (For the moment, this is the record number of alternative proteins produced by a single gene. I suspect there are many even more extreme examples.)
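The figure of 38,016 follows from simple multiplication, assuming (as a simplification) that each mature Dscam transcript includes exactly one alternative exon from each of the four clusters and that any combination is possible:

$$12 \times 48 \times 33 \times 2 = 38{,}016$$

This counts the possible combinations only; it does not claim that every one of them is actually produced in the cell.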

Evidently Professor Cobb agrees with agnostic Bill Gates, who wrote twenty years ago: “Human DNA is like a computer program but far, far more advanced than any software ever created.” (The Road Ahead, Penguin: London, Revised Edition, 1996, p. 228.)

So my first question is: if (i) Nature contains systems which accomplish a feat (namely, coding for complex structures) in a manner which is far better than what our best computer scientists can do, and (ii) despite diligent searching, scientists have failed to observe any cases in Nature of unguided processes generating a new code from scratch, then why isn’t it reasonable to infer (at least provisionally) that these systems were designed by a super-human Intelligence? You tell me, Professor.

Regarding your remarks on junk DNA, I’d also like to draw your attention to something I wrote on my post in response to Professor Cobb:

Even if Professor Cobb is right about junk DNA – and I'm inclined to think he is (for reasons I'll discuss in another post) – that's beside the point. At most, what it shows is that DNA which doesn't code for anything wasn't designed. But my question is: what about the DNA which does code for proteins, and which does so in a manner that boggles the ingenuity of our brightest minds? Professor Cobb, it seems, is missing the wood for the trees here.

Junk DNA might be described as degenerate code – but there has to be a code in the first place, before it can degenerate. The existence of junk DNA cannot be used as an argument against design: all it establishes is that the designer of our DNA – whether out of benign neglect, laziness, illness, or ignorance that something has gone amiss – doesn’t always fix the code he created, when it becomes corrupted. Accordingly, junk DNA cannot be used as a legitimate argument against the proposition that the DNA in our cells which codes for genes was designed.

My second question, Professor Coyne, relates to your remarks on epigenetics, which Casey Luskin cited in his latest post as his “Exhibit B” of a prediction made by Intelligent Design that had been fulfilled:

Exhibit B: The burgeoning field of epigenetics has also validated ID’s prediction of new layers of information, code, and complex regulatory mechanisms in life. We’ve seen discoveries of new DNA codes (e.g., multiple meanings for synonymous codons), as well as the histone code, the RNA splicing code, the sugar code, and others. It’s a great time to be an ID proponent!

In response, you wrote:

Umm. . . the “new layer of information” that ID predicted was DIVINE information, not epigenetics. And the part of epigenetics that does add “information” — the epigenetic modifications of DNA already encoded in the genome — have been known for a long time. As for those “Lamarckian” modifications induced by the environment, well, that “information” is erased after a couple of generations, and so has no evolutionary import.

May I point out for the record that epigenetics can be defined as “the study of changes in organisms caused by modification of gene expression rather than alteration of the genetic code itself,” and that the use of the term “epigenetic” to describe processes that are not heritable is highly controversial. But let that pass.

I’d now like to draw your attention to a passage from Dr. Stephen Meyer’s book, Darwin’s Doubt (HarperOne, New York, 2013), concerning the role of epigenetic information in embryonic body-plan formation:

These different sources of epigenetic information in embryonic cells pose an enormous challenge to the sufficiency of the neo-Darwinian mechanism. According to neo-Darwinism, new information, form, and structure arise from natural selection acting on random mutations arising at a very low level within the biological hierarchy — within the genetic text. Yet both body-plan formation during embryological development and major morphological innovation during the history of life depend upon a specificity of arrangement at a much higher level of the organizational hierarchy, a level that DNA alone does not determine. If DNA isn’t wholly responsible for the way an embryo develops — for body-plan morphogenesis — then DNA sequences can mutate indefinitely and still not produce a new body plan, regardless of the amount of time and the number of mutational trials available to the evolutionary process. Genetic mutations are simply the wrong tool for the job at hand.

Even in a best-case scenario — one that ignores the immense improbability of generating new genes by mutation and selection — mutations in DNA sequence would merely produce new genetic information. But building a new body plan requires more than just genetic information. It requires both genetic and epigenetic information — information by definition that is not stored in DNA and thus cannot be generated by mutations to the DNA. It follows that the mechanism of natural selection acting on random mutations in DNA cannot by itself generate novel body plans, such as those that first arose in the Cambrian explosion. (pp. 281-282.)

So my second question for you is: will you concede that neo-Darwinism is unable to account for the origin of the epigenetic information needed to create novel body-plans (which must have occurred before the Cambrian explosion took place), and that natural selection therefore doesn’t explain all cases of apparent design in nature, falsifying your previous claim that it’s the “only game in town” for producing adaptations?

Incidentally, are you aware of any good evidence that epigenetic information is not divine in origin? If so, please elaborate.

Best wishes for your retirement,

Vincent Torley