UB writes:

UB, only way thread, 164: >>My apologies to Origenes, he had asked for my comment, but I was away . . . . I am no expert of course, but thank you for asking me to comment. Frankly you didn’t need my opinion anyway. When you ask “What is the error in supposing something?” you likely already know there is no there there. And someone seriously asking you (like some odd prosecution of your logic) to enumerate what exactly is the biological error or the chemical error in the proposition of something that has never before been seen or recorded in either biology or chemistry — well whatever.

Deacon begins by asking the question, what is necessary and sufficient to treat a molecule as a sign. He is 50 to 150 years late on that question (depending on how one wants to look at it). In any case (setting aside for the moment his reliance on “uncharacterized” chemistry) he doesn’t get to where he is going, and he tells you as much in his Conclusions. He says his exercise “falls well short” of the origin of the code, but he reckons that his exercise offers something more basic. Regardless of what one might feel about proposing unknown chemistry as a “proof of principle”, his paper doesn’t offer the pathway implied by the title of the paper (a title that Deacon chose to honor the work of Howard Pattee, How does a molecule become a message Pattee, 1969). From my perspective, even with the admitted reliance on unknown chemistry, Deacon still doesn’t get from dynamics to descriptions and doesn’t shed any particular light on the problem.

I might suggest you look at Howard Pattee’s own response to Deacon’s paper. I do not know where it is available or if it is behind a paywall somewhere, but I have a copy here in front of me. It has a little bit of a cool tone to it. He begins the paper with (first sentence) “Deacon speculates on the origin of interpretation of signs using autocatalytic origin of life models and Peircean terminology” and in the very next sentence takes a rather direct contrary position.

He begins by offering some background:

The focus of my paper “How does a molecule become a message?” (Pattee 1969) that Deacon (2021) has honored, was a search for the simplest language in which messages were both heritable and open-ended. I was trying to satisfy Von Neumann’s condition for evolvable self-replication. He argued that it is necessary to have a separate non-dynamic description that (1) resides in memory, (2) can be copied, and (3) can instruct a dynamic universal constructor. (I replaced “description” with “message” simply for alliteration.) I concluded (Pattee 1969, 8): “A molecule becomes a message only in the context of a larger system of physical constraints which I have called a ‘language’ in analogy to our normal usage of the concept of message.” A language consists of a small, fixed set of symbols (an alphabet) and rules (a grammar) in which the symbols can be catenated indefinitely to produce an unlimited number of meaningful or functional sequences (messages).

… and then goes on to offer some ancillary corrections before addressing Deacons model in full (i.e. “Before discussing Deacon’s main thesis, I need to respond to his misleading history of molecular biology”). He then discusses the (three-dimensional) structuralist and the (one-dimensional) informationalist camps in the OoL field, and then under the heading “Deacon’s Model” he concludes:

There are three well-known problems with autocatalytic cycle models: (1) limited information capacity (What are the symbol vehicles?), (2) instability of multiple dynamic cycles (error catastrophe), and (3) no known transition to the present nucleic acid-to-protein genetic code. The only known way to mitigate problems (1) and (2) is to solve (3), that is, to transition from dynamic catalysts to a symbol-code-construction system. Deacon recognizes these problems and his solution to (3) is to “offload” autogen catalyst information to RNA-like template molecules:

“Offloading (or transfer of constraints) is afforded because complementary structural similarities between catalysts and regions of the template molecule facilitate catalyst binding in a particular order that by virtue of their positional correlations biases their interaction probabilities.”

Deacon’s offloading is the inverse of the Central Dogma’s information flow from inactive one-dimensional sequences to three-dimensional active catalysts. Deacon’s offloading information flow is from three-dimensional active catalysts to one-dimensional inactive sequences. His offloading speculations require many vague chemical steps with unknown probabilities of abiotic occurrence. Deacon claims that these are “chemically realistic” steps, but he gives no example or evidence of this inverse process. Adding to the chemical vagueness of offloading, Deacon applies the Peircean vocabulary, icon, index, and symbol, and the immediate, dynamic and final interpretants. This Peircean terminology does not help explain or support a chemistry of offloading, nor does it make clearer how molecules become signs.

It appears to me that speculation of unknown chemistry, mixed with language like “proofs”, is an recognizable problem among both experts and laypeople alike.

Note: Just so no one is mistaken, Howard Pattee is a unguided origin of life proponent, but he strongly believes that the speculation of answers must have a foot in chemical and physical reality. In other words, he believes that genetic symbols have their grounding directly in the folded proteins they specify, but also acknowledges that the triadic sign-relationship (symbol, constraint, referent) is required for the specification of those proteins from a transcribable memory. He doesn’t pretend to have an answer to the problem of the transition from dynamics to descriptions, and he doesn’t write papers like Terrance Deacon.

All of this is highly relevant and worth being on headlined record.

We may note from Wikipedia’s confessions:

In information theory and computer science, a code is usually considered as an algorithm that uniquely represents symbols from some source alphabet, by encoded strings, which may be in some other target alphabet. An extension of the code for representing sequences of symbols over the source alphabet is obtained by concatenating the encoded strings.

Before giving a mathematically precise definition, this is a brief example. The mapping

C = { a ↦ 0 , b ↦ 01 , c ↦ 011 }

is a code, whose source alphabet is the set { a , b , c } and whose target alphabet is the set { 0 , 1 }. Using the extension of the code, the encoded string 0011001 can be grouped into codewords as 0 011 0 01, and these in turn can be decoded to the sequence of source symbols acab.

Using terms from formal language theory, the precise mathematical definition of this concept is as follows:

let S and T be two finite sets, called the source and target alphabets, respectively.

A code C : S → T∗ is a total function mapping each symbol from S to a sequence of symbols over T.

The extension C′ of C, is a homomorphism of S∗ into T∗ , which naturally maps each sequence of source symbols to a sequence of target symbols.

In short, as Wikipedia noted, a code is “a system of rules to convert information—such as a letter, word, sound, image, or gesture—into another form, sometimes shortened or secret, for communication through a communication channel or storage in a storage medium.”

The example of a storage medium that pops up with the link on “code medium”? DNA:

More from Lehninger:

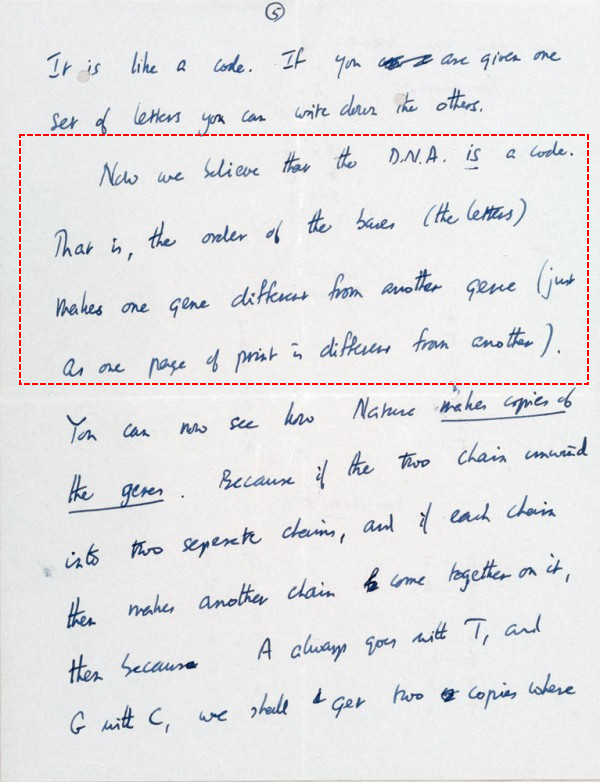

And, from Crick, in his March 19, 1953 letter to his son, notice, how in the first three sentences on p. 5 of this $6 million letter, he shifts from “is like a code” to “is a code”:

Languages, of course, are symbolic systems that express meaningful, functional information through the organisation of representative elements. These can be sounds, glyphs, gestures and more.

Thanks to UB, we have food for thought. END

PS, regrettably, as JVL injected a personality, I think I must also headline UB’s response to the accusation of closed mindedness:

JVL: “ I’m not sure Upright BiPed will grace us with his opinion. He tends to avoid having to admit he might be wrong.

JVL, I gave you researcher’s names, the dates of experiments, and the experimental results. You were forced to agree with all of it. If you’d now like to assert that I’ve made an error in that history, by all means, point it out. I don’t believe you can, and I don’t believe you will. It has to be remembered here that your core position is that the design inference at the origin of life — clearly recorded in the history of science and experiment — is summarily invalidated because the proponents of an unguided OoL simply don’t believe it. Your position (a well-known logical fallacy) deliberately separates conclusions from evidence and destroys science as a methodological approach to knowledge.

I trust, we can now move on to address substance on the merits, instead.