On August 7th, News started a discussion on time’s arrow (which ties to the second law of thermodynamics). I found an interesting comment by FF:

FF, 4: >> It’s always frustrating to read articles on time’s arrow or time travel. In one camp, we have the Star Trek physics fanatics who believe in time travel in any direction. In the other camp, we have those who believe only in travel toward the future. But both camps are wrong. It is logically impossible for time to change at all, in any direction. We are always in the present, a continually changing present. This is easy to prove. Changing time is self-referential. Changing time (time travel) would require a velocity in time which would have to be given as v = dt/dt = 1, which is of course nonsense. That’s it. This is the only proof you need. And this is the reason that nothing can move in spacetime and why Karl Popper called spacetime, “Einstein’s block universe in which nothing happens.” . . . >>

That set me to thinking, and I responded. The connexions involved in that response in turn lead to the core framework of the design inference. Accordingly, I think this goes to logic and first principles, given the fundamental character of physics and particularly of thermodynamics and its bridge to information:

KF, 6: >>a set of puzzles.

U/D May 8, 2021, let’s illustrate fine tuning, from Lewis and Barnes:

Obviously, we inhabit what we call now, which changes in a successive, causally cumulative pattern. Thus we identify past, present (ever advancing), future. In that world, there are entities that while they may change, have a coherent identity: be-ings.

As a part of change, energy flows and does so in a way that gradually dissipates its concentrations, hence time’s arrow. This flows from the statistical properties of phase spaces for systems with large [typically 10^18 to 10^26 and up] numbers of particles. Thus we come to the overwhelming trend towards clusters of microstates with dominant statistical weight. We can typify by pondering a string of 500 to 1,000 coins and their H/T possibilities. If you want a more “physical” analogue, try a paramagnetic substance with as many elements in a weak B-field that specifies alignment with or against its direction. We then see that overwhelmingly near 50-50 in H/T or With/Against dominates in a sharply peaked binomial distribution.

Thus, we may contemplate the 10^57 atoms of the Sol system (or the ~ 10^80 of the observed cosmos), each acting as an observer of a string of 500 coins (or, 1,000):

Thus we see the binomial distribution of coin-flipping possibilities, here based on 10,000 actual tosses iterated 100,000 times, also indicating just how tight the peak is, mostly being between 4,850 and 5,150 H:

Thus we see the roots of discussions on fluctuations:

Note, not coincidentally, sqrt (10^4) = 10^2, or 100.

(Compare the bulk of the 10,000 coin toss plot on 100,000 repetitions, centred on 5,000 H, with +/- 100 capturing the main part. Obviously possibilities run from 0 H to 10,000 H but there is a sharply peaked clustering about the “average”.)
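The tightness of that peak can be checked directly. What follows is a minimal sketch, not the simulation behind the plot: instead of iterating 100,000 runs, it sums the exact binomial probabilities for a fair coin. The names `prob_heads_between`, `p_within_100` and `p_within_150` are illustrative assumptions. For a fair coin the standard deviation is sqrt(n)/2 = 50, so the +/- 100 band is about two standard deviations and the 4,850 to 5,150 band about three.

```python
from math import comb, sqrt

n = 10_000                      # tosses per run, as in the plot discussed above
sigma = sqrt(n * 0.5 * 0.5)     # standard deviation of the head count for a fair coin

def prob_heads_between(lo, hi, n=n):
    """Exact probability that a fair coin gives between lo and hi heads (inclusive)."""
    favourable = sum(comb(n, k) for k in range(lo, hi + 1))
    return favourable / 2**n    # exact big-integer ratio, rounded once to a float

p_within_100 = prob_heads_between(4_900, 5_100)   # the +/- 100 band
p_within_150 = prob_heads_between(4_850, 5_150)   # the band quoted for the plot

print(f"sigma = {sigma:.0f} heads")
print(f"P(4,900 <= H <= 5,100) = {p_within_100:.4f}")
print(f"P(4,850 <= H <= 5,150) = {p_within_150:.4f}")
```

Summing exact probabilities avoids sampling noise, so the figures do not depend on a random seed.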

[INTRODUCING FSCO/I . . .

Functionally specific, complex organisation and/or associated information]

And, if the pattern of changes is not mechanically forced and/or intelligently guided, the resulting bit patterns overwhelmingly will have no particularly meaningful or configuration-dependent functionally specific, information-rich configuration. Indeed, this is another way of putting the key design inference that:

. . . on this sort of statistical analysis,

[A:] functionally specific, complex organisation and/or associated information (FSCO/I, the relevant subset of CSI)

[U/D Aug 2 2022]. . . a descriptive phrase and abbreviation for configuration-based function of considerable complexity where relatively minor disturbance of the arrangement or coupling of parts would destroy function or garble meaning, 500 – 1,000 bits of descriptive complexity being a useful threshold, as our Sol system has 10^57 atoms and the observed cosmos 10^80, where fast atomic interactions take perhaps 10^-14 s, in a cosmos of about 10^17 s, setting upper bounds for realistic atomic events of 10^88 to 10^111. Where, configuration spaces for 500 to 1,000 bits are 3.27*10^150 to 1.07*10^301, i.e. we see the search challenge for blind, needle-in-haystack searches. As a typical example we may ponder a fishing reel exploded diagram, or text in this post, or of course AA chain code algorithms stored in D/RNA. Kindly see the more recent discussion on ID as design inference, research programme and movement of supporters, here. ]

. . . is a highly reliable index of intelligently directed configuration as material causal factor.

Where, too, [B:] discussion on such a binomial distribution is WLOG, as [C:] any state or configuration, in principle — and using some description language and universal constructor to give effect — can be specified as a sufficiently long bit string. Trivially, try AutoCAD and NC instructions for machines.
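The threshold arithmetic quoted above (10^88 to 10^111 events against configuration spaces of 2^500 to 2^1,000) can be reproduced in a few lines. This is only a sketch of the stated figures; the constant and function names are assumptions introduced for illustration, and the inputs are the round numbers given in the text rather than measured values.

```python
# Round figures taken from the text above; all names are illustrative.
ATOMS_SOL = 10**57            # atoms in the Sol system
ATOMS_COSMOS = 10**80         # atoms in the observed cosmos
EVENTS_PER_SECOND = 10**14    # one fast atomic interaction per 10^-14 s
COSMOS_AGE_S = 10**17         # approximate age of the cosmos in seconds

def max_events(atoms):
    """Upper bound on atomic-scale events: every atom acting at the fast rate for the whole age."""
    return atoms * EVENTS_PER_SECOND * COSMOS_AGE_S

events_sol = max_events(ATOMS_SOL)
events_cosmos = max_events(ATOMS_COSMOS)

space_500 = 2**500            # configurations of a 500-bit string
space_1000 = 2**1000          # configurations of a 1,000-bit string

# Largest fraction of each space that could be sampled, one configuration per event.
fraction_sol = events_sol / space_500
fraction_cosmos = events_cosmos / space_1000

print(f"2^500  = {float(space_500):.2e}")
print(f"2^1000 = {float(space_1000):.2e}")
print(f"Sol system: at most {fraction_sol:.1e} of the 500-bit space")
print(f"Cosmos:     at most {fraction_cosmos:.1e} of the 1,000-bit space")
```

Python integers are arbitrary precision, so the powers are exact and only the final ratios are rounded to floats.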

Framing the term further, we may ponder Orgel, 1973, i.e. the concept FSCO/I is prior to the emergence of the modern ID research programme a decade plus later:

living organisms are distinguished by their specified complexity. Crystals are usually taken as the prototypes of simple well-specified structures, because they consist of a very large number of identical molecules packed together in a uniform way. Lumps of granite or random mixtures of polymers are examples of structures that are complex but not specified. The crystals fail to qualify as living because they lack complexity; the mixtures of polymers fail to qualify because they lack specificity . . . .

[HT, Mung, fr. p. 190 & 196:] These vague ideas can be made more precise by introducing the idea of information. Roughly speaking, the information content of a structure is the minimum number of instructions needed to specify the structure.

[–> this is of course equivalent to the string of yes/no questions required to specify the relevant J S Wicken “wiring diagram” for the set of functional states, T, in the much larger space of possible clumped or scattered configurations, W, as Dembski would go on to define in NFL in 2002, also cf here,

— here and

— here

— (with here on self-moved agents as designing causes).]

One can see intuitively that many instructions are needed to specify a complex structure. [–> so if the q’s to be answered are Y/N, the chain length is an information measure that indicates complexity in bits . . . ] On the other hand a simple repeating structure can be specified in rather few instructions. [–> do once and repeat over and over in a loop . . . ] Complex but random structures, by definition, need hardly be specified at all . . . . Paley was right to emphasize the need for special explanations of the existence of objects with high information content, for they cannot be formed in nonevolutionary, inorganic processes [–> Orgel had high hopes for what Chem evo and body-plan evo could do by way of info generation beyond the FSCO/I threshold, 500 – 1,000 bits.] [The Origins of Life (John Wiley, 1973), p. 189, p. 190, p. 196.]

J S Wicken, in a similar remark, wrote:

‘Organized’ systems are to be carefully distinguished from ‘ordered’ systems. Neither kind of system is ‘random,’ but whereas ordered systems are generated according to simple algorithms [i.e. “simple” force laws acting on objects starting from arbitrary and common- place initial conditions] and therefore lack complexity, organized systems must be assembled element by element according to an [originally . . . ] external ‘wiring diagram’ with a high information content . . . Organization, then, is functional complexity and carries information. It is non-random by design or by selection, rather than by the a priori necessity of crystallographic ‘order.’ [“The Generation of Complexity in Evolution: A Thermodynamic and Information-Theoretical Discussion,” Journal of Theoretical Biology, 77 (April 1979): p. 353, of pp. 349-65. (Emphases and notes added. Nb: “originally” is added to highlight that for self-replicating systems, the blue print can be built-in.)]

The source of the descriptive phrase and abbreviation FSCO/I is obvious.

U/D May 8, 2021: For convenience, I insert a map of deeply isolated islands of function based on configuration, in a large configuration space:

So too, we see how design by injection of active information moves to an initial island then from one to the next . . . the first search challenge is to find beach-heads of function in vast seas of non function, not hill climbing within such an island. However, that secondary challenge — given possibility of plateaus and valleys in fitness landscapes, should not be overlooked:

I have often used a fishing reel as a simple illustration of how FSCO/I manifests itself in complex, mutually adapted parts arranged and coupled together to work . . . and, yes, ABU of Sweden, designers and manufacturers of this famous family of reels, started out as clock makers (Taxi-Cab Meters):

In that context, it is well worth excerpting Paley from Ch 2 of his famous book, where he gave his full example, the self-replicating watch . . . no, it was not just stumbling across a stone vs finding an ordinary watch, as he discussed in Ch 1 of his Natural Theology. There is considerable, sobering, overlooked food for thought regarding the design inference here, especially as we then fast forward 150 years to von Neumann’s kinematic self-replicator:

Suppose, in the next place, that the person who found the watch [in a field and stumbled on the stone in Ch 1 just past, where this is 50 years before Darwin in Ch 2 of a work Darwin full well knew about] should after some time discover that, in addition to

[–> here cf encapsulated, gated, metabolising automaton, and note, “stickiness” of molecules raises a major issue of interfering cross reactions thus very carefully controlled organised reactions are at work in life . . . ]

all the properties [= specific, organised, information-rich functionality] which he had hitherto observed in it, it possessed the unexpected property of producing in the course of its movement another watch like itself

[–> i.e. self replication, cf here the code using von Neumann kinematic self replicator that is relevant to first cell based life]

. . . — the thing is conceivable [= this is a gedankenexperiment, a thought exercise to focus relevant principles and issues]; that it contained within it a mechanism, a system of parts — a mold, for instance, or a complex adjustment of lathes, baffles, and other tools — evidently and separately calculated for this purpose

[–> it exhibits functionally specific, complex organisation and associated information; where, in mid-late C19, cell based life was typically thought to be a simple jelly-like affair, something molecular biology has long since taken off the table but few have bothered to pay attention to Paley since Darwin]

. . . . The first effect would be to increase his admiration of the contrivance, and his conviction of the consummate skill of the contriver. Whether he regarded the object of the contrivance, the distinct apparatus, the intricate, yet in many parts intelligible mechanism by which it was carried on, he would perceive in this new observation nothing but an additional reason for doing what he had already done — for referring the construction of the watch to design and to supreme art

[–> directly echoes Plato in The Laws Bk X on the ART-ificial (as opposed to the strawman tactic “supernatural”) vs the natural in the sense of blind chance and/or mechanical necessity as serious alternative causal explanatory candidates; where also the only actually observed cause of FSCO/I is intelligently configured configuration, i.e. contrivance or design]

. . . . He would reflect, that though the watch before him were, in some sense, the maker of the watch, which, was fabricated in the course of its movements, yet it was in a very different sense from that in which a carpenter, for instance, is the maker of a chair — the author of its contrivance, the cause of the relation of its parts to their use [–> i.e. design].

. . . . We might possibly say, but with great latitude of expression, that a stream of water ground corn; but no latitude of expression would allow us to say, no stretch of conjecture could lead us to think, that the stream of water built the mill, though it were too ancient for us to know who the builder was. What the stream of water does in the affair is neither more nor less than this: by the application of an unintelligent impulse to a mechanism previously arranged, arranged independently of it and arranged by intelligence, an effect is produced, namely, the corn is ground. But the effect results from the arrangement. [–> points to intelligently directed configuration as the observed and reasonably inferred source of FSCO/I] The force of the stream cannot be said to be the cause or the author of the effect, still less of the arrangement. Understanding and plan in the formation of the mill were not the less necessary for any share which the water has in grinding the corn; yet is this share the same as that which the watch would have contributed to the production of the new watch

. . . . Though it be now no longer probable that the individual watch which our observer had found was made immediately by the hand of an artificer, yet doth not this alteration in anywise affect the inference, that an artificer had been originally employed and concerned in the production. The argument from design remains as it was. Marks of design and contrivance are no more accounted for now than they were before. In the same thing, we may ask for the cause of different properties. We may ask for the cause of the color of a body, of its hardness, of its heat ; and these causes may be all different. We are now asking for the cause of that subserviency to a use, that relation to an end, which we have remarked in the watch before us. No answer is given to this question, by telling us that a preceding watch produced it. There cannot be design without a designer; contrivance, without a contriver; order [–> better, functionally specific organisation], without choice; arrangement, without any thing capable of arranging; subserviency and relation to a purpose, without that which could intend a purpose; means suitable to an end, and executing their office in accomplishing that end, without the end ever having been contemplated, or the means accommodated to it.

Arrangement, [–> complex] disposition of parts, subserviency of means to an end, relation of instruments to a use, imply the presence of intelligence and mind. No one, therefore, can rationally believe that the insensible, inanimate watch, from which the watch before us issued, was the proper cause of the mechanism we so much admire in it — could be truly said to have constructed the instrument, disposed its parts, assigned their office, determined their order, action, and mutual dependency, combined their several motions into one result, and that also a result connected with the utilities of other beings. All these properties, therefore, are as much unaccounted for as they were before. Nor is any thing gained by running the difficulty farther back, that is, by supposing the watch before us to have been produced from another watch, that from a former, and so on indefinitely. Our going back ever so far brings us no nearer to the least degree of satisfaction upon the subject. Contrivance is still unaccounted for. We still want a contriver. A designing mind is neither supplied by this supposition nor dispensed with. If the difficulty were diminished the farther we went back, by going back indefinitely we might exhaust it. And this is the only case to which this sort of reasoning applies. “Where there is a tendency, or, as we increase the number of terms, a continual approach towards a limit, there, by supposing the number of terms to be what is called infinite, we may conceive the limit to be attained; but where there is no such tendency or approach, nothing is effected by lengthening the series . . . . And the question which irresistibly presses upon our thoughts is, Whence this contrivance and design? The thing required is the intending mind, the adapted hand, the intelligence by which that hand was directed.
This question, this demand, is not shaken off by increasing a number or succession of substances destitute of these properties; nor the more, by increasing that number to infinity. If it be said, that upon the supposition of one watch being produced from another in the course of that other’s movements, and by means of the mechanism within it, we have a cause for the watch in my hand, namely, the watch from which it proceeded — I deny, that for the design, the contrivance, the suitableness of means to an end, the adaptation of instruments to a use, all of which we discover in the watch, we have any cause whatever. It is in vain, therefore, to assign a series of such causes, or to allege that a series may be carried back to infinity; for I do not admit that we have yet any cause at all for the phenomena, still less any series of causes either finite or infinite. Here is contrivance, but no contriver; proofs of design, but no designer. [Paley, Nat Theol, Ch 2]

I further add, that I find it almost inconceivable that for 150 years since Darwin, we have hardly ever heard this part of Paley’s argument. Paley anticipated von Neumann by 150 years and should get full marks for posing the challenge of self-replicating machinery and what that ADDITIONALITY further points to.

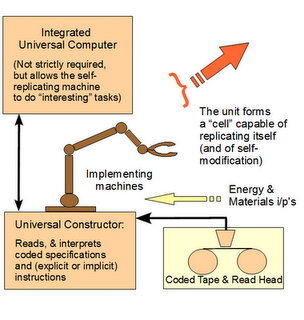

So, duly awakened to Paley’s full argument, let us now consider a von Neumann kinematic self replicator [vNSR] as both a machine with universal fabrication in principle and as a functional, information-rich highly organised component of the living cell with its own origin to be explained as the basis for reproduction in cell based life:

As a result, we see that Orgel is right:

>living organisms are distinguished by their specified complexity. Crystals are usually taken as the prototypes of simple well-specified structures, because they consist of a very large number of identical molecules packed together in a uniform way. Lumps of granite or random mixtures of polymers are examples of structures that are complex but not specified. The crystals fail to qualify as living because they lack complexity; the mixtures of polymers fail to qualify because they lack specificity . . . .

[HT, Mung, fr. p. 190 & 196:]

These vague ideas can be made more precise by introducing the idea of information. Roughly speaking, the information content of a structure is the minimum number of instructions needed to specify the structure.

[–> this is of course equivalent to the string of yes/no questions required to specify the relevant J S Wicken “wiring diagram” for the set of functional states, T, in the much larger space of possible clumped or scattered configurations, W, as Dembski would go on to define in NFL in 2002, also cf here,

— here and

— here

— (with here on self-moved agents as designing causes).]

One can see intuitively that many instructions are needed to specify a complex structure. [–> so if the q’s to be answered are Y/N, the chain length is an information measure that indicates complexity in bits . . . ] On the other hand a simple repeating structure can be specified in rather few instructions. [–> do once and repeat over and over in a loop . . . ] Complex but random structures, by definition, need hardly be specified at all . . . . Paley was right to emphasize the need for special explanations of the existence of objects with high information content, for they cannot be formed in nonevolutionary, inorganic processes [–> Orgel had high hopes for what Chem evo and body-plan evo could do by way of info generation beyond the FSCO/I threshold, 500 – 1,000 bits.] [The Origins of Life (John Wiley, 1973), p. 189, p. 190, p. 196.]>

Note, here, on protein synthesis:

. . . in the wider context of cellular metabolism (protein synthesis is in the upper left corner, as an action of ribosomes):

. . . contrasted with, say, a petroleum refinery:

. . . where also, let us note the use of coded, alphanumeric strings . . . language, expressing algorithms . . . in the synthesis of proteins, as Yockey went on to observe:

Thus, we come to the heart of why the design inference on FSCO/I is so reliable: trillions of observed cases of its origin; uniformly, caused by intelligently directed configuration.

So, the design inference has underpinnings in the informational view of statistical thermodynamics, on which entropy is seen as a metric of the average missing information needed to specify the particular microstate, given only the macro-observable state. Thus, too, the degree of randomness of a particular configuration is strongly tied to its required “minimum” description length. A truly random configuration essentially has to be quoted to describe it. A highly orderly (so, repetitive) one can be specified as: unit cell, repeated n times in some array. Information-rich descriptions are intermediate and are meaningful in some language: language is naturally somewhat compressible but is not reducible to repeating unit cells.
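The three-way contrast in description length (repetitive, meaningful, random) can be illustrated with a general-purpose compressor. This is a rough sketch under stated assumptions: compressed size is only an upper-bound proxy for minimum description length (which is not computable in general), `zlib` stands in for "some description language", the sample text is the Orgel sentence quoted earlier, and the names `ordered`, `text`, `noise` and `ratio` are illustrative.

```python
import random
import zlib

# Meaningful text: part of the Orgel passage quoted earlier in this post.
text = (
    "living organisms are distinguished by their specified complexity. "
    "Crystals are usually taken as the prototypes of simple well-specified "
    "structures, because they consist of a very large number of identical "
    "molecules packed together in a uniform way. Lumps of granite or random "
    "mixtures of polymers are examples of structures that are complex but "
    "not specified."
).encode("ascii")
n = len(text)

# Orderly configuration: a two-symbol "unit cell" repeated to the same length.
ordered = (b"HT" * n)[:n]

# Random configuration: pseudo-random bytes from a fixed seed, for repeatability.
rng = random.Random(0)
noise = bytes(rng.getrandbits(8) for _ in range(n))

def ratio(data):
    """Compressed size divided by original size (smaller means a shorter description)."""
    return len(zlib.compress(data, 9)) / len(data)

for label, data in (("ordered", ordered), ("text", text), ("random", noise)):
    print(f"{label:8s} {ratio(data):.2f}")
```

On this measure the repeated unit cell should shrink to a small fraction of its length, the random bytes should not shrink at all, and the English text should fall in between.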

Consequently, the design inference on FSCO/I is robust.

We also recognise a space-time matrix and presence of fields so that there is a context of interactions of beings in the common world.

However, simultaneity turns out to be an observer-relative phenomenon, and energy and information face a speed limit, c. Further, massive bodies of planetary, stellar or galactic scale sufficiently warp the space-time fabric to have material effects. Along the way, the micro scale is quantised, leading also to a world of virtual particles below the Einstein energy-time uncertainty threshold that shapes field interactions and may have macro effects up to the level of cosmic inflation and even bubbles expanding into sub-cosmi.

There are also issues of rationally free mind rising above what computing substrates can do as dynamic-stochastic systems, interacting with brains through quantum influences.

Reality is complex yet somehow unified — the first metaphysical problem contemplated as a philosophical general issue in our civilisation, the one and the many.

And that in turn points to the importance of the world-root that springs up into the causal-temporal, successive, cumulative order that we inhabit. >>

Walker and Davies add further insights:

In physics, particularly in statistical mechanics, we base many of our calculations on the assumption of metric transitivity, which asserts that a system’s trajectory will eventually [–> given “enough time and search resources”] explore the entirety of its state space – thus everything that is physically possible will eventually happen. It should then be trivially true that one could choose an arbitrary “final state” (e.g., a living organism) and “explain” it by evolving the system backwards in time choosing an appropriate state at some ’start’ time t_0 (fine-tuning the initial state). In the case of a chaotic system the initial state must be specified to arbitrarily high precision. But this account amounts to no more than saying that the world is as it is because it was as it was, and our current narrative therefore scarcely constitutes an explanation in the true scientific sense.

We are left in a bit of a conundrum with respect to the problem of specifying the initial conditions necessary to explain our world. A key point is that if we require specialness in our initial state (such that we observe the current state of the world and not any other state) metric transitivity cannot hold true, as it blurs any dependency on initial conditions – that is, it makes little sense for us to single out any particular state as special by calling it the ’initial’ state. If we instead relax the assumption of metric transitivity (which seems more realistic for many real world physical systems – including life), then our phase space will consist of isolated pocket regions and it is not necessarily possible to get to any other physically possible state (see e.g. Fig. 1 for a cellular automata example).

[–> or, there may not be “enough” time and/or resources for the relevant exploration, i.e. we see the 500 – 1,000 bit complexity threshold at work vs 10^57 – 10^80 atoms with fast rxn rates at about 10^-13 to 10^-15 s leading to inability to explore more than a vanishingly small fraction on the gamut of Sol system or observed cosmos . . . the only actually, credibly observed cosmos]

Thus the initial state must be tuned to be in the region of phase space in which we find ourselves [–> notice, fine tuning], and there are regions of the configuration space our physical universe would be excluded from accessing, even if those states may be equally consistent and permissible under the microscopic laws of physics (starting from a different initial state). Thus according to the standard picture, we require special initial conditions to explain the complexity of the world, but also have a sense that we should not be on a particularly special trajectory to get here (or anywhere else) as it would be a sign of fine–tuning of the initial conditions. [ –> notice, the “loading”] Stated most simply, a potential problem with the way we currently formulate physics is that you can’t necessarily get everywhere from anywhere (see Walker [31] for discussion). [“The “Hard Problem” of Life,” June 23, 2016, a discussion by Sara Imari Walker and Paul C.W. Davies at Arxiv.]
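The point that "you can’t necessarily get everywhere from anywhere" can be seen in a toy deterministic system. The sketch below is not the automaton from Walker and Davies’ Fig. 1; it assumes, purely for illustration, the elementary cellular automaton known as rule 90 (each cell becomes the XOR of its two neighbours) on a ring of 6 cells, and the names `step`, `trajectory`, `attractors` and `largest_reach` are hypothetical. It counts how many states any single starting state can ever visit, and how many separate end cycles the 64-state space splits into.

```python
from itertools import product

N = 6  # ring size, an illustrative assumption

def step(state):
    """Rule 90 on a ring: each cell becomes the XOR of its two neighbours."""
    return tuple(state[(i - 1) % N] ^ state[(i + 1) % N] for i in range(N))

def trajectory(start):
    """Follow the deterministic dynamics until a state repeats.

    Returns the set of visited states and the frozenset of states on the final cycle.
    """
    order, position = [], {}
    s = start
    while s not in position:
        position[s] = len(order)
        order.append(s)
        s = step(s)
    return set(order), frozenset(order[position[s]:])

all_states = list(product((0, 1), repeat=N))
attractors = set()      # distinct end cycles ("pockets") found
largest_reach = 0       # most states visited from any single start

for s in all_states:
    visited, cycle = trajectory(s)
    attractors.add(cycle)
    largest_reach = max(largest_reach, len(visited))

total_states = len(all_states)
print(f"{total_states} states, {len(attractors)} separate end cycles")
print(f"no start reaches more than {largest_reach} states")
```

Because the rule maps several states onto the same successor, most states have no predecessor at all, so no trajectory can tour the whole space. Which pocket the system ends in is fixed by where it starts.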

Again, food for thought. END

PS: I followed up by searching and commented at 2 below. On reflection what is there is so pivotal that I append it as integral to the OP. And no, I do not have to endorse or even like the corpus of a thinker’s work in order to acknowledge where s/he has made a truly striking, powerfully penetrating point. We are invited to not despise “prophesyings,” but to test all things and hold on to the good. In that spirit:

KF, 2 below: >> . . . I find an interesting discussion on the one and the many:

One of the most basic and continuing problems of man’s history is the question of the one and the many and their relationship. The fact that in recent years men have avoided discussion of this matter has not ceased to make their unstated presuppositions with respect to it determinative of their thinking.

Much of the present concern about the trends of these times is literally wasted on useless effort because those who guide the activities cannot resolve, with the philosophical tools at hand to them, the problem of authority. This is at the heart of the problem of the proper function of government, the power to tax, to conscript, to execute for crimes, and to wage warfare. The question of authority is again basic to education, to religion, and to the family. Where does authority rest, in democracy or in an elite, in the church or in some secular institution, in God or in reason? . . . The plea that this is a pluralistic culture is merely recognition of the problem, not an answer. The problem of authority is not answerable to reason alone, and basic to reason itself are pre-theoretical suppositions or axioms which represent essentially religious commitments. And one such basic commitment is with respect to the question of the one and the many. The fact that students can graduate from our universities as philosophy majors without any awareness of the importance or centrality of this question does not make the one and the many less basic to our thinking. The difference between East and West, and between various aspects of Western history and culture, rests on answers to this problem which, whether consciously or unconsciously, have been made. Whether recognized or not, every argument and every theological, and philosophical, political, or any other exposition is based on a presupposition about man, God, and society, about reality. This presupposition rules and determines the conclusion; the effect is the result of a cause. And one such basic presupposition is with reference to the one and the many.

[ By R. J. Rushdoony April 24, 2017 Adapted from The One and the Many: Studies in the Philosophy of Order and Ultimacy ]

The one and the many, unity in coherent (and hopefully intelligible) diversity as a cosmos, is absolutely pivotal. That includes its bearing on the force of the design inference in light of time’s arrow, and also on onward issues of the root of reality, the necessary and independent core from which all the diversity we see springs.

So, this question we must tackle, tackle in a fundamental way.>>