The recent Engineering and ID conference was obviously fruitful. I find it helpful (HT: JohnnyB) to present Mignea’s schematic for self-replication and discuss it a bit in the context of the origin of self-replicating entities, given von Neumann’s requisites for a successful kinematic self-replicator [henceforth, vNSR].

Let me extract from the just updated discussion in the IOSE course, Unit 2:

_________

>>John von Neumann’s self-replicator (1948 – 49) is a good focal case to study. Ralph Merkle gives a good motivating context:

[T]he costs involved in the exploration of the galaxy using self replicating probes would be almost exclusively the design and initial manufacturing costs. Subsequent manufacturing costs would then drop dramatically . . . . A device able to make copies of itself but unable to make anything else would not be very valuable. Von Neumann’s proposals centered around the combination of a Universal Constructor, which could make anything it was directed to make, and a Universal Computer, which could compute anything it was directed to compute. This combination provides immense value, for it can be re-programmed to make any one of a wide range of things . . . [Self Replicating Systems and Molecular Manufacturing, Xerox PARC, 1992. (Emphases added.)]

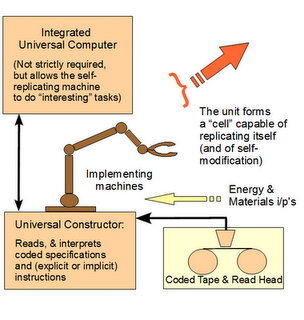

Fig. G.2: A schematic, 3-D/“kinematic” von Neumann-style self-replicating machine. [NB: von Neumann viewed self-replication as a special case of universal construction; “mak[ing] anything” under programmed control.] (Adapted, Tempesti. NASA’s illustration may be viewed here, and the Cairns-Smith model here.)

Fig. G.2 (b): Mignea’s schematic of the requisites of kinematic self-replication, showing duplication and arrangement, then separation into daughter automata. This requires stored algorithmic procedures, descriptions sufficient to construct components, means to execute instructions, materials handling, controlled energy flows, waste disposal and more. (Source: Mignea, 2012, slide show; fair use. Presentation speech is here.)

Von Neumann’s thought on a kinematic (physically acting, not a mere computer simulation) self-replicator that has the key property of additionality [i.e. it is capable of doing something of interest, and is able to replicate itself based on a stored, coded description and an implementing facility] may be summarised in brief, as Freitas and Merkle quite nicely do for us:

Von Neumann [3] concluded that the following characteristics and capabilities were sufficient for machines to replicate without degeneracy of complexity:

o Logical universality – the ability to function as a general-purpose computing machine able to simulate a universal Turing machine (an abstract representation of a computing device, which itself is able to simulate any other Turing machine) [310, 311]. This was deemed necessary because a replicating machine must be able to read instructions to carry out complex computations.

o Construction capability – to self-replicate, a machine must be capable of manipulating information, energy, and materials of the same sort of which it itself is composed.

o Constructional universality – In parallel to logical universality, constructional universality implies the ability to manufacture any of the finitely sized machines which can be formed from specific kinds of parts, given a finite number of different kinds of parts but an indefinitely large supply of parts of each kind.

Self-replication follows immediately from the above, since the universal constructor* must be constructible from the set of manufacturable parts. If the original machine is made of these parts, and it is a constructible machine, and the universal constructor is given a description of itself, it ought to be able to make more copies of itself . . . .

Von Neumann thus hit upon a deceptively simple architecture for machine replication [3]. The machine would have four parts – (1) a constructor “A” that can build a machine “X” when fed explicit blueprints of that machine; (2) a blueprint copier “B”; (3) a controller “C” that controls the actions of the constructor and the copier, actuating them alternately; and finally (4) a set of blueprints f(A + B + C) explicitly describing how to build a constructor, a controller, and a copier. The entire replicator may therefore be described as (A + B + C) + f(A + B + C) . . . .

Von Neumann [3] also pointed out that if we let X = (A + B + C + D) where D is any arbitrary automaton, then (A + B + C) + f(A + B + C + D) produces (A + B + C + D) + f(A + B + C + D), and “our constructing automaton is now of such a nature that in its normal operation it produces another object D as well as making a copy of itself.” In other words, it can create useful non-self products in addition to replicating itself and has become a productive general-purpose manufacturing system. Alternatively, it has the potential to evolve by incorporating new features into itself.

. . . . Now therefore, following von Neumann generally, such a machine capable of doing something of interest with an additional self-replicating facility uses . . .

Also, parts (ii), (iii) and (iv) are each necessary for and together are jointly sufficient to implement a self-replicating machine with an integral von Neumann universal constructor.

That is, we see here an irreducibly complex set of core components that must all be present in a properly organised fashion for a successful self-replicating machine to exist. [Take just one core part out, and self-replicating functionality ceases: the self-replicating machine is irreducibly complex (IC).]

This irreducible complexity is compounded by the requirement (i) for codes, requiring organised symbols and rules to specify both steps to take and formats for storing information, and (v) for appropriate material resources and energy sources.>>

________
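
As a rough illustration only, the four-part architecture quoted above, (A + B + C) + f(A + B + C), can be mimicked in a few lines of Python. This is a toy bookkeeping sketch, not a physical or kinematic model: the names `Automaton`, `construct`, `copy_blueprint` and `replicate` are hypothetical labels introduced here, parts are reduced to bare strings, and it is simply assumed that every part named in a blueprint is freely available.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Automaton:
    """Toy stand-in for a replicator: physical parts plus a stored blueprint."""
    parts: Tuple[str, ...]      # e.g. ("A", "B", "C")
    blueprint: Tuple[str, ...]  # f(...): description of the parts to build


def construct(blueprint: Tuple[str, ...]) -> Tuple[str, ...]:
    """Constructor A: interpret the blueprint and build the parts it names."""
    return tuple(blueprint)


def copy_blueprint(blueprint: Tuple[str, ...]) -> Tuple[str, ...]:
    """Copier B: duplicate the blueprint without interpreting it."""
    return tuple(blueprint)


def replicate(parent: Automaton) -> Automaton:
    """Controller C: run the constructor, then the copier, and join the results."""
    for needed in ("A", "B", "C"):
        if needed not in parent.parts:
            raise ValueError(f"parent lacks part {needed}; cannot replicate")
    body = construct(parent.blueprint)
    tape = copy_blueprint(parent.blueprint)
    return Automaton(parts=body, blueprint=tape)


# (A + B + C) + f(A + B + C) yields a copy of itself.
parent = Automaton(parts=("A", "B", "C"), blueprint=("A", "B", "C"))
child = replicate(parent)

# (A + B + C) + f(A + B + C + D) yields (A + B + C + D) + f(A + B + C + D).
extended = Automaton(parts=("A", "B", "C"), blueprint=("A", "B", "C", "D"))
offspring = replicate(extended)

print(child == parent)   # True
print(offspring.parts)   # ('A', 'B', 'C', 'D')
```

The toy captures only two features of the quoted scheme: the description is used twice, once interpreted (to build) and once uninterpreted (to copy), and a parent missing any of A, B or C cannot replicate at all.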

As we multiply these requisites by the Mignea steps and the implied irreducibly complex, quite specific functional relationships, we easily see why it is utterly implausible for such a system to arise and work based on chance collocations of detritus in our proverbial warm little Darwinian pond, on the gamut of the observed cosmos. The above will run past 1,000 bits of FSCO/I so fast that the barrier is a triviality. Indeed, we also begin to see that the 100,000 to 1 million bits of stored information in the simplest genomes is a significant underestimate of the information at work. Much of the relevant information is going to be in how the components are organised and communicate.
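
For a sense of scale, the raw arithmetic behind these figures can be checked directly. The sketch below only counts configurations and converts units. It assumes a four-letter alphabet stored at 2 bits per base as raw capacity, which is a simplifying assumption introduced here, and the variable and function names are illustrative.

```python
import math

# Number of distinct configurations of a 1,000-bit string.
THRESHOLD_BITS = 1_000
configurations = 2 ** THRESHOLD_BITS
decimal_digits = len(str(configurations))

# Raw capacity of one base in a four-letter alphabet (assumption: no
# redundancy or compression is taken into account).
BITS_PER_BASE = math.log2(4)


def bits_to_bases(bits: float) -> float:
    """Convert a bit count to the equivalent number of four-state bases."""
    return bits / BITS_PER_BASE


low_bits, high_bits = 100_000, 1_000_000

print(decimal_digits)                 # 302, i.e. roughly 1.07e301 configurations
print(bits_to_bases(low_bits))        # 50000.0
print(bits_to_bases(high_bits))       # 500000.0
print(low_bits // THRESHOLD_BITS)     # 100 times the 1,000-bit threshold
print(high_bits // THRESHOLD_BITS)    # 1000 times the 1,000-bit threshold
```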

Moreover, symbolic digital codes, algorithmic processes and execution units already point to purpose, planning and linguistic/logical processing of information, before cell-based life on earth with such a self-replicating facility existed, and as an integral part of the possibility of its existence.

Furthermore, while Freitas and Merkle briefly say that the vNSR “has the potential to evolve by incorporating new features into itself,” that is not so simple. For complex new function has to be integrated with the above, and the world of life’s new body plans requires 10 to 100 million bits of fresh information, dozens of times over. That too requires complex, functionally integrated information and organisation that can be assembled from a zygote or the equivalent by replication, specialisation and higher-level functional organisation.

Those who imagine that this can be had on the cheap, incrementally, accident by accident, with each step then fixed by differential reproductive success, should be required to show this empirically in a realistic case before going further. Otherwise, this is simply the spinning of just-so stories in the absence of empirical accountability.

On top of all of that, the above shows that the much derided Paley had a point when, in his Natural Theology, Ch 2, he argued by way of the thought exercise of a self-replicating, time-keeping watch:

In short, at the root of the Darwinian Tree of Life, we find a structure and system that point strongly to design. Design is not only in the door, but sitting at the table as a serious alternative from the outset. And that sharply changes our estimation of the credibility of design as candidate best explanation at higher levels in the tree.

Perhaps, then (pace Dawkins, Lewontin, Coyne, US NAS & NSTA et al.), we should be willing to reflect on whether the reason biological systems, from molecular scale to that of the whole organism, have so strong an appearance of being designed is because that is just what they are. Designed. END