A type of reasoning critical in the sciences:

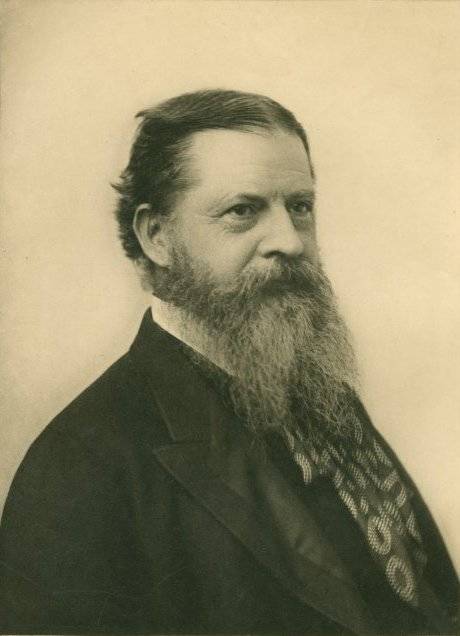

Abductive reasoning, originally developed by the American philosopher Charles Sanders Peirce (1839–1914), is sometimes called "inference to the best explanation," as in the following example:

“One morning you enter the kitchen to find a plate and cup on the table, with breadcrumbs and a pat of butter on it, and surrounded by a jar of jam, a pack of sugar, and an empty carton of milk. You conclude that one of your house-mates got up at night to make him- or herself a midnight snack and was too tired to clear the table. This, you think, best explains the scene you are facing. To be sure, it might be that someone burgled the house and took the time to have a bite while on the job, or a house-mate might have arranged the things on the table without having a midnight snack but just to make you believe that someone had a midnight snack. But these hypotheses strike you as providing much more contrived explanations of the data than the one you infer to.” –

Igor Douven, "Abduction," Stanford Encyclopedia of Philosophy

Notice that the conclusion is not, strictly, a deduction, and there is not enough evidence for an induction either. We simply choose the simplest explanation that accounts for all the facts, while keeping in mind that new evidence may force us to reconsider our view.

Now, why can't computers do that? William J. Littlefield II says that they would get stuck in an endless loop:

“A type of reasoning AI can’t replace” at Mind Matters News

Abduction is the kind of reasoning that intelligent design (ID) uses.

Further reading on computers and thought processes from Eric Holloway:

The flawed logic behind thinking computers:

Part I: A program that is intelligent must do more than reproduce human behavior

Part II: There is another way to prove a negative besides exhaustively enumerating the possibilities

and

Part III: No program can discover new mathematical truths outside the limits of its code

Will artificial intelligence design artificial superintelligence?

Artificial intelligence is impossible

and

Human intelligence as a Halting Oracle