Further to astronomer Hugh Ross on degrees of certainty in science, from Christie Aschwanden, FiveThirtyEight’s lead science writer:

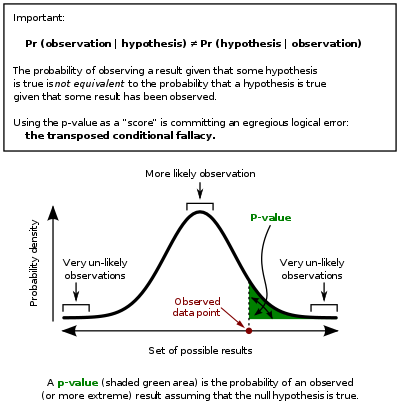

P-values have taken quite a beating lately. These widely used and commonly misapplied statistics have been blamed for giving a veneer of legitimacy to dodgy study results, encouraging bad research practices and promoting false-positive study results.

But after writing about p-values again and again, and recently issuing a correction on a nearly year-old story over some erroneous information regarding a study’s p-value (which I’d taken from the scientists themselves and their report), I’ve come to think that the most fundamental problem with p-values is that no one can really say what they are. More.

Here’s the theory, from Dummies, but apparently no one finds it easy to understand in practice:

For example, suppose a pizza place claims their delivery times are 30 minutes or less on average but you think it’s more than that. You conduct a hypothesis test because you believe the null hypothesis, Ho, that the mean delivery time is 30 minutes max, is incorrect. Your alternative hypothesis (Ha) is that the mean time is greater than 30 minutes. You randomly sample some delivery times and run the data through the hypothesis test, and your p-value turns out to be 0.001, which is much less than 0.05. In real terms, there is a probability of 0.001 that you will mistakenly reject the pizza place’s claim that their delivery time is less than or equal to 30 minutes. Since typically we are willing to reject the null hypothesis when this probability is less than 0.05, you conclude that the pizza place is wrong; their delivery times are in fact more than 30 minutes on average, and you want to know what they’re gonna do about it! (Of course, you could be wrong by having sampled an unusually high number of late pizza deliveries just by chance.)
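To make the pizza example concrete, here is a minimal Python sketch of a one-sided test. It is an illustration under stated assumptions, not the Dummies method: the delivery times are invented, the function names `t_statistic` and `simulated_p_value` are hypothetical, and the simulation assumes delivery times are roughly normally distributed. Note what is actually being counted: how often a world in which the pizza place's claim is true would produce a result at least as extreme as the one observed. That is what a p-value measures, and it is not quite the same thing as the probability that you are mistaken in rejecting the claim.

```python
import math
import random


def t_statistic(sample, mu0):
    """One-sample t-statistic for the null hypothesis 'true mean equals mu0'."""
    n = len(sample)
    mean = sum(sample) / n
    # Sample variance with the usual n - 1 denominator.
    var = sum((x - mean) ** 2 for x in sample) / (n - 1)
    return (mean - mu0) / math.sqrt(var / n)


def simulated_p_value(sample, mu0, n_sims=20000, seed=0):
    """Estimate the one-sided p-value by simulating the null hypothesis.

    Assumption: delivery times are normally distributed. Under that
    assumption the t-statistic's distribution does not depend on the
    spread, so a standard deviation of 1.0 is used for the fake samples.
    """
    rng = random.Random(seed)
    n = len(sample)
    observed = t_statistic(sample, mu0)
    hits = 0
    for _ in range(n_sims):
        # A fake sample from a world where the mean really is mu0.
        fake = [rng.gauss(mu0, 1.0) for _ in range(n)]
        if t_statistic(fake, mu0) >= observed:
            hits += 1
    return observed, hits / n_sims


# Hypothetical delivery times in minutes (made up for illustration).
times = [32, 35, 29, 38, 41, 33, 36, 30, 39, 34]

t, p = simulated_p_value(times, mu0=30)
print(f"t = {t:.2f}, one-sided p is roughly {p:.4f}")
```

With these invented numbers the sample mean is 34.7 minutes, the t-statistic comes out at about 3.8, and the simulated p-value lands well below 0.05, so by the usual convention you would reject the claim of a 30-minute average. The simulation is used here instead of a lookup table only because it shows directly what the number counts.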

See also: Nature: Banning p-values not enough to rid science of shoddy statistics

and

Rob Sheldon explains p-value vs. R2 value in research, and why it matters

Oh, and Steven Weinberg defends “Whiggish” history of science. Actually, science has nothing over these other endeavours when the question can be decided by evidence.
