Andre just asked me:

can you please embed a flowchart of how communication works for [XXXX] …

You know the one that goes like this

input encoder medium decoder output.

I don’t think [XXXX] understands the problems such a system has with accidental processes nor does he understand IC. Please KF. With a little bit of luck a light bulb might go on for him.

I don’t know how to embed an image in a comment here at UD, which, for cause, is quite restrictive as a WordPress blog.

Here is my slightly expanded version of the classic diagram used by Shannon (a version of what I usually used in the classroom, sometimes with modulator/demodulator rather than encoder/decoder*):

It’s not too hard to see how peer-level layers are involved, as in a layer-cake; the above just puts the layer-cake on its side.
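The input, encoder, medium, decoder, output chain can be sketched in a few lines of Python. This is only an illustrative toy, not the diagram itself: the 3x repetition code, the ASCII source coding and every function name here are assumptions chosen for brevity.

```python
def text_to_bits(text):
    """Input side: map each character to 8 bits (ASCII assumed)."""
    return [int(b) for ch in text.encode("ascii") for b in format(ch, "08b")]

def bits_to_text(bits):
    """Output side: inverse of text_to_bits."""
    chars = [int("".join(map(str, bits[i:i + 8])), 2) for i in range(0, len(bits), 8)]
    return bytes(chars).decode("ascii")

def encode(bits, n=3):
    """Encoder: repeat every bit n times (a simple repetition code)."""
    return [b for b in bits for _ in range(n)]

def channel(symbols, flip_positions=()):
    """Medium: flip the bits at the given positions to stand in for noise."""
    flips = set(flip_positions)
    return [s ^ 1 if i in flips else s for i, s in enumerate(symbols)]

def decode(symbols, n=3):
    """Decoder: majority vote over each group of n received bits."""
    return [int(sum(symbols[i:i + n]) > n // 2) for i in range(0, len(symbols), n)]

def communicate(message, flip_positions=()):
    """Run a message through the whole chain and return what the sink sees."""
    sent = encode(text_to_bits(message))
    received = channel(sent, flip_positions)
    return bits_to_text(decode(received))
```

With one flipped bit per group of three the decoder still recovers the message; with two flips in the same group it does not. Either way, the decoder only works because it matches the encoder's rule.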

But just for completeness, here is a picture of the OSI network model with two intermediate nodes in a communication subnet:

In this, we see how at different layers, there is a virtual direct communication between peers but this is actually accomplished by interfaces up and down the stacks with physical transmission being at the lowest level.
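The "virtual" peer-to-peer link versus the actual path up and down the stacks can be illustrated with a toy encapsulation sketch. The four-layer stack and the bracketed text headers below are simplifying assumptions, not the seven OSI layers or any real frame format.

```python
# Simplified stack, listed top to bottom (an assumption for illustration).
LAYERS = ["application", "transport", "network", "link"]

def send_down(payload, layers=LAYERS):
    """Going down the stack, each layer wraps what it received in its own header."""
    frame = payload
    for name in layers:
        frame = f"[{name}]{frame}"
    return frame

def receive_up(frame, layers=LAYERS):
    """Going up the stack, each layer strips the header written by its peer."""
    for name in reversed(layers):
        header = f"[{name}]"
        if not frame.startswith(header):
            raise ValueError(f"{name} layer cannot parse frame: {frame!r}")
        frame = frame[len(header):]
    return frame

wire = send_down("hello")
print(wire)              # the only thing that physically travels
print(receive_up(wire))  # what the receiving application sees
```

Each layer reads only the header written by its peer on the other side, which is the sense in which peers communicate "directly" even though only the lowest layer touches the medium.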

This can be made generic, as the encoder-decoder pairs can be multi-layer.

Let’s add a generic layer-cake model framework:

Likewise, the process logic imposes irreducible complexity: once we have a code and need to transmit and receive then decode, the basic core units to carry out the tasks will be necessary.

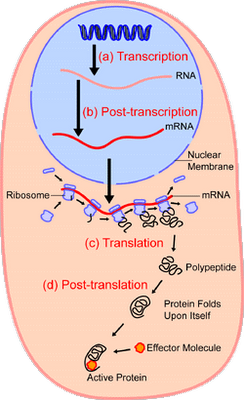

We may then compare the protein synthesis process, first in overview:

. . . then as a video:

[vimeo 31830891]

. . . noting a comparison to punched paper tape that brings out the role of functionally specific complex organisation and associated information:

And if you don’t think this is relevant, have a little chat with Dr Hubert P Yockey, via his Information Theory, Evolution, and the Origin of Life (Cambridge University Press, 2005), which I annotate:

U/D May 30: to aid in interpreting the Yockey analysis/Mapping, here is an annotated form of the Shannon model of 1948, which subsumes all functions in “transmitter” and “receiver” blocks:

(NB: Cf Royal Truman’s discussion, here.)

I think Gitt’s version of a layer-cake model is also instructive:

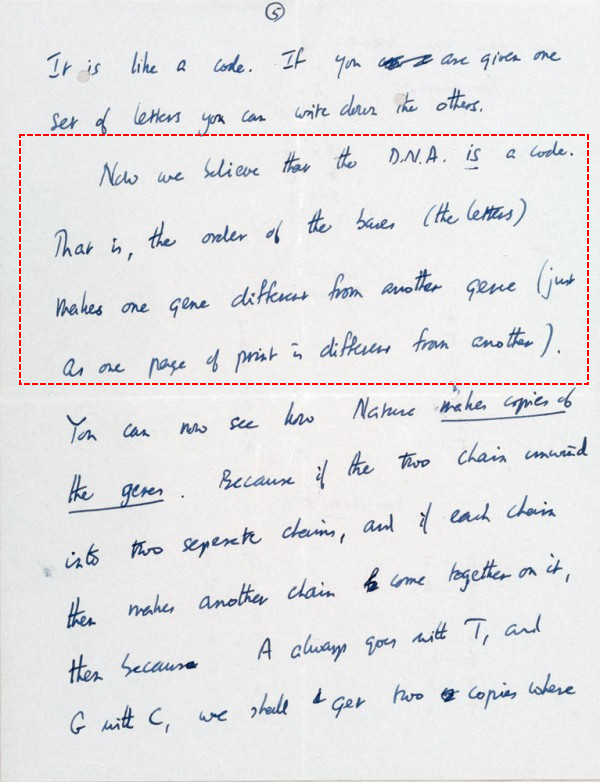

And finally, let me quote from Crick’s March 19, 1953 letter to his son, Michael:

I trust these will help us understand what is involved in/implied by the discovery that coded information is involved in the world of cell-based life, along with translation, storage, sending, numerically controlled assembly and linked functionally specific complex organisation and associated information.

Of course, I expect this to make but little impression on those determined to dismiss the evident coded information in the heart of the cell, but that will send a clear message to the rest of us as to what is really going on. END

* Oddly, one of those students is now a Minister with responsibility for telecommunications.

PS: Let’s clip an onward, instructive exchange of comments, XXXX having long since vanished once the above was put up:

G, 555: >> carpathian: There are actually no exceptions at all but the data carried by the medium, is completely unrelated to how it is delivered.

M: >>the stereochemical hypothesis

…the first models of the genetic code were all based on the stereochemical hypothesis, the idea that the coding rules are dictated by chemical relationships in three dimensions, whereas language is made of arbitrary conventions. Eventually, however, the stereochemical hypothesis had to be abandoned because it became clear that the rules of the genetic code are not the result of chemical necessity. In this sense they are as arbitrary as the rules of language, and this makes us realize that at the molecular level there is not only recursion but also arbitrariness.

– Marcello Barbieri, Code Biology>>

A: >>Do people know what protocol actually mean or are we going to differ on its meaning? >>

M: >>Hi Andre, We’re trying to define a protocol for how we will go about defining the meaning of protocol.

Any suggestions?>>

K: >>the general and relevant meanings are readily accessible and clips have been given. Relevant here is a Wiki clip:

In telecommunications, a communication protocol is a system of rules that allow two or more entities of a communication system to communicate between them to transmit information via any kind of variation of a physical quantity. [–> notice, generality, which goes beyond particular discrete-state cases, albeit such cases are particularly important in creating the concept behind the term] These are the rules or standard that defines the syntax, semantics and synchronization of communication and possible error recovery methods. Protocols may be implemented by hardware, software, or a combination of both.[1]

Communicating systems use well-defined formats (protocol) for exchanging messages. [–> Format, of course, extends beyond discrete state, and in fact all real-world signals are analogue [consider impacts of power supply glitches and the role of decoupling capacitors if you doubt me], subject to certain imposed standards and thresholds.] Each message has an exact meaning intended to elicit a response from a range of possible responses pre-determined for that particular situation. The specified behavior is typically independent of how it is to be implemented. Communication protocols have to be agreed upon by the parties involved.[2] To reach agreement, a protocol may be developed into a technical standard. A programming language describes the same for computations, so there is a close analogy between protocols and programming languages: protocols are to communications as programming languages are to computations.[3]

In recent times digital comms has dominated, and handshaking too, so I think there is a tendency towards an overly specific focus. The blunder above by an objector, on User Datagram PROTOCOL (it’s right there in the name), is emblematic.

Wiki is again instructive, speaking against known ideological bias:

The User Datagram Protocol (UDP) is one of the core members of the Internet protocol suite. The protocol was designed by David P. Reed in 1980 and formally defined in RFC 768.

UDP uses a simple connectionless transmission model with a minimum of protocol mechanism. It has no handshaking dialogues, and thus exposes any unreliability of the underlying network protocol to the user’s program. There is no guarantee of delivery, ordering, or duplicate protection. UDP provides checksums for data integrity, and port numbers for addressing different functions at the source and destination of the datagram.

With UDP, computer applications can send messages, in this case referred to as datagrams, to other hosts on an Internet Protocol (IP) network without prior communications to set up special transmission channels or data paths. UDP is suitable for purposes where error checking and correction is either not necessary or is performed in the application, avoiding the overhead of such processing at the network interface level. Time-sensitive applications often use UDP because dropping packets is preferable to waiting for delayed packets, which may not be an option in a real-time system.[1] If error correction facilities are needed at the network interface level, an application may use the Transmission Control Protocol (TCP) or Stream Control Transmission Protocol (SCTP) which are designed for this purpose.
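To make the "minimum of protocol mechanism" concrete, here is a rough sketch of the 8-byte UDP header laid out in RFC 768: four 16-bit big-endian fields for source port, destination port, length and checksum. The helper names are hypothetical, and the checksum is simply left at zero (which, over IPv4, signals that the sender did not compute one) rather than calculated.

```python
import struct

# RFC 768 header: source port, destination port, length, checksum (16 bits each).
UDP_HEADER = struct.Struct("!HHHH")

def build_datagram(src_port, dst_port, payload, checksum=0):
    """Prefix the payload with a UDP header; length covers header plus data."""
    length = UDP_HEADER.size + len(payload)
    return UDP_HEADER.pack(src_port, dst_port, length, checksum) + payload

def parse_datagram(datagram):
    """Split a datagram back into header fields and payload."""
    src_port, dst_port, length, checksum = UDP_HEADER.unpack_from(datagram)
    return {
        "src_port": src_port,
        "dst_port": dst_port,
        "length": length,
        "checksum": checksum,
        "payload": datagram[UDP_HEADER.size:length],
    }

datagram = build_datagram(5000, 53, b"ping")
print(datagram.hex())
print(parse_datagram(datagram))
```

Even with no handshake, sender and receiver must agree on field order, field width and byte order before the bytes mean anything.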

The protocol context is that of interaction and a standardised programme of correct behaviour. That’s why the term was borrowed from the context of diplomacy, courts of law and royal courts. There is no reason to confine it to discrete state cases — which in reality are also analogue anyway.

As my response to your diagram request shows, the logic of what is going on is primary, attached labels, secondary:

1 –> To communicate there must be a co-ordinated in-commonness of corresponding elements . . . hence

2 –> the natural emergence of layered peer units in the comms system. As well,

3 –> we naturally have start point and destination and wish to send an understandable message.

4 –> This leads to standards, specifications and co-ordination regarding:

– transduction,

– modulation and/or encoding,

– ports/interfaces [at all sorts of levels, well do I recall incoming/outgoing specs for TTL, CMOS & ECL logic and for UARTs],

– power amplification and coupling to a channel/medium,

– detection of a message at the receiving end,

– demod and decoding,

– presentation to the sink.

5 –> All of this naturally leads to a need for standards within a comms system, and standardisation naturally tends to spread where there is an incentive to be in mutual communication, e.g. the spreading of AM radio and stereophonic records [the fate of quadraphonic records is instructive on failure to meet reasonable accord].

6 –> Terms for such standards, such as codes, modulation systems and protocols are secondary to the underlying realities they describe.

7 –> The dividing line here is that

– codes address content that uses discrete state elements [e.g. alphanumeric characters, codons for genes, binary digits],

– protocols are concerned with setting up co-ordinated communication with due regard to the natural layer-cake effect, and

– mod/demod is concerned with encapsulating, sending, propagating and receiving then recovering signals in the midst of noise (and having regard to bandwidth and channel capacity issues).

8 –> The upshot is that once communication becomes a significant, non-trivial task, standards and a complex framework of specific rules embedded in the organisation of functional elements lead to a system that is in itself information-rich.

9 –> That is, any complex communication system implies functionally specific complex [irreducibly so in fact — all of the core has to be there and has to be right for the whole to work] organisation and associated information, FSCO/I.

10 –> FSCO/I, per trillions of observed cases, has just one empirically known source, design; a point backed up by the needle-in-haystack blind chance and necessity search challenge, regardless of dismissive rhetoric to the contrary.

11 –> And in the discrete signal case, we further deal with code, which is a manifestation of a phenomenon that in itself strongly points to verbalising intelligence as root cause.

12 –> Where, when we come across entities that manifest FSCO/I like this, the underlying FSCO/I is embedded in the organisation of the system and

13 –> it can therefore in principle be retrieved and measured by analysing the system and subsystems on node-arc networks and devising a reasonable structured chain of Y/N q’s to specify the description.

14 –> The chain length of Y/N q’s then is an index, in functionally specific bits, of the info content of the organisation.

15 –> Such holds for hardware and for software insofar as the latter is embedded in moving or stored signals.
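The arithmetic behind counting a chain of Y/N questions (points 13 and 14 above) can be sketched as follows. This only illustrates the counting step, under the assumption that every alternative is equally weighted and every position in a string is chosen independently; the function names are hypothetical and the sketch says nothing about how "functionally specific" would be judged.

```python
import math

def questions_needed(num_alternatives):
    """Yes/no questions needed to single out one item by repeated halving.

    Equals ceil(log2(N)) for N >= 1, computed with integer arithmetic.
    """
    if num_alternatives < 1:
        raise ValueError("need at least one alternative")
    return (num_alternatives - 1).bit_length()

def string_capacity_bits(length, alphabet_size):
    """Bits to specify a string: length * log2(alphabet size)."""
    return length * math.log2(alphabet_size)

print(questions_needed(64))            # one of 64 codons
print(string_capacity_bits(7, 128))    # seven 7-bit ASCII characters
print(string_capacity_bits(300, 20))   # a 300-residue chain over 20 amino acids
```

Under those assumptions, the length of the question chain and the capacity in bits are the same quantity, up to rounding.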

So, we see how the underlying logic of communication systems of any significant complexity points to design as credible source, due to the embedded FSCO/I.

In the world of the cell, as Yockey summarised in his diagram (cf. the just linked) and as we can see in action in other diagrams and a video there, the protein synthesis system embeds a communication system pivoting on D/RNA: coded string data structures that hold regulatory and assembly instructions as well as the content of such.

Where, too, the proteins, functional RNAs etc that come from the code further show FSCO/I that is remote from the physical-chemical action steps involved in the communication and assembly process. (Think, string chaining –> folding –> agglomeration and activation –> biofunction.)
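The decoding step in that chain, reading an mRNA string three letters at a time and looking each codon up in a table, can be sketched as a simple lookup. The table below is only a small subset of the standard genetic code, and the function name and "STOP" marker are illustrative assumptions; real translation involves tRNAs, ribosomes and much else that a dictionary does not capture.

```python
# Subset of the standard genetic code (mRNA codons), for illustration only.
CODON_TABLE = {
    "AUG": "Met",  # also the usual start codon
    "UUU": "Phe", "UUC": "Phe",
    "GGU": "Gly", "GGC": "Gly", "GGA": "Gly", "GGG": "Gly",
    "AAA": "Lys",
    "UGG": "Trp",
    "UAA": "STOP", "UAG": "STOP", "UGA": "STOP",
}

def translate(mrna):
    """Read codons in frame from position 0 until a stop codon or end of string."""
    peptide = []
    for i in range(0, len(mrna) - 2, 3):
        codon = mrna[i:i + 3]
        residue = CODON_TABLE[codon]  # KeyError for codons outside the subset
        if residue == "STOP":
            break
        peptide.append(residue)
    return peptide

print(translate("AUGUUUGGCUAA"))
```

The lookup table plays the role of the decoder: the string alone does not say which amino acid a codon stands for.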

Next, for proteins and proteinaceous enzymes [I here distinguish ribozymes], the functionally relevant configs are deeply isolated in AA sequence space, and for that matter AA-AA peptide bonds are themselves not the only chemically relevant possibilities in play.

Now, it is quite evident that cumulatively such strongly points to intelligently directed highly skilled configuration as cause, i.e. design.

But, it is predictable that such a conclusion will be stoutly resisted by all sorts of rhetorical artifices, due to a priori commitment to evolutionary materialism.

If you doubt me, note Lewontin in his notorious 1997 NYRB remark:

It is not that the methods and institutions of science somehow compel us to accept a material explanation of the phenomenal world, but, on the contrary, that we are forced by our a priori adherence to material causes [–> another major begging of the question . . . ] to create an apparatus of investigation and a set of concepts that produce material explanations, no matter how counter-intuitive, no matter how mystifying to the uninitiated. Moreover, that materialism is absolute [–> i.e. here we see the fallacious, indoctrinated, ideological, closed mind . . . ], for we cannot allow a Divine Foot in the door. [Those tempted to cry the accusation of quote-mining should see the annotated fuller cite as linked.]

Until that ideological bewitchment is exposed, highlighted as a crude fallacy, and becomes utterly untenable as a violation of the vision of seeking empirically warranted truth that gave science the credibility it had, there will be no willingness to receive anything counter to such ideological closed-mindedness, no matter how compelling.

Hence my emphasis on showing the facts and inviting the reasonable onlooker to see for him- or herself what is going on.>>

No exceptions to non-baseband digital communications links such as Ethernet exist? [–> cf. here & here] How so? Point us to some knowledgeable source on that, please.

This again in no way suggests that a protocol is present.

???? [–> i.e. once signals and messages must conform to a specific format for transmitter-receiver compatibility, a protocol, even if not formally specified by some authority, must exist]

The presence of information is not related in any way to the protocol.

Why the hang-up on protocols? This is nothing but deflection. I made a wide-angle statement that all machinery around the exchange of information is itself built on information. Whether that machinery is built on software/hardware or hardware only should present no either/or scenario to grapple with. This is all obfuscation based around software engineering, which can be dispensed with. You made a statement that, vague as it reads, seems to say that a phonograph record and playback apparatus is not used in information transfer. If that is what is being said, it is bogus.

Making the argument dependent on a software engineering point of view is obfuscation.>>