G2 has made an objection at 45 in the STP 3 thread, claiming that UD is a philosophy-theology site and that he sees no science advances. I think it worth the while to highlight a response as a headlined post supporting the STP 3 thread, with added features such as images. You are invited to comment there, from here on:

________________

>>G2:

I see your @ 45: "Can we just accept that UncommonDescent is a philosophy/theology site? I'm still waiting for the big advances in ID."

Neat little dismissive rhetorical shot, nuh, it’s all over.

Not so fast.

If we are to reason accurately and soundly, we have to have the first principles of right reason set straight.

Where, as it turns out, there is a big problem: those inclined to or indoctrinated in evolutionary materialism reject, evade or self-servingly try to redefine such principles. That has come out here at UD [after literally years of back-and-forth exchanges on pivotal scientific issues raised by ID, such as the significance of FSCO/I as originally raised by Orgel and Wicken in the 1970s . . . your strawman dismissal fails . . . ] as a consequence of trying to figure out why we have had such intractable exchanges.

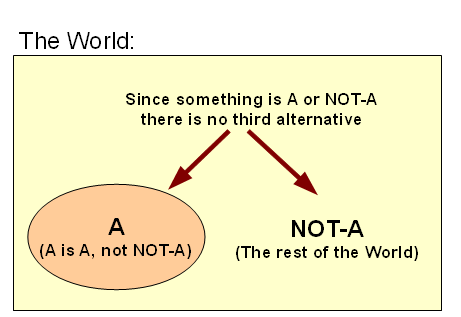

It was particularly seen that quantum theory was put forth as undermining the principles of distinct identity, non-contradiction/non-confusion and the excluded middle state. That ended up in a new WAC with a unique extension that gives more details, linked in the opening paragraph of the OP. (It would have been helpful for you to have read the OP before trying a dismissive sniping rhetorical shot.)

Let me therefore clip the opening remarks in the OP:

In our day, it is common to see the so-called Laws of Thought or First Principles of Right Reason challenged or dismissed. As a rule, design thinkers strongly tend to reject this common trend, including when it is claimed to be anchored in quantum theory.

Going beyond, here at UD it is common to see design thinkers saying that rejection of the laws of thought is tantamount to rejection of rationality, and is a key source of endless going in evasive rhetorical circles and refusal to come to grips with the most patent facts; often bogging down attempted discussions of ID issues.

The debate has hotted up over the past several days, and so it is back on the front burner . . .

I suggest to you, that matters of general logic emerge as important in times of scientific crisis, as well as matters where logic and epistemology of science overlap.

On that last, it seems that there is a lurking problem of understanding the nature of inductive reasoning and the status of inductive knowledge claims, especially in a context where abductive reasoning, as an expression of induction, is pivotal. Notice, a crucial step in design thought (and in wider science) is the principle of inference on empirically tested reliable sign.

The Wiki article on Shoemaker-Levy 9 is helpful in this regard (and in clarifying how that which is real and objective, corrects our initial impressions and concepts):

Observers hoped that the impacts would give them a first glimpse of Jupiter beneath the cloud tops, as lower material was exposed by the comet fragments punching through the upper atmosphere. Spectroscopic studies revealed absorption lines in the Jovian spectrum due to diatomic sulfur (S2) and carbon disulfide (CS2), the first detection of either in Jupiter, and only the second detection of S2 in any astronomical object. Other molecules detected included ammonia (NH3) and hydrogen sulfide (H2S). The amount of sulfur implied by the quantities of these compounds was much greater than the amount that would be expected in a small cometary nucleus, showing that material from within Jupiter was being revealed. Oxygen-bearing molecules such as sulfur dioxide were not detected, to the surprise of astronomers.[19]

As well as these molecules, emission from heavy atoms such as iron, magnesium and silicon was detected, with abundances consistent with what would be found in a cometary nucleus. While substantial water was detected spectroscopically, it was not as much as predicted beforehand, meaning that either the water layer thought to exist below the clouds was thinner than predicted, or that the cometary fragments did not penetrate deeply enough.[20] The relatively low levels of water were later confirmed by Galileo’s atmospheric probe, which explored Jupiter’s atmosphere directly.

Notice, there were characterisations and models of the chemistry of Jupiter’s atmosphere. These come from models on planetary formation, and also from evaluations of spectroscopic studies — lines, bands etc that per Quantum results, serve as signs that tell us about molecular species, and from intensities we may make estimates of concentrations. Such studies were used to infer to the then not directly observed state of certain features of the Jovian atmosphere.

That is, we have certain traces and in this case emanations (IR, Visible, UV spectra) that come from an object of interest. We have an empirically grounded theory of spectroscopy, that allows us to infer that certain causes reliably give off certain effects that can serve as signs of the action of those causes. Actually, that was so even before Quantum Physics explained the details of the spectra on fundamental investigations. For instance, it was known that particular elements give off particular spectral lines, and subtracting away those accounted for, some fresh unaccounted for lines were seen in the Sun’s spectrum. That is how a new element, helium (named after the Sun), was discovered before it was observed here on Earth.

Afterwards, there was a direct probe of Jupiter which was able to more directly observe the state.

All of this pivots on the ability to identify distinct objects, phenomena and states of affairs. Scientists invariably use the first principles of right reasoning in scientific investigations, as foundational to the reasoning processes involved. So, scientific investigations in principle cannot undermine such, without reduction to absurdity. This is not unique, it is so with all reasoned thought. The first principles are truly foundational and undeniably true.

How does all of this tie into the scientific advances made by design thinkers, and to how they are being received or rejected by the evolutionary materialism-dominated schools of thought, their publicists and the more popular level adherents and advocates who hang around in blogs etc?

First, the design inference is a reasoned exercise in inductive logic. As such, it will pivot on willingness to consistently follow canons of right reason, including the three laws of thought already highlighted and a fourth principle — that of sufficient reason (with the corollary, the principle of causality) that I like to put in the terms discussed by Schopenhauer:

FIRST PRINCIPLES OF RIGHT REASON:

— OLD BUSINESS —

1: LOI: A distinct thing, A, is what it is

2: LNC: A distinct thing, A, cannot at once be and not-be

3: LEM: A distinct thing, A, is or it is not, but not both or neither

— NEW BUSINESS —

FPRR, # 4 (per Schopenhauer): PRINCIPLE OF SUFFICIENT REASON (PSR): “Of everything [–> e.g., A] that is, it can be found why it is.” [Manuscript Remains, Vol. 4]

Sounds almost trivial, doesn’t it?

It isn’t.

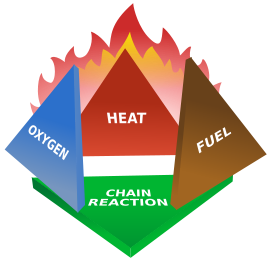

This means that when we see an object, phenomenon or state of affairs, we may properly ask: why is this so? Thence, we come to the point that many things have a beginning or may cease to be and are thus seen to be dependent on external factors, which we call causes. Indeed, taking the example of a fire, we see that if any one or more of heat, fuel, oxidiser and chain reaction are missing or interfered with, a fire cannot start or will cease. (That is how fire fighters work.)

That is, we see on/off switch enabling causal factors.

These stipulate a cluster of necessary conditions for a fire to come to be: all must be “on” or the fire is not possible or sustainable.
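To make the on/off switch picture concrete, here is a minimal sketch in Python. The names `FIRE_FACTORS` and `fire_possible` are hypothetical, introduced only for illustration; the sketch models the four factors as necessary conditions joined by a logical AND, and makes no claim that having all of them "on" is by itself sufficient for a fire to start.

```python
# Hypothetical illustration: each enabling factor is an on/off switch.
FIRE_FACTORS = ("heat", "fuel", "oxidiser", "chain_reaction")


def fire_possible(state):
    """Return True only if every enabling factor is 'on' (True).

    A factor that is missing from `state` is treated as 'off'.
    """
    return all(state.get(factor, False) for factor in FIRE_FACTORS)


# All four switches on: the necessary conditions are met.
all_on = {factor: True for factor in FIRE_FACTORS}

# Knock out one switch, as a fire fighter does by smothering a fire.
no_oxidiser = {**all_on, "oxidiser": False}

print(fire_possible(all_on))       # True
print(fire_possible(no_oxidiser))  # False
```

Switching off any single factor is enough to change the result, which is what it means for each factor to be a necessary condition.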

Such extends to bigger things, including red balls, Jupiter, the Sun and solar system, the observed cosmos, and cell-based life in it. That which begins or may cease to be, is contingent on enabling on/off causal factors, i.e. it is an effect and is caused. That’s the corollary to the principle of sufficient reason.

Let’s highlight it:

4a, Corr to PSR: PRINCIPLE OF CAUSALITY: That which begins or may cease to be, is contingent on enabling on/off causal factors, i.e. it is an effect and is caused.

As a further corollary, it is evident that for there to be an effect there must be a sufficient, adequate cluster of causal factors, including at least all the on/off enabling factors. Causal adequacy and sufficiency are pivotal to understanding the nature of phenomena, objects and states of affairs.

Let us highlight:

4b, Corr to PSR: PRINCIPLE OF REQUIRED ADEQUACY OF CAUSE FOR AN EFFECT TO OCCUR: for there to be an effect there must be a sufficient, adequate cluster of causal factors, including at least all the on/off enabling factors.

But, a subtler point lurks.

What of the possibility of entities that do not have enabling on/off factors? (That is, that are not contingent beings?)

We have arrived at the possibility of necessary beings, beings that have no external dependence on enabling factors, and as such would not have a beginning, and cannot cease from being. These would exist in all possible worlds, including the actual one we inhabit.

For instance, the truth asserted in “2 + 3 = 5” is such a necessary being.

So also, we now see two modes of being (and imply a mode of non-being). There are contingent beings, necessary beings, and impossible beings. An example of the last would be a square circle, which is a contradiction in terms and inherently cannot exist.

Let’s highlight:

5: POSSIBILITY/ACTUALITY OF NECESSARY BEINGS: A serious candidate to be a necessary being will be credibly non-contingent, and will be either impossible or possible. If impossible, there is a reason why it cannot be; and if possible, there is at least one possible world in which it would be actual. But as such, a serious candidate would be in all possible worlds if it is in any possible world (think about why). So: if not impossible, then possible; and if possible, then actual.

Yes, this is the much-despised philosophy in action, and it has implications for both theologies and anti-theologies.

Yes, this is the often derided and dismissed philosophy, but it is about how logic is at the root of any serious discussion, including those in science.

Yes, this is the often impatiently brushed aside philosophy, but it has direct implications for our scientific discussion of origins and causes of origins, relevant to origin of the cosmos, origin of cell-based life in it, and of body plans, including our own.

In short, it is not something we may wisely and safely ignore. And, ignorance of it may well lead us to think and stubbornly, irrationally cling to foolish things about important topics.

As one immediate application, our observed cosmos credibly had a beginning and shows ever so many signs of being contingent and fine-tuned in ways that support cell-based life. That points to an underlying necessary being at the root of that cosmic reality that is causally sufficient and powerful enough to create such a cosmos, even in the face of multiverse speculations. (The comfortable days of being able to appeal to the Steady State theory as showing that it was possible that the observed cosmos as a whole is that necessary being are long gone.)

In terms of origin of cell based life and body plans, these four principles of right reason point to the significance of functionally specific complex organisation and associated information [FSCO/I] as an apt description of a phenomenon found in the living cell and in complex body plans, as a trace that comes from the origin of same, and so they point to the need for a causally adequate explanation for same.

Lest some be tempted to brush such aside as a figment of the imagination of a particularly idiotic ID supporter (yes, we know the childish schoolyard taunts that are ever so common in hostile or outright hate sites), let me cite from the IOSE course that so many wish to brush aside as nonsense. It records what three key origins research figures, who are by no means to be seen as ID movement members or Creationists in any material sense of the term, had to say across the 1970s and into the early 1980s, i.e. immediately antecedent to, and obviously enabling factors for, the rise of the modern design theory from the mid 80s on:

The observation-based principle that complex, functionally specific information/organisation is arguably a reliable marker of intelligence and the related point that we can therefore use this concept to scientifically study intelligent causes will play a crucial role . . . For, routinely, we observe that such functionally specific complex information and related organisation come, directly [[drawing a complex circuit diagram by hand] or indirectly [[a computer generated speech (or, perhaps: talking in one’s sleep)], from intelligence.

In a classic 1979 comment, well known origin of life theorist J S Wicken wrote:

‘Organized’ systems are to be carefully distinguished from ‘ordered’ systems. Neither kind of system is ‘random,’ but whereas ordered systems are generated according to simple algorithms [[i.e. “simple” force laws acting on objects starting from arbitrary and commonplace initial conditions] and therefore lack complexity, organized systems must be assembled element by element according to an [[originally . . . ] external ‘wiring diagram’ with a high information content . . . Organization, then, is functional complexity and carries information. It is non-random by design or by selection, rather than by the a priori necessity of crystallographic ‘order.’ [[“The Generation of Complexity in Evolution: A Thermodynamic and Information-Theoretical Discussion,” Journal of Theoretical Biology, 77 (April 1979): p. 353, of pp. 349-65. (Emphases and notes added. Nb: “originally” is added to highlight that for self-replicating systems, the blueprint can be built-in.)]

The idea-roots of the term “functionally specific complex information” [FSCI] are plain: “Organization, then, is functional[[ly specific] complexity and carries information.”

[–> Wicken, patently, is not an ID thinker nor a Creationist, FYI; nor is he a no-account bloggist who can be dismissed without further thought. So much for the figment of the imagination rhetoric we have seen in recent days, without the decency to retract such when corrected.]

Similarly, as early as 1973, Leslie Orgel, reflecting on Origin of Life, noted:

. . . In brief, living organisms are distinguished by their specified complexity. Crystals are usually taken as the prototypes of simple well-specified structures, because they consist of a very large number of identical molecules packed together in a uniform way. Lumps of granite or random mixtures of polymers are examples of structures that are complex but not specified. The crystals fail to qualify as living because they lack complexity; the mixtures of polymers fail to qualify because they lack specificity. [[The Origins of Life (John Wiley, 1973), p. 189.]

[–> again we see the trichotomy of basic causes, across mechanical necessity giving rise to order, randomness or chance and by implication the ART-ificial act of explicit intelligent design and/or selection]

Thus, the concept of complex specified information (especially in the form functionally specific complex organisation and associated information [FSCO/I]) is NOT a creation of design thinkers like William Dembski. Instead, it comes from the natural progress and conceptual challenges faced by origin of life researchers, by the end of the 1970s.

Indeed, by 1982, the famous, Nobel-equivalent prize winning Astrophysicist (and life-long agnostic) Sir Fred Hoyle, went on quite plain public record in an Omni Lecture:

Once we see that life is cosmic it is sensible to suppose that intelligence is cosmic. Now problems of order, such as the sequences of amino acids in the chains which constitute the enzymes and other proteins, are precisely the problems that become easy once a directed intelligence enters the picture, as was recognised long ago by James Clerk Maxwell in his invention of what is known in physics as the Maxwell demon. The difference between an intelligent ordering, whether of words, fruit boxes, amino acids, or the Rubik cube, and merely random shufflings can be fantastically large, even as large as a number that would fill the whole volume of Shakespeare’s plays with its zeros. So if one proceeds directly and straightforwardly in this matter, without being deflected by a fear of incurring the wrath of scientific opinion, one arrives at the conclusion that biomaterials with their amazing measure or order must be the outcome of intelligent design. No other possibility I have been able to think of in pondering this issue over quite a long time seems to me to have anything like as high a possibility of being true. [[Evolution from Space (The Omni Lecture[ –> Jan 12th 1982]), Enslow Publishers, 1982, pg. 28.]

So, we first see that by the turn of the 1980s, scientists concerned with origin of life and related cosmology recognised that the information-rich organisation of life forms was distinct from simple order and required accurate description and appropriate explanation. To meet those challenges, they identified something special about living forms, CSI and/or FSCO/I. As they did so, they noted that the associated “wiring diagram” based functionality is information-rich, and traces to what Hoyle already was willing to call “intelligent design,” and Wicken termed “design or selection.” By this last, of course, Wicken plainly hoped to include natural selection.

But the key challenge soon surfaces: what happens if the space to be searched and selected from is so large that islands of functional organisation are hopelessly isolated relative to blind search resources?

[–> Since there is an astonishing attempt to dismiss the concept of such islands of co-ordinated function in configuration spaces, kindly cf. here]

For, under such “infinite monkey” circumstances, searches based on random walks from arbitrary initial configurations will be maximally unlikely to find such isolated islands of function. As the crowd-source Wikipedia summarises (in testimony against its ideological interest compelled by the known facts):

The text of Hamlet contains approximately 130,000 letters. Thus there is a probability of one in 3.4 × 10^183,946 to get the text right at the first trial. The average number of letters that needs to be typed until the text appears is also 3.4 × 10^183,946, or including punctuation, 4.4 × 10^360,783.

Even if the observable universe were filled with monkeys typing from now until the heat death of the universe, their total probability to produce a single instance of Hamlet would still be less than one in 10^183,800. As Kittel and Kroemer put it, “The probability of Hamlet is therefore zero in any operational sense of an event…”, and the statement that the monkeys must eventually succeed “gives a misleading conclusion about very, very large numbers.” This is from their textbook on thermodynamics, the field whose statistical foundations motivated the first known expositions of typing monkeys.[3]

So, once we are dealing with something that is functionally specific and sufficiently complex, trial-and-error blind selection on a random walk is increasingly implausible as an explanation, compared to the routinely observed source of such complex, functional organisation: design. Indeed, beyond a certain point, the odds of trial and error on a random walk succeeding fall to a “practical” zero . . .
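The letters-only Hamlet figure cited above can be checked with a few lines of Python. This is a minimal sketch under stated assumptions: a 26-key typewriter (letters only, no punctuation or spaces), each keystroke uniformly random and independent, and a text of 130,000 letters as quoted. The function names are hypothetical, and logarithms are used because the number itself is far too large for ordinary floating point.

```python
import math

ALPHABET_SIZE = 26      # assumption: letters only, every key equally likely
TEXT_LENGTH = 130_000   # approximate letter count of Hamlet, as quoted


def log10_sequences(alphabet_size, length):
    """log10 of the number of distinct strings of the given length."""
    return length * math.log10(alphabet_size)


def as_scientific(log10_value):
    """Split a log10 value into (mantissa, exponent) of scientific notation."""
    exponent = math.floor(log10_value)
    mantissa = 10 ** (log10_value - exponent)
    return mantissa, exponent


mantissa, exponent = as_scientific(log10_sequences(ALPHABET_SIZE, TEXT_LENGTH))

# The chance of typing the text correctly on the first trial is one in this number.
print(f"about {mantissa:.1f} x 10^{exponent:,}")  # about 3.4 x 10^183,946
```

The figure that includes punctuation depends on a larger key set and character count that the clip does not state, so it is not reproduced here.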

In short, these derided or dismissed philosophical questions lie close to the heart of why design theory results, and the advances in the state of science they offer, are too often stoutly resisted by those who should welcome them and carry them further forward.

Perhaps, the time has come to think again, G2?

KF >>

________________

So now, back to stirring the pot, from here on. END