Not so.

With all due respect, EL’s error here is a case of failure to think through the inductive logic of abductive inference to best explanation on a tested, reliable sign.

(And indeed the statistics of Type I/II error extend that to cases of known percentage reliability, especially when multiple aspects or signs are involved that each have reasonable reliability: the odds of several reasonably independent tests, n of them, all being wrong in the same way fall away rather quickly. For simplicity, say the odds of being right, p, are the same for each test, so that each is wrong with probability 1 – p; the probability of all n tests being wrong the same way would then be like (1 – p)^n. This is BTW the basis for correcting Hume’s error on witness to miracles: if we put the odds of error in recognising a friend sitting to supper and conversing with us at 0.1% . . . not coincidentally, about once in three years of daily encounters . . . then 11 witnesses to, say, the risen Christ would arguably have collective odds of error [10^-3]^11 ~ 10^-33. A fairly safe bet, especially as collective illusion or conspiracy to lie their way into collective martyrdom would be rather unlikely. [Cf. here on.])
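
As a rough numerical check of the (1 – p)^n estimate, here is a minimal Python sketch. It assumes, as the simplification does, that every test has the same probability p of being right and that the tests are independent; the function name `joint_error_probability` is purely illustrative. If the errors share a common cause, the simple product no longer applies.

```python
def joint_error_probability(p: float, n: int) -> float:
    """Probability that n independent tests, each right with probability p,
    are all wrong at once: (1 - p) ** n. Independence is an assumption."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must lie in [0, 1]")
    if n < 0:
        raise ValueError("n must be non-negative")
    return (1.0 - p) ** n


# Figures used in the text: 0.1% error per witness, 11 witnesses.
per_witness_error = 1e-3
collective = joint_error_probability(1.0 - per_witness_error, 11)
print(f"{collective:.1e}")
```

With those inputs the result is on the order of 10^-33, in line with the estimate given.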

Okay, let’s pause and keep track of this series so far:

FYI-FTR: Part 4, What about Paley’s self-replicating watch thought exercise?

FYI-FTR: Part 5, on evolutionary materialism, can a designer even exist?

Consider a putative deer track:

Now, it is possible for such to be made up, or perhaps there is another animal out there with a track-mark that looks remarkably like that left by a deer.

But, so long as a deer is possible, the reasonable inference is, that this comes from a deer. Of course, should countervailing evidence appear of sufficient weight, that would be surrendered. That is implied in any process of inductive reasoning.

It’s worth a pause to bring that out, via the Stanford Encyclopedia of Philosophy:

>>An inductive logic is a system of evidential support that extends deductive logic to less-than-certain inferences. For valid deductive arguments the premises logically entail the conclusion, where the entailment means that the truth of the premises provides a guarantee of the truth of the conclusion. Similarly, in a good inductive argument the premises should provide some degree of support for the conclusion, where such support means that the truth of the premises indicates with some degree of strength that the conclusion is true. Presumably, if the logic of good inductive arguments is to be of any real value, the measure of support it articulates should meet the following condition:

Criterion of Adequacy (CoA):

As evidence accumulates, the degree to which the collection of true evidence statements comes to support a hypothesis, as measured by the logic, should tend to indicate that false hypotheses are probably false and that true hypotheses are probably true.>>

That is, on observable evidence, a conclusion is drawn that has some strength of support but which has an inescapably provisional character. (In that more modern context of understanding induction [as opposed to the traditional inference from particular cases to a general conclusion . . . ], abductive inferences to best current explanation can be seen as a type of inductive argument.)

Scientific thinking, classically, is inductive, as we can see from Newton in Query 31 to his Opticks, though of course his terminology is archaic:

>>As in Mathematicks, so in Natural Philosophy, the Investigation of difficult Things by the Method of Analysis, ought ever to precede the Method of Composition. This Analysis consists in making Experiments and Observations, and in drawing general Conclusions from them by Induction, and admitting of no Objections against the Conclusions, but such as are taken from Experiments, or other certain Truths. For Hypotheses are not to be regarded in experimental Philosophy. And although the arguing from Experiments and Observations by Induction be no Demonstration of general Conclusions; yet it is the best way of arguing which the Nature of Things admits of, and may be looked upon as so much the stronger, by how much the Induction is more general. And if no Exception occur from Phaenomena, the Conclusion may be pronounced generally. But if at any time afterwards any Exception shall occur from Experiments, it may then begin to be pronounced with such Exceptions as occur. By this way of Analysis we may proceed from Compounds to Ingredients, and from Motions to the Forces producing them; and in general, from Effects to their Causes, and from particular Causes to more general ones, till the Argument end in the most general. This is the Method of Analysis: And the Synthesis consists in assuming the Causes discover’d, and establish’d as Principles, and by them explaining the Phaenomena proceeding from them, and proving the Explanations. [Emphases added.]>>

(You may recognise in this an early statement of the school level “scientific method.” Though, in fact, such inductive reasoning is not peculiar to science and philosophers of science commonly warn us that there is no one size fits all method that all and only scientists use.)

I think a graphic will be helpful:

So, we may now take a more balanced view of testing the design inference on signs such as functionally specific, complex organisation and associated information [FSCO/I], especially digitally coded functionally specific information [dFSCI] as we find in, say DNA:

A code is an integral part of a wider information system, so let us note the general layer-cake architecture for such a system . . . which obviously also exhibits an irreducible core of components organised in a particular way in order to carry through the logic of coded communication from context A and point X to obtain the decoded (perhaps noise-degraded) message A* –> B at point Y:

So, it is no surprise to observe Hubert Yockey’s interpretation of protein synthesis:

. . . in this light:

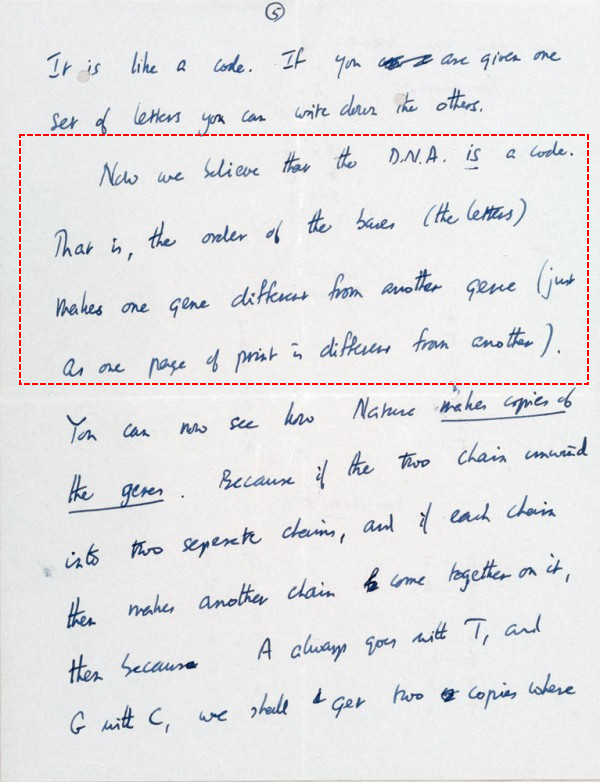

But, dFSCI and wider FSCO/I are a commonplace in today’s technological world. And, before that, people were familiar with alphanumeric characters and messages based on strings of such characters, as we may see from — and yes, I insist on hammering home this example — Crick’s famous March 19, 1953 letter to his son Michael which exemplifies such communication even as it extends to recognising the presence of code in DNA:

Now, we have trillions of cases in point where we routinely observe or have reliable report of the causal process that generates dFSCI (and FSCO/I): intelligently directed configuration.

AKA, design.

Indeed, such is the only actually observed cause, which is just what the vera causa (adequate cause) principle demands before one draws a specific causal conclusion.

Therefore, with all due recognition of the limitations of inductive reasoning, we are entitled to infer with some confidence that dFSCI and wider FSCO/I are reliable signs of design as causal process.

Signs, that then raise the further challenge posed so aptly by Paley: “[c]ontrivance must have had a contriver; design, a designer . . .”

Can this be tested?

Patently, yes, that is implicit in the vera causa principle: if it can be shown in the here and now that significantly complex cases of FSCO/I and particularly dFSCI can and do — per reliable observation — come about by blind chance and mechanical necessity, then that would force re-evaluation of such as signs of design.

In former years, that was commonly accepted by objectors in and around UD.

But after dozens of attempts consistently turned out to illustrate instead ways that FSCO/I-rich items can come about by intelligently directed configuration (including someone’s “clocks world” YouTube video that failed to realise how much fine tuning is involved, a discussion of sketches of perceived canals on Mars, and of course endless cases of quite intelligently designed genetic algorithms that are hill-climbing in a loose sense, etc.), such attempts seem to have by and large been abandoned.

As just one case of a real world test (which has been pointed out many times here at UD), here is Wiki testifying against interest on random document generation:

>>One computer program run by Dan Oliver of Scottsdale, Arizona, according to an article in The New Yorker, came up with a result on August 4, 2004: After the group had worked for 42,162,500,000 billion billion monkey-years, one of the “monkeys” typed, “VALENTINE. Cease toIdor:eFLP0FRjWK78aXzVOwm)-‘;8.t” The first 19 letters of this sequence can be found in “The Two Gentlemen of Verona”. Other teams have reproduced 18 characters from “Timon of Athens”, 17 from “Troilus and Cressida”, and 16 from “Richard II”.[24]

A website entitled The Monkey Shakespeare Simulator, launched on July 1, 2003, contained a Java applet that simulates a large population of monkeys typing randomly, with the stated intention of seeing how long it takes the virtual monkeys to produce a complete Shakespearean play from beginning to end. For example, it produced this partial line from Henry IV, Part 2, reporting that it took “2,737,850 million billion billion billion monkey-years” to reach 24 matching characters:

- RUMOUR. Open your ears; 9r"5j5&?OWTY Z0d…

Due to processing power limitations, the program uses a probabilistic model (by using a random number generator or RNG) instead of actually generating random text and comparing it to Shakespeare. When the simulator “detects a match” (that is, the RNG generates a certain value or a value within a certain range), the simulator simulates the match by generating matched text.>>
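
To put the monkey-year figures quoted above in perspective, here is a minimal sketch of the underlying arithmetic. It assumes a uniformly random typist over `alphabet_size` equally likely keys; the default of 27 (letters plus space) is an illustrative assumption, not a figure from the quoted article, and the function names are hypothetical.

```python
def match_probability(k: int, alphabet_size: int = 27) -> float:
    """Chance that k uniformly random keystrokes equal a given k-character target."""
    return (1.0 / alphabet_size) ** k


def expected_attempts(k: int, alphabet_size: int = 27) -> int:
    """Mean number of independent k-keystroke tries up to the first match
    (mean of a geometric distribution, i.e. 1 / probability)."""
    return alphabet_size ** k


# Match lengths mentioned in the quoted report.
for k in (16, 19, 24):
    print(k, expected_attempts(k))
```

Each extra matched character multiplies the expected number of tries by the alphabet size, which is consistent with the reported matches topping out at about two dozen characters.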

Now, notice what they go on to say:

>>More sophisticated methods are used in practice for natural language generation. If instead of simply generating random characters one restricts the generator to a meaningful vocabulary and conservatively following grammar rules, like using a context-free grammar, then a random document generated this way can even fool some humans (at least on a cursory reading) as shown in the experiments with SCIgen, snarXiv, and the Postmodernism Generator.>>
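
A toy version of what that passage describes can be sketched in a few lines of Python. The grammar and vocabulary below are made up for illustration (they are not taken from SCIgen, snarXiv, or the Postmodernism Generator); the point is only that every rule and every word is supplied in advance by the programmer, and randomness merely picks among the permitted expansions.

```python
import random

# Hand-written toy grammar: each rule and word is chosen by the programmer
# (an illustrative assumption, not any real generator's grammar).
GRAMMAR = {
    "S": [["NP", "VP"]],
    "NP": [["the", "N"], ["the", "ADJ", "N"]],
    "VP": [["V", "NP"]],
    "N": [["model"], ["signal"], ["framework"]],
    "ADJ": [["robust"], ["scalable"]],
    "V": [["extends"], ["constrains"]],
}


def generate(symbol: str, rng: random.Random) -> list[str]:
    """Expand a symbol by picking one of its productions at random."""
    if symbol not in GRAMMAR:  # terminal word: emit as-is
        return [symbol]
    production = rng.choice(GRAMMAR[symbol])
    words: list[str] = []
    for part in production:
        words.extend(generate(part, rng))
    return words


rng = random.Random(0)  # seeded so that runs are repeatable
print(" ".join(generate("S", rng)))
```

Every output is a grammatical sentence of five to seven words drawn from an eight-word vocabulary, so the random choices range over a tiny, pre-selected set rather than over all character strings.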

Notice, how injection of active information based on skill and knowledge narrows down the search?

That is tantamount to parking oneself next to an island of function in the sea of possibilities then reaching out with controlled randomness as a part of one’s design method:

Which of course further underscores the point: FSCO/I and especially dFSCI come about reliably by intelligently directed configuration.

That brings me back to some long ago now initial observations for the ID Foundations series:

>>Signs: I observe one or more signs [in a pattern], and infer the signified object, on a warrant:

I: [si] –> O, on Wa –> Here, as I will use “sign” [as opposed to “symbol”], the connexion is a more or less causal or natural one; e.g. a pattern of deer tracks on the ground is an index, pointing to a deer.

(NB, 02:28: Sign can be used more broadly in technical semiotics to embrace “symbol” and other complexities, but this is not needed for our purposes. I am using “sign” much as it is used in medicine, at least since Hippocrates of Cos in C5 BC, i.e. to point to a disease on an objective, warranted indicator.)

b –> If the sign is not a sufficient condition of the signified, the inference is not certain and is defeat-able; though it may be inductively strong. (E.g. someone may imitate deer tracks.)

c –> The warrant for an inference may in key cases require considerable background knowledge or cues from the context.

d –> The act of inference may also be implicit or even intuitive, and I may not be able to articulate but may still be quite well-warranted to trust the inference. Especially, if it traces to senses I have good reason to accept are working well, and are acting in situations that I have no reason to believe will materially distort the inference.

e –> The process of observation may be passive, where I simply respond to effects of the sign-emitting object; or it may involve active emission of signals or interaction with the object. For instance, we may contrast passive and active sonar sensing here, noting that both modes are used by sea-animals as well as technical systems. (NB: “Object” is here used in a very broad sense [u/d 02:17: it includes objects and credibly objective states of affairs].)

f –> A sign can also be iconic, i.e sufficiently resembling [u/d, 02:17: or representing] the object to be recognisable as a representation, as a general class [a rock shaped like a face] or in specific [a sculptural portrait]. [u/d 02:28: In the case of a mace in its rest in Parliament, unless an elaborate form of a former weapon sits there, Parliament is not legitimately in session.]>>

Obviously, even without knowing a detailed method, I can test a sign’s claimed reliability. If I can show that it fails, the sign has been shown to be less than a unique, credibly 100% reliable indicator of what it signifies: that is, it has been shown capable of leading us to infer state of affairs S when the actual state is NOT-S, a Type I (false positive) error.

(No-one has seriously proposed that the design inference process is immune to Type II false negative errors; in fact the threshold of complexity of 500 – 1,000 bits is deliberately willing to accept false negatives in order to gain high confidence that the positive is reliable. And dismissive sniffing about big numbers is beside the point.)
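
For a sense of scale, the configuration spaces behind the 500 to 1,000 bit threshold can be computed directly. This is a minimal sketch with an illustrative function name; it only counts the distinct configurations of an n-bit string (2^n) and assumes nothing further.

```python
def configuration_count(bits: int) -> int:
    """Number of distinct configurations of a string of `bits` binary digits."""
    return 2 ** bits


for bits in (500, 1000):
    count = configuration_count(bits)
    # Report the order of magnitude rather than the full integer.
    print(f"{bits} bits: about 10^{len(str(count)) - 1} configurations")
```

That is roughly 3.27 × 10^150 possibilities at 500 bits and 1.07 × 10^301 at 1,000 bits.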

So, nope, one does not need to have detailed models of howtwerdun etc in order to reason on signs.

In this case: