
Over at Why Evolution Is True, Professor Jerry Coyne has responded to neurosurgeon Michael Egnor’s recent article arguing that materialism cannot account for our ability to form abstract concepts, such as the concept of “good” (Free Will is Real and Materialism is Wrong, Evolution News and Views, January 15, 2015). As Professor Egnor puts it:

Intellect and will are immaterial powers, and obviously so. Here’s why.

Let us imagine, as a counterfactual, that the intellect is a material power of the mind. As such, the judgment that a course of action is good, which is the basis on which an act of the will would be done, would entail “Good” having a material representation in the brain. But how exactly could Good be represented in the brain? The concept of Good is certainly not a particular thing — a Good apple, or a Good car — that might have some sort of material manifestation in the brain. Good is a universal, not a particular. In fact the judgment that a particular thing is Good presupposes a concept of Good, so it couldn’t explain the concept of Good. Good, again, is a universal, not a particular.

So how could a universal concept such as Good be manifested materially in the brain?

Good must be an engram, coded in some fashion in the brain. Perhaps Good is a particular assembly of proteins, or dendrites, or a specific electrochemical gradient in a specific location in the brain.

…Obviously there’s nothing that actually means Good about that particular protein in that particular location — one engram would be as Good as another — so we would require another engram to decode the hippocampal engram for Good, so it would mean Good, and not just be a clump of protein….

In short, any engram in the brain that coded for Good would presuppose the concept of Good in order to establish the code for Good. So Good, from a materialist perspective on the mind, must be an infinite regress of Good engrams. Engrams all the way down, so to speak, which of course is no engrams at all.

Coyne’s criticisms of Professor Egnor’s argument

Objection 1: There’s no hard-and-fast distinction between universals and particulars

Professor Coyne finds this argument puzzling and “opaque,” for several reasons. First, he rejects the notion of there being a hard-and-fast separation between universals and particulars:

After all, when I think of an apple, I usually don’t think of a particular apple, but the concept of an apple, although I could think of a particular apple, like the one I’m eating at the moment (a tart Granny Smith). But in many cases when you think of objects, you think of ideal reifications of those objects, and those are concepts. Further, when I start thinking about “justice,” or “the good,” my mind is often focused on particular situations: “would it be good to do X?”, or “what might be the effect on the world everyone did Y”?, and those also involve representations of the real world. The particular and the universal ineluctably blur together…

Now, Professor Coyne is perfectly correct in saying that when I think of an apple, what I have in mind is a universal concept of an apple. Indeed, I would add that when I think only of a particular apple, I’m not really thinking of an apple at all: I’m just imagining and/or remembering one. And to do either of those things, I don’t need universal concepts.


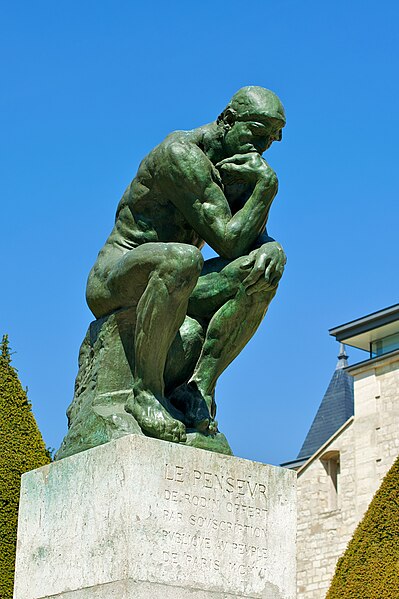

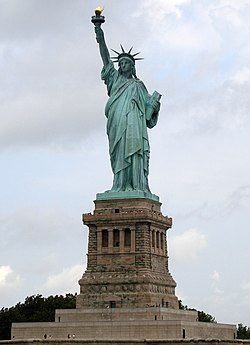

Coyne also appears to believe that we can have a concept of a particular object. On this point, I believe he is mistaken: following Aristotle, I would maintain that a concept can only apply to a class of objects. For instance, someone can have a general concept of a statue, but one cannot have a concept of the Statue of Liberty. The reason is that a concept functions as a kind of rule for deciding whether an object belongs to the class in question or not. In deciding whether or not an object is a statue, I have to ask myself: is it three-dimensional? And was it carved, cast or molded into the shape it now has? Only if the answer to both questions is “yes” is the object eligible to be considered as a statue. In other words, there are criteria that must be satisfied before an object is deemed a member of a class. But with the particular object known as the Statue of Liberty, the situation is quite different: the issue here is not whether the object satisfies certain criteria, such as the criteria we use to classify something as a statue, but rather, whether it is the same object as the one that was originally named the Statue of Liberty. To establish this fact, we need to ascertain whether the object in question is spatio-temporally continuous with the work of art that was originally designed by French sculptor Frédéric Auguste Bartholdi, subsequently given by the people of France to the United States, and dedicated by President Grover Cleveland on October 28, 1886.

Professor Coyne’s subsequent observation that “when you think of objects, you think of ideal reifications of those objects, and those are concepts” is somewhat muddled. My ideal reification of my favorite football team or vacation spot certainly does not deserve to be called a concept: it’s just a window-dressed image of a particular object. And as the philosopher Aristotle pointed out 2,300 years ago in his De Anima, a concept is not the same thing as an image: my image of the Sun (which we now know to be a common type G star) is less than a foot across, yet in reality, I understand it to be larger than the Earth (since stars need to be large and massive in order to generate heat and light). But if Coyne is arguing that concepts often serve as ideals which individuals falling under those concepts may not always live up to, he is surely correct: for instance, my ideal concept of a cat is one of a four-footed domesticated carnivorous mammal with protractible and retractable claws, even though I know that some cats have only three feet, some cats turn feral when released into the wild, some old toothless cats are no longer able to chew meat, and some unfortunate cats have lost all their claws. The ideal which my mental concept of a cat refers to describes what a cat should be like, and to the extent that a particular cat falls short of that ideal, it is (biologically speaking) a less-than-perfect specimen, even if it makes an excellent companion. And while the biological concept of a cat was indeed constructed by scientists after they looked at a variety of particular individuals, it is more than a mere mélange of those individuals: after all, even if (by some misfortune) the majority of the world’s cats happened to be three-footed or toothless or otherwise defective, that would not make any of these defects normal. They would still be defects.
It is a reductionistic fallacy to argue from the fact that we cannot form a universal concept (such as “cat”) without looking at particular individuals to the conclusion that universals are simply aggregates or averages of particulars.

For the benefit of readers, I should mention at this point that while thinkers in the Aristotelian tradition (such as Professor Egnor and myself) would maintain all concepts are universals, they would not argue that all concepts are abstract. The concept of a cat is universal but not abstract, as it refers to a type of animal; whereas the concept of goodness is both universal and abstract.

Objection 2: Why are abstract concepts tied to the brain, if they’re not material?

Next, Professor Coyne wonders why our abstract universal concepts are associated with activity in specific regions of the brain, which, if they are damaged, result in our losing those concepts altogether:

In fact, when you think about more abstract things, like God or faith, parts of the brain light up in brain scans. Why should they if such notions are immaterial?

…For you can demolish people’s notion of the good, and affect their volition, by manipulating or effacing regions of the brain. If those had no materialistic grounding, why would that be?


The question that Coyne poses here is a reasonable one. The short answer is that concepts can only be expressed (and shared) in public by using particular words and by invoking certain mental schemas (not images) which represent the concept which we are talking about. I would argue that the brain stores information relating to the words we use to talk about our concepts, as well as the schemas that we use to represent them. For concepts of material entities (e.g. the concept of a cat), the brain also stores images of what a typical cat looks like (such as the image above of a Turkish Van kitten, courtesy of Wikipedia), which enable us to rapidly identify one when we see one. Severe damage to the language-processing areas of my brain may make me unable to recall the word “cat,” but it surely does not eradicate my concept of a cat, and if I were to re-learn the word “cat” while recovering from a nasty stroke, I would not be re-acquiring the concept of a cat, but simply learning how to refer to that concept. Damage to other parts of my brain may leave me unable to recognize cats; nevertheless, I may still know and be able to describe what a cat is, so my concept of a cat has not been impaired.

What if my mental schema of a cat, which is stored in my brain, were somehow destroyed, with the result that I was no longer able to provide a definition of a cat, because I could no longer link it to other terms such as “carnivore,” “mammal” and “protractible”? It seems tempting to conclude that since I now lack the concept of a cat and must be re-taught the mental schema which I formerly possessed in order to re-acquire the concept of a cat, therefore the concept of a cat can be equated with the mental schema we use to represent it. But as I pointed out in my recent post, Remembering Rameses, what this argument overlooks is that our concepts are normative: they function as rules. The content of a concept can certainly be stored in the brain, but its normativity, or “ought-iness,” cannot.

What I’m trying to say here is that a concept should not be regarded as a “thing.” Even to think of it as a higher-level, more general kind of thing would be a mistake. Rather, we should think of a concept as having the logical form of a rule which tells us how to think about a designated class of objects (e.g. statues or cats). We can think of this rule as having the following general form:

You should think of a [insert class-name here] as having certain essential attributes – [attribute-1], [attribute-2], …. , [attribute-n] – as well as certain parts or components – [component-1], [component-2], …. , [component-m] – which are related as follows: [insert description of structure here].

The term “attributes” is meant to be a broad one, which encompasses the properties, activities, behavioral tendencies and relationships to other things that characterize a given class of entities.

What I would maintain is that for human beings, the brain stores all the stuff in square brackets. And that’s all. However, the stuff in square brackets doesn’t give us the notion of a rule, which is fundamental to any concept; nor does it express the underlying metaphysical lens through which we comprehend the different kinds of entities in our world. That’s why it’s absurd to say that the brain stores concepts as such. What the brain stores is the content of concepts. It does not store the normativity which makes them concepts, or rules that we can rely on, rather than mere generalizations that happen to be true by accident.
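For readers who find it easier to think in code, the distinction can be sketched roughly as follows. This is only an illustration under my own assumptions: the names `ConceptContent` and `render_rule` are hypothetical, and the “structure” text for the cat is a made-up placeholder. The record holds only the stuff in square brackets; the “You should think of…” wrapper is supplied from outside by the function that renders it.

```python
from dataclasses import dataclass, field


@dataclass
class ConceptContent:
    """Hypothetical record holding only the bracketed slots of the template."""
    class_name: str
    attributes: list[str] = field(default_factory=list)
    components: list[str] = field(default_factory=list)
    structure: str = ""


def render_rule(content: ConceptContent) -> str:
    """Fill the template's slots.

    The normative wrapper ("You should think of ...") is written here,
    in the function; it is not part of the stored record.
    """
    return (
        f"You should think of a {content.class_name} as having certain "
        f"essential attributes ({', '.join(content.attributes)}) as well as "
        f"certain parts or components ({', '.join(content.components)}) "
        f"which are related as follows: {content.structure}."
    )


# Illustrative content only, loosely based on the cat example in this post.
cat = ConceptContent(
    class_name="cat",
    attributes=["four-footed", "domesticated", "carnivorous", "mammal"],
    components=["protractible and retractable claws"],
    structure="the claws are carried on the feet",  # placeholder description
)
rule_text = render_rule(cat)
```

Nothing stored in `cat` says that anyone should think of cats in this way. Of course, the sketch is only an analogy for the content/normativity distinction, not an argument for it.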

Objection 3: Michael Egnor’s argument leads to the absurd conclusion that none of our concepts are encoded in the brain


Finally, Professor Coyne contends that Professor Egnor’s “engram” argument proves too much, since it could be used to show that not only abstract concepts but also concepts of material objects (such as an apple) could not be encoded in the brain either:

Now maybe I’m missing something, but I don’t understand why pondering concepts can’t either be coded in the brain, or be taken in from the environment and run through one of the brain’s computer programs (i.e., what we call “pondering” or “reasoning”). The error in Egnor’s thinking, it seems to me, is twofold: thinking that a concept of Good, or other concepts, must be coded in the brain before you think about them (they well may be, but needn’t be), and claiming that if they were coded in the brain, like any memory, they could not be material because they’d somehow require another material object to decode the concept, and so on and so on and so on.

But that argument can be made for anything. You could say that if the idea of an apple was coded in the brain, you’d need another “engram” to “mean that it signifies the code for the Apple engram,” and so on and so on and so on.

Now, I should point out here that I don’t think that Professor Egnor’s “infinite regress” argument works, for reasons that I have explained in a previous post, titled, Remembering Rameses. But we can formulate an argument against materialism without appealing to infinite regresses. Thomist philosopher David Oderberg summarizes the argument as follows in his essay, Concepts, Dualism, and the Human Intellect:

A problem arises for the view that the mind, considered just as the brain (or some other physical entity or system), acts in a purely material way. For if the abstracted forms – the concepts – are literally in the mind so conceived, we should be able to find them, just as we are able to find universals in rebus by finding the things that instantiate them or the things of which they are true. We can find triangularity by finding the triangles, and redness by finding the red things. But we cannot find the concepts of either of these by looking inside the brain. Nothing in the brain instantiates redness or triangularity; when Fred acquires the concept of either, nothing in his brain becomes red or triangular. Yet these concepts must be in his mind, and if the mind just is the brain they should be in his brain; yet they are not.

It should be noted that the above argument works equally well for any universal concept, whether abstract or not. Consequently, there is no point in Professor Coyne’s objecting to dualism on the grounds that Michael Egnor’s argument would imply that even concepts of material entities cannot be stored in the brain. That is the very point that Egnor was arguing for!

Coyne is certainly correct in observing that our ability to form concepts is in some way dependent on the brain. But as Dr. Oderberg points out in his article, we need to distinguish between two different kinds of dependence – intrinsic and extrinsic. Intrinsic dependence is what needs to be established, in order to demonstrate the truth of materialism. For if our ability to form and store concepts is only extrinsically dependent on the brain, then we haven’t shown that we perform these activities with our brains; all we have shown is that we cannot perform them without our brains:

The idea is that intellectual activity – the formation of concepts, the making of judgments, and logical reasoning – is an essentially immaterial process. By essentially immaterial is meant that intellectual processes, in the sense just mentioned, are intrinsically independent of matter, this being consistent with their being extrinsically dependent on matter for their normal operation in the human being. Extrinsic dependence, then, is a kind of non-essential dependence. For example, certain kinds of plant depend extrinsically, and so non-essentially, on the presence of soil for their nutrition, since they can also be grown hydroponically. But they depend intrinsically, hence essentially, on the presence of certain nutrients that they normally receive from soil but can receive via other routes. Something similar is true of the human intellect.

Perhaps the Aristotelian-Thomistic philosopher Mortimer Adler summed it up best when he declared: “You don’t think with your brain, but you can’t think without it.” Thinking itself is a non-bodily, intellectual activity; but for human beings, this activity cannot take place without the occurrence of a host of subsidiary activities in the brain and central nervous system, which store information about the world around us, and about the content of our memories and concepts.

Finally, Professor Coyne does not seem to have a clear grasp of the difference between Cartesian dualism and the Aristotelian-Thomistic dualism espoused by Professor Michael Egnor. On the latter view, all living things have a soul, and an organism’s soul is simply its underlying principle of unity. The human soul, with its ability to reason, does not distinguish us from the animals; it distinguishes us as animals: we are rational animals. The unity of a human being’s actions is actually deeper and stronger than that underlying the acts of a non-rational animal: rationality allows us to bring together our past, present and future acts, when we formulate plans. And when St. Thomas Aquinas argues that the acts of the intellect (e.g. understanding a concept) are not the act of a bodily organ, he is not claiming that there is a non-animal act which is engaged in by human beings. Rather, he is asserting that not every act of an animal is a bodily act. Professor Coyne might enjoy reading Fr. John O’Callaghan’s perspicuous article, From Augustine’s Mind to Aquinas’ Soul, which contrasts the substance dualism advocated by St. Augustine (354-430) and much later, in a somewhat different form, by Rene Descartes (1596-1650), with the more sophisticated hylemorphic dualism endorsed by St. Thomas Aquinas (1225-1274). I would also recommend Dr. David Oderberg’s article, Hylemorphic Dualism in E.F. Paul, F.D. Miller, and J. Paul (eds) Personal Identity (Cambridge: Cambridge University Press, 2005): 70-99.

Sundry objections to Professor Egnor’s argument from Jerry Coyne’s readers

As one might expect, most of Professor Coyne’s readers declared themselves unimpressed with neurosurgeon Michael Egnor’s argument against materialism, based on our ability to form abstract concepts, such as the concept of goodness. I shall now attempt to summarize their objections under several headings, and respond to each of them briefly.

Computers can form abstract concepts, too

Ben Goren wrote:

I punch 1 + 1 into my calculator, and it understands the abstractions and is answer, “2.” Where are those ones, plusses, and twos in the calculator? I can open it up, but I don’t see any little number marbles rolling around – yet it still deals with the purest of abstractions with superb aplomb.

Ben Goren is anthropomorphizing here, as Professor Egnor aptly demonstrates in his recent article, Your Computer Doesn’t Know Anything (Evolution News and Views, January 23, 2015). Ben Goren’s calculator spits out the answer “2” when given the input “1 + 1,” but there is no need to suppose that it understands what it does, while there is excellent reason to believe that it understands nothing at all. Understanding is an inherently critical activity: I may think that I grasp a concept, but my concepts are by their very nature open to correction from other people, who may know better than I do. A concept which cannot be corrected isn’t a concept at all. So if calculators really understand what they are doing, they should be able to critique calculations performed by other machines and say what’s wrong with them, when they give the wrong answer (as they may do, when there’s an electronic glitch or when the correct answer is out of the numerical range of the calculator). Calculators should also be able to say how they arrived at their answer to a given sum, and why it’s correct, if they truly understand what they are doing. Finally, they should be able to argue their case against a variety of objections that might be raised, and not merely in a rote, pre-programmed fashion, but in a way that someone who genuinely understood mathematics might do. (Parroting an argument isn’t the same as understanding it.) Calculators can do none of these things, and I’m not holding my breath that they will any time soon.

Animals can form abstract concepts as well

Ben Goren also wrote:

Alex the parrot and at least that one dog (and quite a number of primates starting with Koko) have extensive vocabularies that include adjectives. You can present a set of toys the animal has never seen before and ask it to bring you the red ball and it will.

If that’s not abstract reasoning, I don’t know what is.

Alex the parrot died in 2007. Alex had a vocabulary of 150 words, knew the names of 50 objects, and was able to identify their colors, shapes and what they were made of. He could count up to six, including the number zero. He could also use the terms “bigger,” “smaller,” “same” and “different”.

Nevertheless, I would argue that Alex’s “concept” of redness or triangularity was not really a concept at all; instead, I would say that it was a general idea. For instance, Alex’s idea of red can be easily explained in terms of a range: he formed that idea because certain cones in his eyes were sensitive to a given range of frequencies. The reason why Alex’s idea of red is not a concept is that he did not think of it as a rule to which red objects must conform. By contrast, our modern scientific understanding of red has the form of a rule: very simply, to be red means to emit light at certain wavelengths (620–740 nanometers), and if an object that appeared to be red was found not to be emitting light in those wavelengths, then we’d say it wasn’t really red. Color illusions can also make an object look red, even when it isn’t. If Alex didn’t have the concept of an illusion, then one can argue that he didn’t have the concept of red, or any other color.

What about Alex’s ability to identify shapes, such as triangles? This looks more convincing, at first sight. However, the ability to distinguish triangular objects from other objects doesn’t necessarily imply an ability to say what a triangle is. For instance, did Alex understand the rule that a triangle is a closed figure? And did he understand the rule that the sides of a triangle have to be perfectly straight? Somehow I doubt it. Nevertheless, he had a good brain that enabled him to identify, with a high degree of accuracy, the objects we call triangles. And that’s no mean feat. Just don’t call it a concept.

Is “goodness” a computational concept?

Eric wrote:

How could Good be coded?

The same way neural nets learn to categorize inputs. A ‘general mental concept’ consists of a neural subset that takes a wide variety of inputs about disparate things and cranks out a much more limited number of outputs. A decent programmer could probably make one that allowed you to input the name of any fruit or vegetable and have it output “fruit” or “vegetable.” In the same way, we learn to input a wide variety of actions and output a notion of “that’s good” or “that’s evil.”

Through our intellect we grasp and comprehend universals, not particulars,

He should read Babboon Metaphysics. Babboons (sic) can do that too. I guess that means they have souls?

I have already addressed the alleged ability of non-human animals to form concepts (see my remarks on Alex the parrot). And I’d be willing to wager that no baboon can identify categories of objects better than Alex did.

Finally, in response to Eric’s claims about neural nets, I’d like to set him a simple challenge: let him build me a neural network that can do a better job than humans of evaluating “goodness” across a wide variety of contexts (including virtuous people, pets that please their owners, organisms that are thriving, and artifacts that do their job well), explain the basis of its judgments and argue its case against human assessors who may disagree with it, and then I’ll be prepared to credit Eric’s neural net with concepts.

Is “goodness” simply whatever I like?

Rickflick endorsed the long-discredited emotivist “Boo-Hurrah” theory of goodness, which most philosophers gave up fifty years ago:

The universal, good, is the name we give to the set of actions, behaviors, and events that we would approve of. The word, good, is certainly representable in the brain, just like other nouns, except it is known as an abstract noun.

Another commenter named Chris disputed whether the term “goodness” actually refers to anything at all:

Oh, and “good” isn’t an actual thing. It’s a value judgement. Anyone arguing from this position should provide a sample of it on a plate.

Well, how about a sample of love on a plate, then? Or is love unreal too?

In any case, more than fifty years ago the utilitarian philosopher Richard Brandt provided a crushing refutation of the view that goodness is simply whatever I happen to like, when he pointed out that people who change their moral views see their prior views as mistaken, not just different, and that this would not make sense if their attitudes were all that had changed:

Suppose, for instance, as a child a person disliked eating peas. When he recalls this as an adult he is amused and notes how preferences change with age. He does not say, however, that his former attitude was mistaken. If, on the other hand, he remembers regarding irreligion or divorce as wicked, and now does not, he regards his former view as erroneous and unfounded…. Ethical statements do not look like the kind of thing the emotive theory says they are.

(Brandt, Richard. 1959. “Noncognitivism: The Job of Ethical Sentences Is Not to State Facts” in Ethical Theory, Englewood Cliffs: Prentice Hall, p. 226.)

Another, more fundamental problem with the view that a good X is simply an X that I like is that it would entail that a good argument is simply an argument I happen to like – which would render it impossible for outsiders to adjudicate debates between two people. In practice, however, we routinely appeal to external, independent criteria (validity and soundness) when evaluating arguments.

Is “goodness” a fuzzy concept?

Commenter Johzek suggested that the concept of “good” is a fuzzy concept which we apply to a class of objects which are loosely similar to one another, when he wrote:

The concept named by the word ‘good’ is formed by our minds from all the particular instances which share a certain similarity and which we have come to identify with the word ‘good’. This concept, like all concepts, has a universal aspect to it since if an observed instance fits the definition it too is a member of the class subsumed by the concept.

In a similar vein, Sastra argued that the resemblance between different things which are called “good” is only a vague one:

The concept of “Good” is cobbled together from personal observations and experiences which have the same aspect in common, that of being desirable, pleasing, or satisfactory. A chocolate bar is not “good” in the same way that an expression of gratitude is, but there’s a vague resemblance which our brains connect together.

I would respond by posing the following dilemma. Either there is some common feature (or set of features) which is shared by things which are good, or there is not. If there is not, then it will be impossible, in practice, to judge whether an object is sufficiently similar to things deemed to be good as to warrant inclusion in the set, and we will never be able to decide whether it is good or not. But if there is such a feature (or set of features) shared by good things, then judgments of similarity will not be required: all we need to ascertain is whether the object possesses the feature (or features) in question.

Perhaps Johzek would respond that goodness denotes a cluster of features which are loosely associated with one another in the real world, making the boundaries of “goodness” somewhat arbitrary. For instance, reasonable people might disagree on how many of these features an object would need to possess, in order to be called “good.” Now, there are indeed some loose concepts – e.g. the concept of a game – which are inherently fuzzy, for precisely this reason. But it would be illogical to conclude that all or even most of our concepts are fuzzy, simply because some of them are. After all, many of our natural concepts – particularly in physics and chemistry – are sharply defined. We know exactly what a proton is, what an amino acid is, and what a DNA molecule is. The same goes for many of our concepts of artifacts: diodes, engines, wheels and clocks are all fairly clearly defined, even if many other artifacts (chairs, bags, cars and cups) are not.

However, there is a more profound reason for expecting the concept of “good” to be a clearly defined one: it is our most fundamental evaluative concept. If this concept is inherently vague, then our evaluations should always be tentative and uncertain. In practice, however, there are many situations where evaluations are routinely carried out in a very black-and-white fashion: mathematics tests, science experiments, sports games and job applications, to name just a few. This suggests that the underlying concept of “good” is a precise one, even if its application in certain cases is rather tricky.

What goodness is

And indeed, if we think for a little while about the concept of “good,” we can readily discern that it is a very simple one. A thing is good if it is what it should be. When we say that an object is good, we mean that it satisfies the norms which define an ideal specimen of that object. These norms may be either built into the nature of that object (e.g. the norm that a good cat should be four-legged and able to eat meat), or they may be externally imposed (e.g. the norm imposed by judges at a dog show that a good German Shepherd should have certain specified proportions in the front and rear of its body). But insofar as the norms themselves are clearly defined, our judgments of an object’s goodness will be straightforward ones. Of course, there will be certain classes of things to which judgments of goodness are non-applicable, because there are no norms to which they must conform. It would be utterly meaningless to speak of a water molecule as being good or bad, for instance.

The concept of goodness, as we have seen, involves an appeal to norms which are either intrinsic to things themselves or agreed on by the community of people performing the evaluation. And while the content of a norm – i.e. the actual standards or criteria that define an object as a good specimen of its kind – can be stored in the brain, their prescriptiveness – or the fact that they ought to be satisfied – cannot. Making rules and reflectively adhering to those rules are not activities of which a material system is capable – not because matter knows no “oughts,” but because matter is simply that which scientists can describe: nothing more and nothing less. Matter might blindly conform to certain prescriptions – as objects do when they obey the laws of Nature – but matter itself cannot prescribe anything. It takes a mind to do that.